So, picture this: You’re at a party, and someone’s bragging about their latest study. They throw around terms like “95 percent confidence interval” like confetti, and everyone nods along, pretending to get it. But inside, you’re secretly thinking: what on Earth does that even mean?

Well, you’re not alone! Confidence intervals can sound all fancy and intimidating, but they’re really just a way to show how sure researchers are about their estimates. Like when someone says they’re 95 percent sure they’ll get the pizza order right—there’s still that chance they screw it up!

The thing is, understanding these intervals can totally change the way you look at research. It’s not just about numbers; it’s about trust. So let’s unravel this thing together and make sense of the math behind the claims people throw around. Sound good?

Understanding the 95% Confidence Interval in Scientific Research: Implications and Interpretations

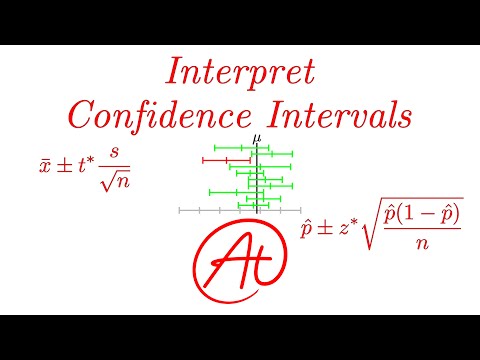

Understanding the 95% Confidence Interval in scientific research can feel a bit like trying to decipher a secret code. But I promise you, once you get the hang of it, it’s not that complicated. Basically, when researchers report a 95% confidence interval for a result, they’re giving a range of plausible values for the true quantity they’re estimating, like a mean or a proportion, based on the data they collected.

So, let’s break this down a bit more. A confidence interval is essentially a way to express uncertainty around an estimate. Imagine you toss a coin 100 times and get 55 heads and 45 tails. That gives you an estimate of the probability of getting heads as 0.55. Now, if you calculate a 95% confidence interval with the usual normal approximation, you get roughly 0.45 to 0.65. The “95%” means that if you were to repeat this coin-tossing experiment many times, around 95 out of every 100 confidence intervals you construct would contain the true probability of getting heads.
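If you’d like to check the coin-toss numbers yourself, here is a minimal sketch. It assumes the simple normal-approximation (Wald) formula, which is only one of several ways to build an interval for a proportion; the function name `wald_interval` is just a label made up for this example.

```python
import math

def wald_interval(successes, n, z=1.96):
    """Normal-approximation (Wald) interval for a proportion.

    z=1.96 is the standard multiplier for a 95% interval.
    """
    p_hat = successes / n                      # point estimate, 0.55 here
    se = math.sqrt(p_hat * (1 - p_hat) / n)    # standard error of the estimate
    return p_hat - z * se, p_hat + z * se

low, high = wald_interval(55, 100)
print(f"95% CI: {low:.3f} to {high:.3f}")  # 95% CI: 0.452 to 0.648
```

Notice that the interval includes 0.50, so 55 heads in 100 tosses is not enough on its own to call the coin unfair.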

Now here’s where it gets interesting—and slightly tricky! The confidence interval doesn’t tell you there’s a 95% chance that your specific interval (like that one from the coin toss example) contains the true probability; rather, it’s about how often this method will produce intervals that do include the true value if you repeated your experiment countless times.
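The “repeat it countless times” idea is easy to try on a computer. The sketch below is a small simulation under made-up assumptions: a fair coin (true probability 0.5), 100 tosses per experiment, 2,000 experiments, and the simple normal-approximation interval. The names `wald_interval` and `coverage` are hypothetical helpers for this illustration.

```python
import math
import random

def wald_interval(successes, n, z=1.96):
    """Normal-approximation 95% interval for a proportion."""
    p_hat = successes / n
    se = math.sqrt(p_hat * (1 - p_hat) / n)
    return p_hat - z * se, p_hat + z * se

def coverage(true_p, n, experiments, seed=0):
    """Share of simulated experiments whose interval contains true_p."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    hits = 0
    for _ in range(experiments):
        heads = sum(rng.random() < true_p for _ in range(n))
        low, high = wald_interval(heads, n)
        if low <= true_p <= high:
            hits += 1
    return hits / experiments

rate = coverage(true_p=0.5, n=100, experiments=2000)
print(f"Share of intervals containing the true value: {rate:.3f}")
```

The share should land close to 0.95, typically a touch under because this simple formula is only an approximation. Each individual interval either contains 0.5 or it doesn’t; the 95% describes the long-run track record of the method.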

You might be wondering why this matters in real life. Well, think about medical research trying to find out how effective a new drug is for lowering blood pressure. If they report the drug’s effect on blood pressure with a 95% confidence interval from -10 mmHg to -5 mmHg, it suggests that, in the population being studied, the average reduction plausibly lies somewhere between 5 and 10 mmHg. Because the whole interval sits below zero, the data are also hard to square with the drug doing nothing at all.

Let’s cover some key points on interpreting these intervals:

- Narrow vs Wide: A narrow interval indicates more precision in your estimate, while a wide one suggests more uncertainty.

- Doesn’t Guarantee Reality: An interval that excludes zero doesn’t prove the effect is real or that the treatment caused it. Bias, confounding, or plain chance could still be at play.

- Not About Individual Outcomes: It reflects population estimates—not individual cases!

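- P-value Connection: A 95% CI and a two-sided p-value from the same test tell the same story: if the CI does not cross zero, the p-value is below .05, and vice versa. The interval adds something the p-value lacks, namely how large or small the effect could plausibly be.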
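To see how a p-value and an interval line up, here is a rough sketch that works backward from a reported interval. It assumes the interval was built with a normal approximation and is symmetric around the estimate, which real studies may not satisfy; `summarize` is a hypothetical helper, and the second interval (-6 to 1) is made up purely for contrast with the blood pressure example.

```python
from statistics import NormalDist

def summarize(low, high, null_value=0.0, level=0.95):
    """Recover estimate, standard error, and two-sided p-value
    from a symmetric normal-approximation interval."""
    z_crit = NormalDist().inv_cdf(1 - (1 - level) / 2)  # about 1.96 for 95%
    estimate = (low + high) / 2
    se = (high - low) / (2 * z_crit)
    z = (estimate - null_value) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    excludes_null = not (low <= null_value <= high)
    return estimate, se, p_value, excludes_null

# Blood pressure example from the text: interval entirely below zero
est, se, p, excl = summarize(-10, -5)
print(f"estimate={est}, p={p:.2g}, excludes zero: {excl}")

# Made-up interval that crosses zero, for contrast
est2, se2, p2, excl2 = summarize(-6, 1)
print(f"estimate={est2}, p={p2:.2f}, excludes zero: {excl2}")
```

In the first case the p-value comes out far below .05 and the interval excludes zero; in the second the p-value is above .05 and the interval includes zero. Under these assumptions the two checks always agree.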

Now picture this: It was back in college when I first heard about confidence intervals during my stats class. My professor handed out candies—yeah, candies! He said we’d each pick some at random and then guess how many were red ones in total based on our sample. The point wasn’t just math; it was realizing how bounding our guesses gave us insights into what we might expect overall.

To sum up: understanding the 95% confidence interval allows researchers (and folks like us) to grasp how reliable their findings are without getting lost in complex numbers or jargon. It helps us understand not just what we found but also how much we can trust those findings going forward! So remember next time someone throws around that term—it’s all about figuring out where reality lies within those ranges!

Understanding the Interpretation of 95% Confidence Intervals in Scientific Research

Confidence intervals can sound super intimidating, but honestly, they’re not as complex as they seem. Let’s break down the idea of a 95% confidence interval. Basically, when researchers say they have a 95% confidence interval for a certain measurement, say the average height of a group of people, they’re saying that if they were to repeat their study 100 times and build an interval each time, about 95 of those intervals would contain the true average.

So here’s how it works: imagine you measure the heights of, oh I don’t know, let’s say 30 friends. You take all those measurements and calculate an average height. Now, based on that data and some statistical calculations, you find that your 95% confidence interval for the average height is between 5’5” and 5’9”. This means that you’re pretty confident (like really confident) that if you could somehow measure every single person in the same demographic, their average height would land somewhere between those two numbers.
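Here is one way those “statistical calculations” might look for 30 friends. This is a sketch only: the heights are randomly generated stand-ins (in inches), not real measurements, and it uses the standard t-based interval for a mean. The constant 2.045 is the two-sided 95% critical value of Student’s t with 29 degrees of freedom, hard-coded because Python’s standard library has no t-distribution.

```python
import math
import random
import statistics

T_CRIT_DF29 = 2.045  # 95% two-sided t critical value for n - 1 = 29

# Made-up sample: 30 heights in inches, centered near 5'7"
rng = random.Random(42)
heights_in = [rng.gauss(67, 3) for _ in range(30)]

mean = statistics.mean(heights_in)
# Standard error of the mean: sample standard deviation over sqrt(n)
se = statistics.stdev(heights_in) / math.sqrt(len(heights_in))
low, high = mean - T_CRIT_DF29 * se, mean + T_CRIT_DF29 * se

print(f"mean = {mean:.1f} in, 95% CI = {low:.1f} to {high:.1f} in")
```

With a different sample size you would need a different critical value, so treat the constant as specific to this example.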

One thing to keep in mind is that confidence intervals give you an idea of precision. A very narrow interval indicates more precise estimates. For instance, if your confidence interval for those heights was only from 5’7” to 5’8”, you’d feel even better about your estimate. But if it’s wide—let’s say from 5’2” to 6’2”—it suggests uncertainty in your data. So yeah, width matters!

Now let’s talk about what “confidence” actually means here. It doesn’t mean you’re guaranteeing the true value is within your range every time; it’s more like a degree of certainty based on repeated sampling. So don’t confuse it with being absolutely sure! Like if I said my favorite pizza place is always good—that’s more subjective than scientific.

Also, context counts. A confidence interval shouldn’t be interpreted in isolation. It’s crucial to consider other aspects like sample size and variability in the data. If your sample size is tiny or super diverse, then your interval might not be very reliable at all.

There’s also this concept called “overlapping intervals.” You might come across studies that compare groups by putting their confidence intervals side by side. If two groups’ intervals overlap quite a bit, that hints the difference between them may not be statistically significant, which takes some steam out of any big claims about differences. Treat it as a rough screen, though: two 95% intervals can overlap a little while the difference is still significant, so the safer check is a confidence interval for the difference itself.
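A quick numeric sketch shows why eyeballing overlap can mislead. All the numbers here are hypothetical: two independent groups with means of 10.0 and 13.3 and the same standard error of 1.0, compared using normal-approximation intervals.

```python
import math

Z = 1.96  # multiplier for a 95% normal-approximation interval

def interval(estimate, se):
    return estimate - Z * se, estimate + Z * se

# Hypothetical group summaries
mean_a, mean_b, se = 10.0, 13.3, 1.0

ci_a = interval(mean_a, se)        # about (8.04, 11.96)
ci_b = interval(mean_b, se)        # about (11.34, 15.26)
overlap = ci_a[1] > ci_b[0]        # do the two intervals share any values?

# Interval for the difference between two independent groups:
# the standard errors combine as the square root of the sum of squares.
diff = mean_b - mean_a
se_diff = math.sqrt(se**2 + se**2)
ci_diff = interval(diff, se_diff)  # about (0.53, 6.07)

print(f"intervals overlap: {overlap}")
print(f"difference CI: {ci_diff[0]:.2f} to {ci_diff[1]:.2f}")
```

The two group intervals overlap, yet the interval for the difference stays above zero. Heavy overlap is still a fair warning sign; slight overlap just isn’t proof of “no difference.”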

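And on a side note: just because an interval does not include zero doesn’t automatically make a result important! Excluding zero is what statistical significance at the 5% level looks like, but it says nothing about whether the effect is large enough to matter or whether the study was well designed. It’s all about context again; you really need to dive into the specifics behind what you’re studying.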

So basically, understanding confidence intervals helps us grasp how reliable our estimates are in scientific research. They help researchers communicate uncertainty clearly—and knowing how to interpret them can save us all from a whole lot of confusion down the road!

And hey—next time someone mentions a confidence interval at dinner? You’ll totally impress them with this knowledge!

Understanding 95% Confidence Intervals: A Scientific Approach to Data Interpretation in Research

Confidence intervals can seem like one of those tricky concepts in research that are hard to grasp at first. Like, you might be reading a study and suddenly get hit with “95% confidence interval,” and you’re left wondering what it means. No worries, I’ve got you covered. Let’s break it down nice and simple.

So, to start off, a **95% confidence interval** is a way for researchers to express how certain they are about their estimates from data. Imagine you have a jar full of jellybeans, and you want to figure out how many jellybeans are in there without counting every single one. You take a handful out, count them, then guess the total in the jar based on that handful. That guess comes with some uncertainty—and that’s where confidence intervals come into play!

When we say “95% confidence,” it means if we were to repeat this study over and over again—like taking many handfuls of jellybeans—the interval we calculate will cover the true number 95 out of 100 times, or almost all the time. So basically, you can think of it as a safety net for your estimate.

Imagine this: Say in one study, researchers estimate that the average height of a group of teenagers is 160 cm with a 95% confidence interval ranging from 155 cm to 165 cm. This tells you that they’re pretty sure (like really sure) that if they could measure every teenager in the group, the true average would fall somewhere between those two numbers.

Now let’s dive into some key points about these intervals:

- Wider vs. Narrower: A wider confidence interval means more uncertainty about your estimate. It’s like being less confident about that jellybean count because your handful was too small to go on.

- Sample Size Matters: The more jellybeans (or samples) you grab out of the jar, the tighter your confidence interval gets! Bigger samples lead to more reliable estimates.

- Not an Absolute: Just because an interval doesn’t include zero (for example) doesn’t mean something important is happening. It reflects what this particular data set shows, and the effect could still be too small to matter.
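The sample-size point is easy to put numbers on. As a sketch, assume a measurement with a standard deviation of 10 (a made-up value) and a normal-approximation interval for the mean, whose half-width is 1.96 times the standard deviation divided by the square root of the sample size.

```python
import math

def half_width(sd, n, z=1.96):
    """Half-width of a 95% normal-approximation interval for a mean."""
    return z * sd / math.sqrt(n)

for n in (25, 100, 400):
    print(n, round(half_width(10, n), 2))
# 25 3.92
# 100 1.96
# 400 0.98
```

Quadrupling the sample only halves the width, so tighter intervals get expensive quickly.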

But here’s something interesting: people sometimes misunderstand these intervals! Strictly speaking, it doesn’t mean there’s a 95% chance that the true value lies within the one range you’ve calculated; instead, it means that if many studies were run the same way, about 95% of the intervals they produce would contain the true value.

Let me throw another idea at you. Say researchers find a new drug shows promise for reducing headaches. They report an average reduction in pain with a 95% confidence interval from -3 to -1 points on some scale used for pain measurement. What this implies is pretty cool! The data are consistent with a true average relief of anywhere between 1 and 3 points, and the method that produced this range captures the true average in about 95% of repeated studies.

Lastly, while analyzing these results sounds all serious business—having fun with numbers often helps them become less intimidating! Think of interpreting confidence intervals as unraveling mysteries instead of crunching dry data.

Understanding something like **confidence intervals** can totally flip how you view scientific studies and research findings in general! And who knows? Maybe next time someone hits you with stats in conversation—you’ll impress them by explaining it like you’d just done some serious math homework!

So, you’ve probably heard someone throw around the phrase “95 percent confidence interval” in a research paper or maybe even in a casual chat about statistics. At first glance, it can sound super technical and maybe even a little intimidating. But it’s pretty essential if you want to get your head around how researchers say they know what they know.

Imagine you’re at a carnival, trying to guess how many jellybeans are in this giant jar. You take a handful and count them—let’s say you grab 50 beans. Based on those 50, you might guess the jar has about 200 jellybeans total. But here’s where it gets interesting: You could actually be way off! That’s where confidence intervals come into play.

When researchers calculate a 95 percent confidence interval, they’re trying to give us an estimate of where they think the true value lies based on the data they collected. Basically, they’re saying, “Hey! If we were to do this study over and over again with different samples, the ranges we calculate would capture the true average about 95 times out of 100.” So it’s not just about having that precise number; it’s more like giving us a safety net around their best guess.

But here’s my thing with confidence intervals: They can be kind of misunderstood. People sometimes think that there’s only a 5 percent chance that the true value falls outside that range—like it’s some sort of magical boundary that nothing can cross. It can feel misleading if you don’t dig into what those numbers really mean.

I once sat through a seminar where someone presented their findings on diet and exercise benefits with an impressive confidence interval. On paper, it looked solid! But when we talked with them afterward, they admitted that their sample size was small and not very diverse. That threw me for a loop because suddenly those numbers didn’t feel as rock-solid as I’d initially thought. It reminded me how important it is to consider context—the bigger picture behind those stats.

And let’s be real; there are also cases when these intervals might overlap significantly between different studies, leading to confusion about what conclusions we should draw from them. It makes me wish research papers included more discussion on these nuances rather than just tossing out numbers while expecting everyone to get on board with their interpretations.

So next time you’re sifting through research or hear someone mention that magic number—95 percent—take a moment to ponder what it really means beyond just stats on a page. It’s all about being curious and questioning the assumptions behind those figures. Sure, numbers have power—but understanding them? That’s where the real magic happens!