You know that moment when your computer does something you didn’t even ask it to? Like, it just knows what you want before you do? That’s the magic of machine learning!

Picture this: I once asked my phone for directions to a coffee shop, and it suggested a place I’d never even heard of. I ended up loving it! That’s machine learning at work—analyzing data and making predictions, like some kind of digital mind reader.

Now, let’s sprinkle in Python. This programming language has become the go-to buddy for scientists tackling everything from climate change to healthcare innovations. Seriously, it’s like giving your brain a turbo boost for solving complex problems.

So, if you’re curious about how this duo—Python and machine learning—is pushing the boundaries of scientific innovation, stick around. It’s a wild ride full of discovery, creativity, and maybe a little magic too!

Understanding the 80/20 Rule in Python: A Scientific Approach to Data Analysis and Optimization

So, you’ve probably heard of the 80/20 Rule, also known as the Pareto Principle. Basically, it suggests that *roughly 80% of your results come from 20% of your efforts*. Crazy, right? This concept is all over the place, from productivity hacks to economics. But what about in the world of Python and data analysis? Let’s break it down.

When you’re working with data in Python, especially in machine learning and scientific innovation, applying this rule can really help optimize your performance. The idea is to focus on that critical 20% of inputs that deliver the most significant outcomes.

Let’s say you’re building a model to predict something cool, like whether a tomato will taste sweet or sour. You gather loads of data: temperature, sunlight hours, soil pH level, you name it! But here’s where the magic happens: after running some analyses, you discover that just two features, temperature and soil pH, account for roughly 80% of your model’s predictive power (for example, its total feature importance). If you spend too much time tweaking other factors that barely change your results, you’re wasting effort.

- Prioritize Features: Identifying which features are crucial lets you streamline your dataset. This means less data processing time and faster training for models.

- Optimize Resources: Instead of pouring resources into every little detail like cleaning up every tiny anomaly in your dataset (which can be a pain!), focus on larger trends or patterns.

- Faster Iteration: If you know what truly matters early on, you can iterate faster on your model’s design and parameters.

You know how frustrating it can be when you’re stuck trying to make sense of an avalanche of data? That’s what I feel when I remember my college days digging through spreadsheets—if only I had focused on the most impactful variables instead! Seriously though, this approach could’ve saved me hours.

But let’s not forget about computational resources. The 80/20 Rule isn’t just for feature selection; it applies to model evaluation too. Imagine that 20% of your evaluation tests eat up most of the total run time. Speeding up just those few can cut run times drastically, and that keeps everything moving smoothly.
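To make that concrete, here’s a minimal sketch in plain Python. The test names and timings are made up for illustration, and `pareto_subset` is a hypothetical helper, not part of any library. It picks the smallest group of tests that together account for 80% of the total run time.

```python
def pareto_subset(values, share=0.8):
    """Return the names of the largest items that together reach `share` of the total."""
    total = sum(values.values())
    picked, running = [], 0.0
    # Walk through the items from largest to smallest.
    for name, value in sorted(values.items(), key=lambda kv: kv[1], reverse=True):
        if running >= share * total:
            break
        picked.append(name)
        running += value
    return picked


# Hypothetical run times in seconds for ten evaluation tests.
durations = {
    "test_fit_large_model": 120.0,
    "test_cross_validation": 48.0,
    "test_load_csv": 6.0,
    "test_scaling": 4.0,
    "test_metrics": 3.0,
    "test_plots": 3.0,
    "test_cli": 2.0,
    "test_config": 2.0,
    "test_utils": 1.0,
    "test_version": 1.0,
}

slow_tests = pareto_subset(durations, share=0.8)
print(slow_tests)
```

In this made-up example, two of the ten tests are responsible for the bulk of the run time, so those are the ones worth profiling first.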

You might wonder how to apply this practically using Python libraries like pandas, NumPy, or even scikit-learn. Well:

- Pandas: Use it to quickly filter out those critical features with commands like `df[['temperature', 'soil_pH']]` to zero in on what’s essential.

- NumPy: It handles the numerical work, such as computing correlations with `np.corrcoef`, which gives you quick insight into which features drive performance!

- scikit-learn: Use the feature importance scores exposed by tree-based models (like Random Forests, through the `feature_importances_` attribute) to reveal which inputs are game changers for your algorithm.
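Here’s a rough sketch of how those three pieces might fit together on the tomato example. Everything in it is an assumption for illustration: the data is synthetic, the numeric `sweetness` score stands in for the sweet/sour label, and the 80% cut-off is just the rule of thumb from above, not a recommendation from any of these libraries.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestRegressor

rng = np.random.default_rng(0)
n = 400

# Synthetic tomato measurements (made-up distributions).
df = pd.DataFrame({
    "temperature": rng.normal(24.0, 3.0, n),
    "sunlight_hours": rng.normal(8.0, 1.5, n),
    "soil_pH": rng.normal(6.5, 0.4, n),
})

# Hypothetical sweetness score driven by temperature and soil pH only.
sweetness = (
    0.5 * (df["temperature"] - 24.0)
    + 4.0 * (df["soil_pH"] - 6.5)
    + rng.normal(0.0, 0.2, n)
)

# NumPy: correlation of each feature with the target.
correlations = {col: np.corrcoef(df[col], sweetness)[0, 1] for col in df.columns}

# scikit-learn: impurity-based importances from a Random Forest.
model = RandomForestRegressor(n_estimators=100, random_state=0)
model.fit(df, sweetness)
importances = pd.Series(model.feature_importances_, index=df.columns)
importances = importances.sort_values(ascending=False)

# Keep the smallest set of top features whose cumulative importance reaches 80%.
cumulative = importances.cumsum()
n_keep = int((cumulative < 0.80).sum()) + 1
selected = list(importances.index[:n_keep])

# pandas: zero in on the selected columns.
df_small = df[selected]
print(importances.round(3))
print("Selected features:", selected)
```

On real data the split is rarely this clean, so treat the cut-off as a starting point and check that the smaller model still performs well on held-out data.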

The 80/20 Rule is all about working smarter—not harder—with your data analysis in Python. When you’re mindful about where you invest time and resources in machine learning tasks, it’s amazing how much more efficient and effective your work becomes!

This principle isn’t some abstract concept; it’s a practical tool for everyday problem-solving within scientific innovation. So next time you’re analyzing data or tuning an algorithm in Python, remember: focus on the impactful bits! It could save you significant headaches down the road!

Unlocking the Power of Python: The Essential Language for AI, Machine Learning, and Scientific Computing in Modern Science

Python is like that friend who can do everything—seriously! When it comes to AI, machine learning, and scientific computing, it’s become the go-to language for many researchers and developers. Let’s break down why Python is so powerful and how it fits into the landscape of modern science.

First off, it’s super user-friendly. Python’s syntax is clean and straightforward, making it easy to read and write. This has a lot to do with its popularity in academia. For example, if you’ve ever seen a piece of code that looks like a puzzle with weird symbols, you’d appreciate how Python keeps things simple. You could jump in without feeling overwhelmed.

Now, let’s talk about libraries. Python boasts a ton of them that are designed for specific tasks—some real heavy hitters! Libraries like Pandas help with data manipulation, NumPy is great for numerical calculations, and Matplotlib brings your data visualizations to life. Imagine trying to handle complex data without those tools—it would be like asking someone to build IKEA furniture without the manual!

Then we have machine learning. Libraries like TensorFlow and Keras are game-changers. They let you define and train a neural network in a handful of lines, because the layers, gradients, and optimizers come ready-made. For instance, let’s say you’re working on understanding patterns in huge sets of medical data; you could leverage these libraries to create predictive models quickly.

And guess what? The community support is massive! If you run into an issue or need advice on best practices, there’s no shortage of forums or documentation available online. It’s like having an entire library at your fingertips where everyone’s willing to help out.

Another cool aspect is how adaptable Python is across different platforms. Whether you’re on Windows, macOS or Linux, you can run your scripts seamlessly. This flexibility makes collaborating across teams much easier because everyone can work in their preferred environment.

But wait, there’s more! Python’s usefulness extends beyond Python code itself. It also plays well with other languages like C++ for performance-critical tasks. So if you’ve got some heavy lifting to do under the hood, you’re not locked into just using Python.

Now let’s get emotional here for a second: consider how this programming language has opened up opportunities for innovation in scientific research. Think about climate change models or predicting disease outbreaks—Python allows scientists to analyze vast amounts of data quickly and efficiently. It’s actually changing lives!

So basically, if you’re looking at diving into AI or machine learning projects in science nowadays, picking up Python isn’t just helpful—it’s essential! In this fast-paced world of technology and research advancements, staying versatile matters more than ever.

In summary:

- User-friendly syntax: Easy to learn and read.

- A plethora of libraries: Specific functionalities at your fingertips.

- Mighty machine learning support: With TensorFlow and Keras leading the pack.

- An active community: Tons of resources available whenever you need help.

- Cross-platform adaptability: Works where you want it!

So there you have it! Python isn’t just a tool; it’s become an integral part of modern scientific innovation—like having your Swiss Army knife for tackling all sorts of challenges.

Exploring Python’s Role in Advancing Machine Learning Applications in Science

Python has become a superstar in the world of machine learning and scientific innovation. Seriously, it’s like the cool kid in class that everyone wants to hang out with. Why? Well, its simplicity and versatility make it a favorite among scientists and programmers alike.

First off, Python’s easy syntax is a huge plus. You don’t need to be a coding wizard to write decent code. It’s straightforward, so you can focus more on solving scientific problems rather than wrestling with complicated programming languages. Imagine trying to explain complex concepts without getting lost in technical jargon—Python helps you do just that!

When it comes to machine learning, Python has an arsenal of powerful libraries at its disposal. Libraries like TensorFlow, Keras, and scikit-learn provide ready-to-use tools for building models quickly and efficiently. If you want to train a model to recognize patterns in data, these libraries have your back. Their high-level APIs keep the code short, making it easier to iterate and improve your projects.
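As a small illustration of how little code that can take, here’s a sketch using scikit-learn (picked here only because it’s the lightest of the three to install). It assumes the iris dataset that ships with the library and a plain logistic regression; neither choice comes from a real research project.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split

# Load a small bundled dataset: flower measurements and species labels.
X, y = load_iris(return_X_y=True)

# Hold out a quarter of the samples to check how well the model generalizes.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Fit the model on the training split only.
model = LogisticRegression(max_iter=1000)
model.fit(X_train, y_train)

# Score it on data it has never seen.
accuracy = accuracy_score(y_test, model.predict(X_test))
print(f"Held-out accuracy: {accuracy:.2f}")
```

Swapping in a different model is usually a one-line change, which is a big part of why iterating feels quick.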

Also, let’s talk about data handling. The world of science generates massive amounts of data every day, from climate change studies to genomic sequences. Python shines here too. With libraries like pandas for data manipulation and NumPy for numerical computations, poring over those datasets feels less overwhelming. You can easily clean, analyze, and visualize data, all key parts of the scientific process.
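Here’s a tiny sketch of the clean-then-analyze step. The station names, readings, and the plausible temperature range are all invented for the example.

```python
import numpy as np
import pandas as pd

# Made-up sensor readings with two gaps and one obviously bad value.
raw = pd.DataFrame({
    "station": ["A", "A", "B", "B", "B", "C"],
    "temperature_c": [14.2, np.nan, 15.1, 15.3, 99.0, 13.8],
    "co2_ppm": [410.0, 411.0, 412.5, np.nan, 413.0, 409.5],
})

# Clean: drop rows with missing readings, then remove implausible temperatures.
clean = raw.dropna()
clean = clean[clean["temperature_c"].between(-60, 60)]

# Analyze: average readings per station with pandas.
summary = clean.groupby("station")[["temperature_c", "co2_ppm"]].mean()

# NumPy: how far each remaining reading sits from the overall mean.
temps = clean["temperature_c"].to_numpy()
deviations = temps - temps.mean()

print(summary)
print(deviations.round(2))
```

Three of the six rows survive the cleaning here; on a real dataset you’d want to look at what gets dropped before trusting the summary.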

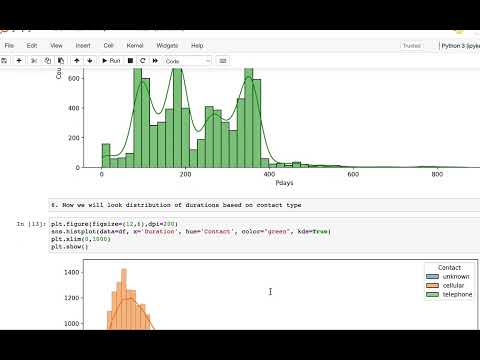

Visualization? Python nails that as well! Using libraries like Matplotlib and Seaborn, you can create stunning charts that help bring your findings to life. Picture this: you have tons of results from an experiment on climate patterns. Instead of throwing numbers at people, why not show them a clear graph? It makes the data much more digestible.
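Here’s a minimal Matplotlib sketch of that idea. The temperature anomalies are synthetic (a made-up upward trend plus noise), and the output file name is arbitrary.

```python
import matplotlib

matplotlib.use("Agg")  # render to a file without needing a display
import matplotlib.pyplot as plt
import numpy as np

rng = np.random.default_rng(1)
years = np.arange(1990, 2021)

# Synthetic anomaly series: a hypothetical warming trend plus random noise.
anomaly = 0.02 * (years - 1990) + rng.normal(0.0, 0.1, years.size)

# Fit a straight line so the trend is visible at a glance.
slope, intercept = np.polyfit(years, anomaly, 1)

fig, ax = plt.subplots(figsize=(7, 4))
ax.plot(years, anomaly, marker="o", label="Yearly anomaly (synthetic)")
ax.plot(years, slope * years + intercept, linestyle="--", label="Linear trend")
ax.set_xlabel("Year")
ax.set_ylabel("Temperature anomaly (°C)")
ax.set_title("Synthetic temperature anomalies")
ax.legend()
fig.tight_layout()
fig.savefig("temperature_anomalies.png", dpi=150)
```

Seaborn builds on the same figure and axes objects, so a plot like this can be restyled later without changing the data code.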

Plus, the community support is incredible! There are forums where you can ask questions or find answers—kind of like having a study group right there whenever you need help! Open-source contributions mean there’s always someone tweaking and improving tools that everyone can use.

On top of all of this, Python is ideal for collaboration across different sectors in science—like biology teaming up with computer science or physics with engineering. Its flexibility allows researchers from varied backgrounds to work together without having to learn a whole new language first.

You know what made me realize how transformative this is? A friend told me about their research on predicting protein structures using machine learning in Python. They built their model from scratch using TensorFlow and were able to run simulations way faster than before! That’s some serious innovation right there!

In short, when we think about advancing machine learning applications in science, Python stands out as the tool that helps bridge gaps between disciplines while making coding accessible for all levels of expertise—like giving everyone keys to unlock new discoveries together! So yeah, whether you’re analyzing vast datasets or building sophisticated algorithms, Python’s got something awesome for every scientist out there looking to innovate!

You know, Python has really become the go-to language for machine learning, especially in the realm of scientific innovation. It’s kind of amazing how much it has changed the game. Just think about it: a few years ago, if someone said you could train a computer to analyze data and even make predictions about things like disease outbreaks, people would’ve looked at them like they were nuts. But now, because of Python and its incredible libraries, that’s totally a reality.

I remember this one time when I was chatting with a friend who’s working on climate change research. She mentioned how they used Python to crunch through mountains of data about temperature fluctuations and carbon emissions. It felt like magic when she described how quickly they were able to spot trends that could influence policy decisions! It’s not just number crunching; Python makes it so much easier to visualize data too! You can see patterns in ways that are just more intuitive than staring at spreadsheets.

Now, let’s talk about those libraries—like NumPy and pandas—they’re like the superheroes in this whole story. They allow scientists to manipulate large datasets without breaking a sweat. Then there’s TensorFlow and PyTorch for building machine learning models. You can literally train your model on your laptop and test how well it predicts things without needing some insane supercomputer! That accessibility is revolutionary.

But here’s the thing: with great power comes great responsibility. Machine learning models can sometimes be black boxes, which means you might not really understand how they come up with their predictions. This is where ethical considerations come into play—a topic that’s super important right now. Scientists have to be careful about how they use these tools, especially in sensitive areas like healthcare or environmental protection.
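One common way to peek inside a black box is permutation importance: shuffle one input at a time and see how much the model’s score drops. Here’s a hedged sketch using scikit-learn’s `permutation_importance` on the breast cancer dataset that ships with the library. The model and settings are arbitrary choices for illustration, not a recipe for real clinical work.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

data = load_breast_cancer()
X_train, X_test, y_train, y_test = train_test_split(
    data.data, data.target, random_state=0, stratify=data.target
)

# Train a model whose inner workings are hard to read directly.
model = RandomForestClassifier(n_estimators=100, random_state=0)
model.fit(X_train, y_train)

# Shuffle each feature on held-out data and measure the drop in accuracy.
result = permutation_importance(
    model, X_test, y_test, n_repeats=5, random_state=0
)

# Rank features by how much the score depends on them.
ranked = sorted(
    zip(data.feature_names, result.importances_mean),
    key=lambda pair: pair[1],
    reverse=True,
)
for name, score in ranked[:5]:
    print(f"{name}: {score:.3f}")
```

A ranking like this doesn’t fully explain a prediction, but it does give you something concrete to question before the model is used for a sensitive decision.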

So yeah, Python is changing the way science gets done—fast! And while it’s exciting to see all these advancements being made possible by technology, we’ve got to remember that along with innovation comes the need for thoughtful consideration on how we use it all. It’s pretty wild when you think about where we’re headed!