So, picture this: you’re scrolling through your phone, checking out memes. You come across a hilarious video your friend sent you. It’s like 10 seconds of pure gold, but it’s 200 MB?! Seriously? You almost hesitate to hit play because it might take forever to load.

Now, imagine if you could shrink that video down to size without losing any of the funny bits. Enter data compression—like magic for digital stuff! And that’s where we get to this cool thing called autoencoders in PyTorch.

You don’t need to know all the nitty-gritty details yet, but just think about it. Autoencoders can help squeeze data into tiny packages! It’s like packing a suitcase for a trip—how much can you fit without leaving behind your favorite shoes?

In this post, we’ll explore some neat innovations in using autoencoders for data compression. There’s a lot going on behind the scenes, and trust me, it’s worth diving into. So hang on tight as we unpack this exciting world together!

Exploring Innovations in Data Compression Using PyTorch Autoencoders: Insights and GitHub Resources

Alright, let’s talk about data compression and how PyTorch autoencoders are shaking things up in that space. Imagine you have a giant suitcase, but you only want to take a few outfits on your trip. You need to pack efficiently, right? That’s kind of like what data compression does with information—the goal is to reduce the size of data while keeping its value intact.

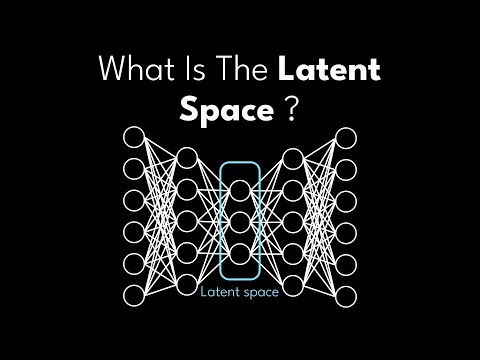

Now, autoencoders are a type of neural network that can help with this process. They work by first compressing your input (like squishing your clothes) into a smaller representation, and then decompressing it back (unpacking those clothes). The unpacked version is a close approximation rather than a perfect copy, so the idea is to keep the important stuff without carrying the weight of unnecessary items.

PyTorch is an amazing tool for building these autoencoders because it gives you flexibility and power. With just a few lines of code, you can set up an autoencoder model. Let’s break down a couple of key points here:

- Encoding and Decoding: The first part is the encoder, which shrinks the data down. It learns to focus on the most important features, ignoring the fluff. The second part is the decoder, which rebuilds the input from that smaller version.

- Training: You train the autoencoder using original data, so it learns how to recreate it from this compressed version. It’s like practicing how to pack better!

- Applications: Autoencoders aren’t just for images; they can compress audio files or even text! Think about how much easier it would be if we could send huge files faster because they’re just smaller versions of themselves.
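To make the encode-then-decode idea above a bit more concrete, here’s a minimal sketch of what a small autoencoder might look like in PyTorch. Treat it as an illustration, not a reference implementation: the class name `TinyAutoencoder`, the layer sizes, and the 28x28 input size are all assumptions picked for the example, and the random tensor is just a stand-in for real images.

```python
import torch
from torch import nn


class TinyAutoencoder(nn.Module):
    """Hypothetical fully connected autoencoder for flattened 28x28 inputs."""

    def __init__(self, input_dim: int = 784, latent_dim: int = 32):
        super().__init__()
        # Encoder: shrinks the input down to a small latent code.
        self.encoder = nn.Sequential(
            nn.Linear(input_dim, 128),
            nn.ReLU(),
            nn.Linear(128, latent_dim),
        )
        # Decoder: tries to rebuild the original input from that code.
        self.decoder = nn.Sequential(
            nn.Linear(latent_dim, 128),
            nn.ReLU(),
            nn.Linear(128, input_dim),
            nn.Sigmoid(),  # assumes inputs are scaled to the range [0, 1]
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return self.decoder(self.encoder(x))


torch.manual_seed(0)
model = TinyAutoencoder()
batch = torch.rand(16, 784)      # stand-in for 16 flattened images
code = model.encoder(batch)      # the compressed representation
reconstruction = model(batch)    # decoded back to the input shape
print(code.shape, reconstruction.shape)
```

Each input of 784 values gets squeezed into a code of 32 values here. The model is untrained at this point, so the reconstruction won’t resemble the input yet; that’s what training is for.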

This innovation opens up tons of possibilities in fields like machine learning and big data analysis. But hey, I know what some of you might be thinking: “This sounds complicated!” Well, I promise it’s not as scary as it seems!

If you’re looking to get your hands dirty with this tech, there are numerous resources on GitHub where folks have shared their cool projects involving PyTorch autoencoders. Some even provide pre-built models that can save you time! You’ll find examples showing everything from basic compression techniques to more advanced methods that tackle challenging datasets.

Anecdote Time: A friend of mine once tried sending a bunch of high-res photos from his vacation via email. Each photo was huge! After realizing he could barely send two before hitting limits, he switched gears and used an autoencoder he’d learned about online. Not only did he manage to send all his pictures at once but they looked great too! It was such a relief for him—and pretty amazing what tech can do!

The bottom line here? Innovations in data compression using PyTorch autoencoders are powerful tools for handling our ever-growing amounts of information effectively. Whether you’re diving into machine learning or just curious about tech advancements, this area is worth exploring!

Advancements in Data Compression Techniques Using PyTorch Autoencoders: A Comprehensive PDF Guide

Let’s chat about advancements in data compression techniques, especially those using PyTorch autoencoders. It’s a hot topic, you know?

First off, let’s break down what an **autoencoder** is. Basically, it’s a type of artificial neural network that’s trained to replicate the input data. You feed it data, and it learns how to compress that data into a smaller representation while still being able to reconstruct the original input from that compressed version. Kinda cool, right?

So, with **data compression**, you’re trying to save space while keeping as much info as possible. Think of it like folding your clothes neatly so you can fit more in your suitcase without losing anything important.

Now, when we talk about advancements in this field using **PyTorch**, we’re talking about tools and frameworks that make it easier to work with these autoencoders. PyTorch is pretty popular for deep learning because it’s user-friendly and super flexible.

Here are some key points about these advancements:

- Improved Architecture: Recent trends have shown enhancements in the architecture of autoencoders, like adding convolutional layers, which help capture spatial hierarchies in the data.

- Variational Autoencoders (VAEs): These take things a step further by introducing randomness into the encoding process. They learn a probability distribution over compressed codes instead of one fixed representation per input, which can lead to better generalization.

- Loss Functions: The choice of loss functions has evolved too. Instead of just relying on Mean Squared Error (MSE), researchers are experimenting with perceptual loss functions that better match how people actually judge image quality.

- Scalability: Thanks to improvements in hardware and better software optimization, training larger models on massive datasets has become more feasible. This means you can compress even bigger sets of data while giving up very little quality.

- Transfer Learning: Some new methods use pre-trained models as starting points. This can save time and improve efficiency since you’re building on something solid rather than starting from scratch.
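To give the VAE point above a bit more shape, here’s a rough sketch of the "randomness in the encoding" idea. It’s only one common way to write it, under my own assumptions: the names `TinyVAE` and `vae_loss`, the layer sizes, and the use of MSE for the reconstruction term are all choices made for this example.

```python
import torch
from torch import nn
import torch.nn.functional as F


class TinyVAE(nn.Module):
    """Hypothetical minimal VAE for flattened inputs scaled to [0, 1]."""

    def __init__(self, input_dim: int = 784, hidden_dim: int = 128, latent_dim: int = 16):
        super().__init__()
        self.hidden = nn.Linear(input_dim, hidden_dim)
        # Two heads: the mean and the log-variance of the latent distribution.
        self.to_mu = nn.Linear(hidden_dim, latent_dim)
        self.to_logvar = nn.Linear(hidden_dim, latent_dim)
        self.decoder = nn.Sequential(
            nn.Linear(latent_dim, hidden_dim),
            nn.ReLU(),
            nn.Linear(hidden_dim, input_dim),
            nn.Sigmoid(),
        )

    def forward(self, x: torch.Tensor):
        h = F.relu(self.hidden(x))
        mu, logvar = self.to_mu(h), self.to_logvar(h)
        # Reparameterization trick: sample z = mu + std * noise so that
        # gradients can still flow back through mu and logvar.
        std = torch.exp(0.5 * logvar)
        z = mu + std * torch.randn_like(std)
        return self.decoder(z), mu, logvar


def vae_loss(recon, x, mu, logvar):
    # Reconstruction term (MSE here) plus the KL divergence between the
    # learned Gaussian and a standard normal prior.
    recon_term = F.mse_loss(recon, x, reduction="sum")
    kl_term = -0.5 * torch.sum(1 + logvar - mu.pow(2) - logvar.exp())
    return recon_term + kl_term


torch.manual_seed(0)
vae = TinyVAE()
x = torch.rand(8, 784)               # stand-in batch of flattened images
recon, mu, logvar = vae(x)
loss = vae_loss(recon, x, mu, logvar)
print(recon.shape, loss.item())
```

Swapping the MSE term for a perceptual loss would follow the same pattern: only `recon_term` changes, while the KL term stays as it is.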

Let me share a quick story: I once attended this tech meetup where someone presented their project using an autoencoder for image compression in medical imaging. They were able to shrink down MRI scans significantly without losing vital details! The doctors loved it because it saved them storage space while still providing clear images for diagnosis.

As for practical applications, think of how we watch movies or listen to music online! Streaming services rely heavily on good compression techniques so you can enjoy high-quality content without waiting ages for stuff to load.

In conclusion (or should I say, wrapping this up), autoencoders built with PyTorch are changing the game through innovation after innovation. They’re making it easier and quicker to handle large datasets while keeping the essence intact! And hey, new discoveries keep popping up all the time!

Exploring Convolutional Autoencoders in PyTorch: Advancements in Deep Learning for Scientific Applications

Alright, let’s get into this whole world of convolutional autoencoders, especially in the context of PyTorch and how they’re shaking things up in deep learning. So, what’s the deal with these fancy-sounding terms? Basically, convolutional autoencoders are a type of neural network that helps us compress data. It’s kind of like when you squish your clothes into your suitcase to make them fit better. You know?

First off, what’s an autoencoder? An autoencoder is a special kind of neural network that learns to copy its input to the output but does it through a compressed representation in the middle layer. Imagine your brain encoding a funny memory and storing it neatly so you can recall it later! That middle part is called the “bottleneck,” and it’s where all the magic happens.

Now, when it comes to convolutional aspects, we’re talking about layers that process data using filters. These filters slide over input data (like images) to pick out features—kind of like how you can spot your friend in a crowd from their unique hair color or t-shirt! This means convolutional autoencoders are super effective at understanding images.
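If you want to see what "filters sliding over the input" looks like in practice, here’s a tiny sketch using a single `nn.Conv2d` layer. The sizes (8 filters, 3x3 kernels, stride 2, 28x28 grayscale inputs) are just assumptions for the example, and the random tensor stands in for real images.

```python
import torch
from torch import nn

# 8 filters, each 3x3, sliding over a 1-channel image two pixels at a time.
conv = nn.Conv2d(in_channels=1, out_channels=8, kernel_size=3, stride=2, padding=1)

images = torch.rand(4, 1, 28, 28)   # stand-in batch: 4 grayscale 28x28 images
features = conv(images)

# Stride 2 halves the height and width; each filter yields one feature map.
print(features.shape)  # torch.Size([4, 8, 14, 14])

# Each filter has 3*3 weights plus one bias, so the layer stays very small.
print(sum(p.numel() for p in conv.parameters()))  # 80
```

The same small set of filter weights is reused at every position in the image, which is a big part of why convolutional layers suit image data so well.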

And here’s where PyTorch comes onto the scene. It’s a framework that makes building and training these networks easier and more intuitive. You can think of it as your toolbox—super handy for building complicated stuff without getting lost in pointless details.

So why do scientists care about this? Well, data compression is huge! When dealing with massive datasets (like those from satellites or genetic sequencing), it gets really unwieldy. Scientists need to save space but also retain important information for analysis later on.

A couple points to keep in mind:

- Simplicity: Autoencoders reduce dimensionality effectively while keeping essential patterns intact.

- Feature Extraction: They help identify key features automatically without needing manual selection.

- Anomaly Detection: They’re useful for spotting oddities in data since they understand what “normal” looks like.
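The anomaly detection point above usually comes down to one idea: inputs the model reconstructs badly are probably unusual. Here’s a hedged sketch of that. The helper names `reconstruction_errors` and `flag_anomalies` and the threshold of 0.5 are made up for illustration, and `nn.Hardtanh` is only a toy stand-in for a trained autoencoder (it perfectly "reconstructs" values in [0, 1] and clips everything else).

```python
import torch
from torch import nn


@torch.no_grad()
def reconstruction_errors(model: nn.Module, batch: torch.Tensor) -> torch.Tensor:
    """Mean squared reconstruction error for each sample in the batch."""
    recon = model(batch)
    return ((recon - batch) ** 2).flatten(start_dim=1).mean(dim=1)


def flag_anomalies(errors: torch.Tensor, threshold: float) -> torch.Tensor:
    """Mark samples whose error exceeds a threshold chosen by the user."""
    return errors > threshold


# Toy stand-in for a trained autoencoder, NOT a real model.
stand_in = nn.Hardtanh(min_val=0.0, max_val=1.0)

torch.manual_seed(0)
normal = torch.rand(5, 10)          # "normal" samples, all inside [0, 1)
odd = torch.full((1, 10), 3.0)      # one sample far outside the normal range
errors = reconstruction_errors(stand_in, torch.cat([normal, odd]))
flags = flag_anomalies(errors, threshold=0.5)
print(flags.tolist())  # [False, False, False, False, False, True]
```

With a real autoencoder you’d pick the threshold from the errors seen on known-normal data, for example a high percentile, instead of hard-coding it.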

Let me tell you about an instance I stumbled upon recently. A team was using convolutional autoencoders for analyzing medical images. By compressing the images first, they managed to speed up diagnosis times significantly without losing the tiny but crucial details doctors need to see before making life-changing decisions.

Now, if you’re following along, you’re probably wondering how all this actually works under the hood with PyTorch. Well, here’s a quick rundown:

1. **Define Your Model:** Create an encoder that shrinks down your data and a decoder that reconstructs it back.

2. **Training:** Feed the model your data without any labels. The input itself serves as the target, so the model learns by trying to reproduce what it was given.

3. **Loss Function:** Minimize the difference between input and output using loss functions like Mean Squared Error.

4. **Backpropagation:** Adjust weights iteratively based on the reconstruction error.
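The four steps above can be sketched roughly like this. It’s a toy version under several assumptions of mine: the `ConvAutoencoder` class and its layer sizes are hypothetical, the Adam optimizer and learning rate are just reasonable defaults, and the dim random tensors stand in for a real image dataset.

```python
import torch
from torch import nn


class ConvAutoencoder(nn.Module):
    """Hypothetical convolutional autoencoder for 1x28x28 images."""

    def __init__(self):
        super().__init__()
        # Step 1a: the encoder shrinks 1x28x28 down to a 4x7x7 bottleneck.
        self.encoder = nn.Sequential(
            nn.Conv2d(1, 8, kernel_size=3, stride=2, padding=1),   # 28 -> 14
            nn.ReLU(),
            nn.Conv2d(8, 4, kernel_size=3, stride=2, padding=1),   # 14 -> 7
            nn.ReLU(),
        )
        # Step 1b: the decoder mirrors the encoder back up to 1x28x28.
        self.decoder = nn.Sequential(
            nn.ConvTranspose2d(4, 8, kernel_size=3, stride=2,
                               padding=1, output_padding=1),       # 7 -> 14
            nn.ReLU(),
            nn.ConvTranspose2d(8, 1, kernel_size=3, stride=2,
                               padding=1, output_padding=1),       # 14 -> 28
            nn.Sigmoid(),
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return self.decoder(self.encoder(x))


torch.manual_seed(0)
model = ConvAutoencoder()
optimizer = torch.optim.Adam(model.parameters(), lr=1e-2)
loss_fn = nn.MSELoss()                      # Step 3: the loss function

# Stand-in data: 32 dim random "images" with values in [0, 0.3).
images = torch.rand(32, 1, 28, 28) * 0.3

losses = []
for step in range(20):
    recon = model(images)
    loss = loss_fn(recon, images)           # Step 2: the input is the target
    optimizer.zero_grad()
    loss.backward()                         # Step 4: backpropagation
    optimizer.step()
    losses.append(loss.item())

print(f"first loss: {losses[0]:.4f}, last loss: {losses[-1]:.4f}")
```

In a real project you’d loop over batches from a `DataLoader` for several epochs and track the loss on held-out images too, but the shape of the loop stays the same.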

It sounds technical, sure! But once you get into writing some code snippets—nothing too heavy-duty—you’ll see just how rewarding it feels when everything clicks together!

In short, convolutional autoencoders in PyTorch are making strides not just for tech enthusiasts but also for real-world applications across various scientific fields! So next time someone chats about deep learning and neural networks, you’ll have this cool knowledge tucked away to share! Cool stuff, right?

Okay, so you know how photos on your phone take up a ton of space, right? Or how streaming a movie sometimes makes you wait while it buffers? That’s where data compression struts in like a superhero. It helps shrink files to make them easier to store or send around.

Now, let’s talk about this cool tool—PyTorch. So, PyTorch is like the Swiss Army knife for machine learning folks. It helps you build all sorts of fancy models pretty quickly. One of its tricks involves something called autoencoders, which honestly sounds more complicated than it is. Think of an autoencoder as a smart little robot that learns to squish down your data and then puff it back up again—like some kind of digital origami artist.

Imagine this scene: You’re at a family reunion, and your cousin pulls out her phone to show everyone pictures from last summer’s beach trip. There are hundreds of them! But if she had to upload all those high-resolution images without any compression? Ugh, we’d probably be there for hours just waiting for the Wi-Fi to catch up! With something like an autoencoder, those memories can be compressed—making them smaller so they zip through the internet way faster without losing much quality.

What’s super neat is that these autoencoders can learn on their own from tons of data. They can find patterns specific to your kind of images and compress them in ways that one-size-fits-all manual methods may struggle to match. It’s like they’re tuning into the music of your data and learning which notes really matter.

But don’t get too carried away with how awesome this all sounds—there are still some hurdles! Like if an autoencoder tries too hard to compress the data, it might lose some important details in the process. Ever seen blurred pictures when compressing too much? Yeah, nobody wants that!
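To put some rough numbers on that trade-off, here’s a small back-of-the-envelope sketch. The helper name `compression_ratio` and the bottleneck sizes are just examples I picked, and it only counts how many values are stored; real compression systems also quantize and entropy-code those values, which this ignores.

```python
def compression_ratio(input_values: int, latent_values: int) -> float:
    """How many times smaller the latent code is than the input, by value count."""
    return input_values / latent_values


input_values = 28 * 28  # one 28x28 grayscale image = 784 values

for latent_values in (196, 64, 16):
    ratio = compression_ratio(input_values, latent_values)
    print(f"bottleneck of {latent_values:>3} values -> {ratio:.2f}x smaller")

# bottleneck of 196 values -> 4.00x smaller
# bottleneck of  64 values -> 12.25x smaller
# bottleneck of  16 values -> 49.00x smaller
```

The smaller the bottleneck, the better the ratio, but the decoder has less to work with, and that’s where the blurry reconstructions tend to show up.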

Still, the potential is exciting! Imagine using these advancements not just for photos but also for things like improving medical imaging or speeding up AI-driven applications; you’d have better performance without bogging down systems with heavy files.

At the end of the day, innovations in data compression through tools like PyTorch aren’t merely about saving space on our devices; they open doors to new possibilities and smoother experiences in our tech-heavy lives. And let’s face it; in this fast-paced world we live in today? That’s something worth celebrating!