So, picture this: your buddy decides to bake a cake but throws in a little bit of everything from the pantry. You’ve got flour, chocolate chips, sprinkles, and maybe even some leftover pizza toppings. Weird combo, right? But somehow, it turns out amazing! That’s kind of what Random Forests are all about in machine learning.

You see, in the world of data science, we often deal with a ton of messy information. Just like that chaotic cake batter, things can get complex fast! That’s where Random Forests come into play. They’re like having a team of expert bakers (or data trees) working together to whip up something delicious out of all that chaos.

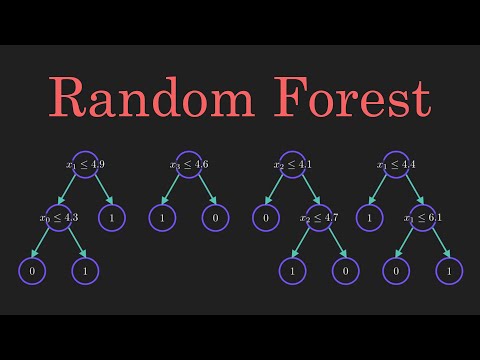

It’s wild how these algorithms take a bunch of different decision trees—think of them as mini-experts—and combine their opinions to make better predictions. I mean, who wouldn’t want that level of teamwork?

Let’s chat about how harnessing this quirky method can lead us to some seriously advanced solutions. Get ready for a fun ride through the forest—randomly speaking!

Harnessing Random Forests: Advanced Machine Learning Solutions in Scientific Research

When we talk about Random Forests, we’re diving into a super cool area of machine learning. It’s like having a whole team of decision trees working together to make sense of complex data. Imagine trying to figure out if you should pack an umbrella based on the weather forecast. One tree might focus on temperature, while another looks at humidity. All these trees come together to give you a better idea, right? That’s basically how Random Forests work.

The main idea here is that Random Forests help us deal with noisy, uncertain data. Each tree in this “forest” makes its own prediction, and then the forest combines them: for a classification problem the trees vote on the final answer, and for a regression problem their predictions are averaged. So if most trees say it’s going to rain, you can be more confident than if you had asked a single tree. This method helps reduce overfitting (where a model learns the training data too well and performs poorly on new data), which usually makes predictions more reliable than those of any one tree.
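To make the voting idea concrete, here is a minimal sketch in plain Python. The function names and the five "tree" predictions are made up for illustration; a real library would produce the per-tree predictions for you.

```python
from collections import Counter
from statistics import mean

def majority_vote(tree_predictions):
    """Return the most common label and the share of trees that chose it."""
    counts = Counter(tree_predictions)
    # On a tie, most_common keeps the label that was seen first.
    label, votes = counts.most_common(1)[0]
    return label, votes / len(tree_predictions)

def average_prediction(tree_predictions):
    """For regression, the forest's answer is the mean of the trees' numbers."""
    return mean(tree_predictions)

# Hypothetical predictions from five trees for tomorrow's weather.
label, share = majority_vote(["rain", "rain", "dry", "rain", "dry"])
print(label, share)  # rain 0.6
```

The vote share is a handy by-product: three out of five trees agreeing is a weaker signal than five out of five.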

Applications of Random Forests are everywhere in scientific research:

- Genomics: Scientists use Random Forests for classifying different genetic markers. For instance, if researchers want to identify cancer types from gene expression data, this approach can be really effective.

- Ecology: In studying biodiversity, researchers often utilize Random Forests to predict species distribution based on environmental factors. Picture mapping out where certain animals might thrive based on climate change trends.

- Meteorology: Forecasting weather patterns can be tricky, but these models can analyze historical weather data and improve predictions by taking multiple variables into account.

This ensemble learning technique can also cope with missing values, with a caveat. Some implementations handle gaps in the data natively, while others expect you to fill them in first, so check what your library does before assuming it will work without skipping a beat.
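If your library does not accept gaps in the data, one simple and common workaround is to fill each missing value with the median of its column before training. The sketch below is an illustration of that preprocessing step, not something Random Forests do on their own; the function name and the sample rows are invented, and missing entries are assumed to be stored as `None`.

```python
from statistics import median

def impute_median(rows):
    """Replace None entries with the median of the observed values in that column.

    Assumes every column has at least one observed value.
    """
    n_cols = len(rows[0])
    medians = []
    for j in range(n_cols):
        observed = [row[j] for row in rows if row[j] is not None]
        medians.append(median(observed))
    return [
        [medians[j] if value is None else value for j, value in enumerate(row)]
        for row in rows
    ]

# Hypothetical measurements with two gaps.
rows = [[1.0, 10.0], [None, 20.0], [3.0, None], [5.0, 40.0]]
filled = impute_median(rows)
print(filled)
```

Median filling is crude, but it is robust to outliers and easy to explain in a methods section.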

A cool thing about Random Forests is that they stay reasonably interpretable. Although they’re not as straightforward as simple models like linear regression, feature importance scores let you rank and visualize how much each variable contributes to the predictions. This means researchers aren’t just getting numbers; they’re also getting hints about which factors matter, though importance scores show association, not cause and effect.

I remember this one time during my college days when my buddy was struggling with his thesis on predicting plant growth based on different soil properties and sunlight exposure. He decided to try using Random Forests after I mentioned them—and boom! He not only nailed his prediction but also discovered some unexpected relationships between his variables that he hadn’t considered before.

The versatility of Random Forests makes them a go-to choice for many fields beyond science too—from finance predicting credit risk to healthcare analyzing patient outcomes. Plus, even if you’re not a coding pro or ML wizard, there are libraries out there that make implementing these models pretty straightforward.

So really, harnessing Random Forests can unlock new possibilities in research by allowing scientists to make informed decisions backed by solid statistical techniques while embracing the unpredictability of real-world data!

Advanced Machine Learning Solutions: Harnessing Random Forests for Enhanced Predictive Modeling in Scientific Research

Imagine you’re a scientist trying to understand a complex phenomenon, like predicting weather changes or identifying patterns in disease outbreaks. This is where machine learning can really shine, especially with something called Random Forests.

So, what exactly are Random Forests? Well, think of it as a group of decision trees working together. You know how sometimes you might ask a few friends for their opinion before making a decision? That’s kind of what happens here. Each tree makes its own guess based on data it gets, and then they all vote on the best answer! This helps reduce errors and create a more reliable prediction.

The beauty of Random Forests lies in their power to handle large sets of data with many variables—like species distribution in ecology or genetic markers in medicine. It’s super flexible, which means scientists can apply it to various fields.

- Flexibility: You can use Random Forests for both classification (like deciding if an email is spam) and regression (like predicting house prices).

- Robustness: Because they combine results from many trees, Random Forests are less likely to overfit the data—which means they make better predictions on new data rather than just memorizing the old stuff.

- Feature Importance: They can tell you which variables are most important, kind of like figuring out what ingredients make your grandma’s recipe taste so good!
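One way to measure feature importance is permutation importance: shuffle one column, and see how much the accuracy drops. Note that this is only one of several importance measures (many libraries report an impurity-based score by default). In the sketch below, `toy_model` is a hypothetical stand-in for a trained forest, and the data is invented.

```python
import random

def accuracy(model, rows, labels):
    """Fraction of rows the model labels correctly."""
    return sum(model(row) == y for row, y in zip(rows, labels)) / len(rows)

def permutation_importance(model, rows, labels, column, seed=0):
    """Drop in accuracy after shuffling one column; bigger means more important."""
    rng = random.Random(seed)
    shuffled_values = [row[column] for row in rows]
    rng.shuffle(shuffled_values)
    shuffled_rows = [
        row[:column] + [value] + row[column + 1:]
        for row, value in zip(rows, shuffled_values)
    ]
    return accuracy(model, rows, labels) - accuracy(model, shuffled_rows, labels)

# Hypothetical stand-in for a trained forest: it only ever looks at column 0.
def toy_model(row):
    return "high" if row[0] > 5 else "low"

rows = [[1, 7], [2, 3], [3, 9], [4, 1], [6, 8], [7, 2], [8, 6], [9, 4]]
labels = [toy_model(row) for row in rows]

for column in (0, 1):
    print(column, permutation_importance(toy_model, rows, labels, column))
```

Column 1 always scores zero here because the toy model never reads it, which is exactly the behavior you want from an importance measure.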

I remember when I was working on a project that involved analyzing environmental data. Each tree I trained saw a different random slice of the factors affecting wildlife populations. Once we aggregated their votes, surprise! We got insights into survival rates that were way sharper than expected.

You might be wondering about the downsides too. Sure, they’re great but can also be slow and memory-intensive if you have tons of trees or features. It’s like cooking up a huge feast; delicious but takes time and resources!

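The key to harnessing Random Forests effectively is tuning them right: selecting how many trees to use and how deep they should be. Too few trees give noisy, unstable predictions; adding more generally doesn’t hurt accuracy, but past a certain point the gains flatten out while training time and memory keep growing. Depth is the other lever: very deep trees can fit noise, while very shallow ones can miss real structure.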
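A back-of-the-envelope calculation shows why more trees help, and why the benefit levels off. The sketch below assumes something that is not true of real forests: that every tree is right with the same probability and that trees make their mistakes independently. Under that idealized assumption, the chance that the majority is right follows a binomial sum. The function name and the 0.6 figure are illustrative.

```python
from math import comb

def majority_correct_probability(n_trees, p_correct):
    """P(more than half of n independent voters are right), for odd n_trees."""
    needed = n_trees // 2 + 1
    return sum(
        comb(n_trees, k) * p_correct**k * (1 - p_correct) ** (n_trees - k)
        for k in range(needed, n_trees + 1)
    )

# Each idealized tree is right 60% of the time.
for n in (1, 3, 11, 101):
    print(n, round(majority_correct_probability(n, 0.6), 3))
```

Real trees are trained on overlapping data, so their errors are correlated and the improvement flattens sooner than this idealized curve suggests. That is why it is worth checking validation accuracy as you add trees instead of simply picking a huge number.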

In scientific research, using these models allows researchers not only to predict outcomes but also helps them find hidden patterns in data that may lead to new discoveries! Imagine discovering a new treatment for cancer because some little feature jumped out at you thanks to those clever forest algorithms.

Overall, embracing advanced machine learning solutions like Random Forests could propel scientific research into exciting new directions! The collaboration between humans and machines continues evolving—and honestly? It’s pretty thrilling!

Exploring the Random Forest Algorithm: A Comprehensive Guide to Its Applications in Scientific Research

So, let’s talk about the Random Forest algorithm. You might be wondering what it actually is. Well, imagine you’re trying to make a decision and you ask a bunch of friends for their opinions. Each friend gives a slightly different answer because they all see things from their own perspective. When you take the majority vote, you often end up with a pretty solid choice, right? That’s basically how Random Forest works—only it’s a little more complex and way cooler!

At its core, Random Forest is a machine learning algorithm that falls under the umbrella of “ensemble methods,” which means it combines multiple models to improve accuracy. More specifically, it builds a whole bunch of decision trees (like those friend opinions we just talked about) during training and combines their outputs into an overall prediction. Each tree is trained on a random sample of the rows, typically drawn with replacement (a bootstrap sample), and considers only a random subset of the features at each split. This randomness makes the trees disagree in useful ways, which helps avoid overfitting, where a model fits the quirks of the training data so closely that it does worse on new data.
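The two sources of randomness can be sketched in a few lines. This is a simplified illustration with invented function names, not any particular library's implementation: one helper draws rows with replacement for a tree, the other picks a handful of features to consider at a split. Using about the square root of the number of features is a common choice for classification, so 3 out of 9 is used here.

```python
import random

def bootstrap_indices(n_rows, rng):
    """Sample n_rows row indices with replacement (a bootstrap sample)."""
    return [rng.randrange(n_rows) for _ in range(n_rows)]

def random_feature_subset(n_features, k, rng):
    """Pick k distinct feature indices to consider at one split."""
    return sorted(rng.sample(range(n_features), k))

rng = random.Random(42)  # fixed seed so the run is repeatable
rows_for_tree = bootstrap_indices(10, rng)
features_for_split = random_feature_subset(9, 3, rng)

print(rows_for_tree)       # some rows repeat, others are left out
print(features_for_split)  # 3 of the 9 feature indices
```

Because sampling is with replacement, each tree typically misses some rows entirely. Those left-out rows can be used as a built-in test set for that tree, often called the out-of-bag sample.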

But what does this mean for scientific research? Oh man, the applications are pretty wide-ranging! Here are some key points on how researchers harness this algorithm:

- Biology: In genomics, Random Forest can help identify gene interactions or classify different tumor types based on genetic data. This could lead to better-targeted therapies for patients.

- Agriculture: Farmers can use it to predict crop yields based on historical data and environmental factors like weather conditions or soil quality.

- Environmental Science: It’s used in classifying land cover types from satellite images or predicting species distributions in response to climate change.

- Health Sciences: Researchers analyze patient data to predict disease outbreaks or patient outcomes based on various indicators.

These examples really show how diverse its applications can be!

The magic actually happens during the model-building process. The trees are grown by making splits in the data based on different features—sort of like asking each tree what they think matters most. Each split aims to reduce uncertainty (or impurity) in predictions.
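Here is a small sketch of what "reducing impurity" means, using Gini impurity, one common measure for classification trees (entropy is another). The labels are invented; the point is that a split that separates the classes cleanly scores lower than one that leaves them mixed.

```python
from collections import Counter

def gini(labels):
    """Gini impurity: 0.0 for a pure group, higher for a more mixed one."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def split_impurity(left, right):
    """Size-weighted average impurity of the two groups a split produces."""
    n = len(left) + len(right)
    return len(left) / n * gini(left) + len(right) / n * gini(right)

parent = ["a", "a", "b", "b"]
print(gini(parent))                              # 0.5
print(split_impurity(["a", "a"], ["b", "b"]))    # 0.0, a perfect split
print(split_impurity(["a", "b"], ["a", "b"]))    # 0.5, no improvement
```

When growing a tree, the algorithm tries many candidate splits and keeps the one with the lowest weighted impurity.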

You know that feeling when you try something new and it doesn’t go as planned? Well, that’s where **cross-validation** saves the day! This technique estimates how well the model performs on unseen data: the dataset is split into several parts (folds), the model is trained on all but one fold and tested on the held-out fold, and the process repeats until every fold has served as the test set. Averaging those scores helps ensure a good result isn’t just a lucky split.
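The splitting step of k-fold cross-validation can be sketched like this. The function name is made up, and the sketch skips shuffling for clarity; in practice you would usually shuffle the rows first (or use your library's built-in splitter).

```python
def k_fold_indices(n_rows, k):
    """Yield (train_indices, test_indices) pairs for k roughly equal folds."""
    # Spread any remainder over the first few folds.
    fold_sizes = [n_rows // k + (1 if i < n_rows % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_rows))
        yield train, test
        start += size

folds = list(k_fold_indices(10, 3))
for train, test in folds:
    print("train:", train, "test:", test)
```

Every row lands in exactly one test fold, so each data point gets one turn at being "unseen."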

Now, let’s touch on some advantages of using Random Forest:

- No need for feature scaling: Unlike many algorithms, you don’t need to worry about normalizing your features before using it!

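- Missing data: It can cope with missing values reasonably well. Some implementations handle them natively, while others need the gaps filled in first, but either way a few data points lost during experiments don’t have to sink the analysis.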

- Easily interpretable: While it’s more complex than linear models, you can still understand which features matter most by looking at feature importance scores.

Of course, nothing’s perfect! There are downsides too. The model can get quite heavy, which may not be ideal for real-time predictions or very large datasets—it may take some time to churn out results!

In wrapping things up, using Random Forest isn’t just about throwing numbers around—it’s really about making sense of complex relationships in your data through collaboration among many decision trees. Each tree has its own little bit of wisdom!

So next time you’re knee-deep in data analysis for your research project or curious about machine learning’s role in science today, remember this handy tool—it might offer exactly what you need!

So, let’s chat about this interesting thing called Random Forests. You know, sometimes when you think of forests, you might picture a peaceful place filled with trees and chirping birds, right? Well, in the realm of machine learning, “forests” refers to something a bit more complex—like a collection of decision trees working together.

Now, decision trees are pretty cool on their own. Imagine asking a bunch of yes-or-no questions to decide what kind of pizza you wanna order. “Is it vegetarian?” “Does it have pepperoni?” Each question leads you down a path until, boom, you’ve figured out your ideal slice. The catch is that one tree might not always get it right because it can easily overfit, latching onto noise in the data instead of the real pattern.

That’s where the magic of Random Forests comes in! Basically, they take multiple decision trees and combine their strengths. It’s like asking a group of friends their opinion instead of just one person; many heads are better than one, right? By aggregating the predictions from many trees, Random Forests help reduce errors and improve accuracy.

Here’s an anecdote for ya: I once tried to figure out what movie to watch on a Friday night. I asked my friends for recommendations separately but got totally different suggestions! One said action, another wanted romance—it was chaos! But when we all sat down together and discussed our tastes, we ended up picking something everyone enjoyed. That collaboration and diversity in input really made a difference—kinda like how Random Forests work!

Using these techniques can supercharge machine learning solutions across various fields. From predicting stock prices to diagnosing diseases based on symptoms—it’s all about harnessing that collective wisdom hidden within those data trees.

But there’s also this deeper layer here that gets me thinking: randomness isn’t just chaos; it can lead to richness and variety in solutions. It’s like life—you can plan all you want but sometimes those unexpected turns lead to the most exciting adventures.

In short, Random Forests remind us that collaboration can conquer complexity and that embracing randomness might just yield brilliant insights we never saw coming! So next time you’re facing a tough decision or diving into data analysis, remember: sometimes it’s best to gather diverse voices—or trees—and let them work together for the best outcome!