You know what’s funny? In college, I once took a stats class that had us all convinced that you needed a crystal ball to understand data. Seriously! We were knee-deep in numbers, and I couldn’t help but wonder: how do researchers know if their results actually mean something?

Well, a big player in that game is post hoc power analysis. Sounds fancy, huh? But basically, it’s like looking back at your party pictures to see if anyone actually had fun. You’ve got all this data, but did your study have enough oomph behind it to detect what you were looking for?

So let’s break it down together. I promise you won’t need any calculators or wizardry here. Just hang tight and let’s figure out why this concept is key in scientific research methods. It’ll be way more fun than you think!

Understanding Post Hoc Power Analysis in Scientific Research: Applications and Importance

Alright, let’s break down this whole idea of post hoc power analysis in scientific research. It sounds pretty intense, but it’s actually super interesting once you get the hang of it! Basically, this type of analysis happens after a study is done. You know when you’ve finished an exam and then you’re like, “Did I study enough?” That’s kind of what post hoc power analysis is all about.

In research, power analysis is about understanding how likely your study is to find a significant effect if there really is one out there. It focuses on something called statistical power, which is the probability that your test will correctly reject the null hypothesis when the null hypothesis is actually false. In plain terms: if a real effect exists, power is your chance of catching it.
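To make the idea of power a bit more concrete, here is a minimal Python sketch. It assumes a simple comparison of two equally sized groups and uses a normal approximation instead of the exact t-test calculation, so treat the numbers as ballpark figures. The function name `approx_power` and the effect size of 0.5 are illustrative choices, not values from any particular study.

```python
from math import sqrt
from statistics import NormalDist


def approx_power(effect_size, n_per_group, alpha=0.05):
    """Approximate power of a two-sided comparison of two group means.

    Assumption: normal (z-test) approximation with equal group sizes.
    An exact t-test calculation gives slightly lower power for small samples.
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)             # two-sided critical value
    shift = effect_size * sqrt(n_per_group / 2)   # expected test statistic
    # Chance that the statistic lands beyond either critical value
    return z.cdf(shift - z_crit) + z.cdf(-shift - z_crit)


# Hypothetical standardized effect size of 0.5 at several sample sizes
for n in (10, 20, 50, 100):
    print(f"n per group = {n:3d}  ->  power ~ {approx_power(0.5, n):.2f}")
```

The same effect becomes much easier to detect as the groups get bigger, which is the whole reason sample size matters so much.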

Now let’s get into some details.

- Why do we do it? Well, often researchers want to make sure their sample size was big enough to detect an effect. If the sample size was too small, there might have been a real effect hiding away that we just didn’t see because we didn’t have enough data!

- The timing matters. Post hoc power analysis comes after you collect your data and run your tests. This makes it different from pre-study power analysis, which happens before any data collection begins—much like packing for a trip!

- No crystal ball here! It can’t change what has already happened in the study; it only tells you how much chance the study had of detecting an effect of a given size, not whether your findings are true.

You might be thinking: “But isn’t this just second-guessing my work?” Well, sort of! Let me tell you an anecdote that might help clear things up. A friend of mine did a really cool study on how plants react to different types of light. After crunching the numbers, they found no significant difference between two groups. A post hoc power analysis later showed that their sample size was so small that even if something interesting was happening, they probably wouldn’t have detected it. So instead of shrugging off their results as a total flop, they learned they had to gather more data next time—kind of a bummer but crucial for better science!

Now onto some more key points:

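- Limitations exist! Post hoc power analyses can sometimes give false reassurance. When power is calculated from the effect size you happened to observe, it is essentially a restatement of your p-value: a non-significant result will always come with low “observed” power, so the number adds nothing new. And just because you didn’t find significance doesn’t mean there wasn’t anything going on.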

- It’s not always necessary. Some researchers argue it can be misleading or not useful at all if used blindly without context.
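One way to see why some researchers are skeptical is to compute "observed" power straight from a p-value. The sketch below assumes a two-sided z-test, which keeps the math simple; the function name `observed_power_from_p` is made up for this illustration. Under that assumption, observed power depends on nothing but the p-value and the significance level.

```python
from statistics import NormalDist


def observed_power_from_p(p_value, alpha=0.05):
    """Observed power implied by a two-sided z-test p-value.

    Assumption: the observed effect is treated as if it were the true effect,
    which is what an "observed power" calculation does.
    """
    z = NormalDist()
    z_obs = z.inv_cdf(1 - p_value / 2)   # size of the test statistic behind p
    z_crit = z.inv_cdf(1 - alpha / 2)    # two-sided critical value
    return z.cdf(z_obs - z_crit) + z.cdf(-z_obs - z_crit)


# Illustrative p-values only
for p in (0.01, 0.05, 0.20, 0.50):
    print(f"p = {p:.2f}  ->  observed power ~ {observed_power_from_p(p):.2f}")
```

A result sitting right at p = 0.05 comes out at roughly 50% observed power, and anything less significant comes out lower. So a low observed power after a non-significant test is not an extra piece of evidence; it is the same information in different clothes.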

The bottom line? Post hoc power analysis can be a handy tool in scientific research—it shines some light on what went down during your study and helps shape future efforts. Just remember it’s part of the bigger picture in understanding research findings! So next time you’re pondering over those pesky stats after finishing up with an experiment, keep post hoc power analysis in mind; it’s like having an extra pair of glasses to see things clearly.

Understanding the Differences: Is Post Hoc Analysis Equivalent to ANOVA in Scientific Research?

So, let’s talk about something that can feel a bit like a tangled web—post hoc analysis and its relationship with ANOVA. You know, when we’re digging into scientific research, these terms pop up quite often. But are they the same thing? Not quite! Let’s break it down.

First off, ANOVA stands for Analysis of Variance. It’s a statistical method used to compare means across multiple groups. Imagine you’re testing how different fertilizers affect plant growth across three groups of plants. ANOVA helps you see if there’s a significant difference in growth rates between the groups. Pretty cool, right?
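If you’re curious what ANOVA actually computes, here is a small sketch of the F statistic for a one-way design, written with only the Python standard library. The growth numbers are invented for the fertilizer example, and the helper name `one_way_f` is just for illustration. Turning F into a p-value needs the F distribution, which a statistics package would normally handle for you.

```python
from statistics import mean


def one_way_f(*groups):
    """Return (F statistic, df between, df within) for a one-way ANOVA."""
    all_values = [x for g in groups for x in g]
    grand_mean = mean(all_values)
    k = len(groups)
    n_total = len(all_values)

    # Variation of the group means around the grand mean
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of individual values around their own group mean
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)

    df_between = k - 1
    df_within = n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Made-up growth measurements for three fertilizer groups
fertilizer_a = [1, 2, 3]
fertilizer_b = [2, 3, 4]
fertilizer_c = [5, 6, 7]

f_stat, df_b, df_w = one_way_f(fertilizer_a, fertilizer_b, fertilizer_c)
print(f"F({df_b}, {df_w}) = {f_stat:.2f}")
```

A large F means the group averages differ a lot compared with the scatter inside each group.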

Now, post hoc analysis comes into play *after* you’ve run your ANOVA. Say your ANOVA results showed that at least one group was different from the others. To figure out *which* groups are different, you’d use post hoc tests. It’s like following up after a big revelation—confirming the details so everything makes sense!
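As a rough illustration of the follow-up step, the sketch below applies a Bonferroni correction to a set of pairwise p-values. Bonferroni is only one of several post hoc approaches (Tukey’s HSD is another common one) and is used here because it is easy to show in a few lines. The p-values and the function name `bonferroni` are hypothetical.

```python
def bonferroni(p_values, alpha=0.05):
    """Adjust pairwise p-values for multiple comparisons.

    Returns {pair: (adjusted p-value, significant after correction?)}.
    """
    m = len(p_values)  # number of comparisons being made
    results = {}
    for pair, p in p_values.items():
        adjusted = min(1.0, p * m)  # multiply by the number of tests, cap at 1
        results[pair] = (adjusted, adjusted < alpha)
    return results


# Hypothetical raw p-values from three pairwise comparisons
raw = {"A vs B": 0.04, "A vs C": 0.001, "B vs C": 0.5}

for pair, (adj_p, significant) in bonferroni(raw).items():
    print(f"{pair}: adjusted p = {adj_p:.3f}, significant = {significant}")
```

Notice that a comparison can look significant on its own and stop being significant once you account for how many comparisons you ran.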

Here’s where things can get tricky though: post hoc power analysis. This is a bit of a different ballgame. Power analysis itself is about understanding whether your sample size is big enough to detect an effect if there is one. If your study doesn’t have enough power, you might end up with misleading results—like saying there’s no difference when there actually is.

For example, imagine you’re studying two teaching methods and find no significant difference using ANOVA. A post hoc power analysis would help you judge whether the study could realistically have detected a difference of the size you care about, so you don’t mistake “not enough data” for “no difference.”

Let’s sum this up with some key points:

- ANOVA compares means across multiple groups.

- Post hoc tests identify which specific groups differ after an ANOVA shows significance.

- Post hoc power analysis assesses whether your study had enough participants to detect an effect of a given size.

So no, they’re not interchangeable! They serve different purposes in research but work together nicely to give us clearer insights into our data.

In closing, it’s like putting together pieces of a puzzle—you want to make sure each piece fits perfectly for the whole picture to emerge clearly. There’s real artistry in statistics when we understand how these concepts connect and support one another in research!

Mastering Post Hoc Power Analysis: A Step-by-Step Guide for Scientists

Let’s chat about post hoc power analysis. Sounds fancy, huh? But it’s really just a way to figure out how likely your study is to pick up on effects that are truly there. It’s like checking if you brought enough snacks for your friends at a party—if you didn’t, they might go hungry, and no one wants that!

First off, let’s break down what this means. When researchers run an experiment, they often want to know if their findings are significant or just due to random chance. Power analysis tackles the flip side of that question: if a real effect exists, how likely is the study to detect it? Done before a study, it helps determine the minimum sample size needed to detect an effect of a given size. Now, the term post hoc means “after the fact.” So, post hoc power analysis is done after the study has been completed.
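For comparison, here is what the "before the study" version looks like as a quick sketch: estimating the sample size needed per group. It assumes two equal groups, a two-sided test, and a normal approximation, so an exact t-test calculation would ask for one or two more participants. The name `n_per_group` and the default target of 80% power are illustrative assumptions.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size, power=0.80, alpha=0.05):
    """Approximate sample size per group for a two-sided, two-group comparison.

    Assumption: normal approximation; effect_size is a standardized
    difference in means (Cohen's d).
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)   # critical value for the test
    z_power = z.inv_cdf(power)           # quantile for the desired power
    return ceil(2 * ((z_alpha + z_power) / effect_size) ** 2)


# Conventional small, medium, and large standardized effects
for d in (0.2, 0.5, 0.8):
    print(f"d = {d}: about {n_per_group(d)} participants per group")
```

Smaller effects need far more participants, which is why guessing the effect size honestly up front matters so much.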

You might be thinking, “Why do it after?” Well, here’s the thing: sometimes researchers finish their studies and realize they didn’t gather enough data or that their results weren’t as convincing as they hoped. By doing a post hoc power analysis, they can find out how likely it was for their experiment to detect an actual effect given the sample size and effect size.

Let’s say you conducted a study on how many hours of sleep impact test scores among students. You had 50 students but found no significant difference in scores between those who slept 8 hours and those who slept 6 hours. A post hoc power analysis would help you figure out if having only 50 students was enough to detect any real differences in performance—if there were any at all.

Here’s how you can approach this:

- Gather Your Data: Use the results of your study—like means, standard deviations, and sample sizes.

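- Determine Effect Size: This is a standardized measure of how big the difference is, such as Cohen’s d (the difference between group means divided by the pooled standard deviation). You can calculate it from your data, or plug in the smallest effect you would consider meaningful.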

- Select Significance Level: Typically set at 0.05 (which means you’re okay with a 5% chance of saying there’s an effect when there isn’t).

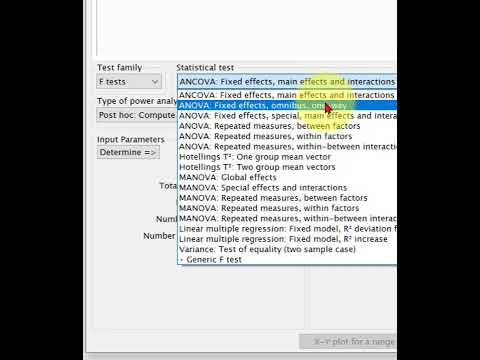

- Run Power Analysis Software: Tools such as G*Power, or the power functions available in R and in Python’s statsmodels package, let you plug in your numbers and get results.

- Interpret Results: Finally, look at what the analysis shows about your power level. Values run from 0 to 1, higher values mean better chances of detecting true effects, and 0.80 is a common target.
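The steps above can be sketched in a few lines of Python. Everything here is illustrative: the means, standard deviations, and the even 25/25 split of the 50 students are made-up numbers for the sleep example, the function names are hypothetical, and the power calculation uses a normal approximation, not the exact t-test formula that dedicated software would use.

```python
from math import sqrt
from statistics import NormalDist


def cohens_d(mean1, sd1, n1, mean2, sd2, n2):
    """Standardized mean difference using the pooled standard deviation."""
    pooled_var = ((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / (n1 + n2 - 2)
    return (mean1 - mean2) / sqrt(pooled_var)


def approx_power(d, n1, n2, alpha=0.05):
    """Approximate two-sided power for two groups (normal approximation)."""
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    shift = abs(d) * sqrt(n1 * n2 / (n1 + n2))
    return z.cdf(shift - z_crit) + z.cdf(-shift - z_crit)


# Step 1: summary statistics (hypothetical values for the sleep study)
mean_8h, sd_8h, n_8h = 78.0, 10.0, 25
mean_6h, sd_6h, n_6h = 75.0, 10.0, 25

# Step 2: effect size
d = cohens_d(mean_8h, sd_8h, n_8h, mean_6h, sd_6h, n_6h)

# Step 3: significance level
alpha = 0.05

# Steps 4 and 5: compute and read off the power
power = approx_power(d, n_8h, n_6h, alpha)
print(f"Cohen's d = {d:.2f}, approximate power = {power:.2f}")
```

With these made-up numbers the power comes out well below the usual 0.80 target, so a study this size would usually miss an effect that small. Keep in mind that plugging in the observed effect size makes the answer track the p-value, so it is often more informative to rerun the calculation with the smallest effect you would actually care about.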

It’s important to keep in mind that while this process is helpful for understanding past studies, it’s not always perfect or definitive. Sometimes researchers might conclude everything looks good statistically but forget that context matters too!

Some folks might argue that focusing too much on post hoc analyses could lead us astray since they’re based on existing data rather than planning from scratch. And that’s fair! But knowing what went wrong (or right) and adjusting future studies accordingly is super valuable.

So next time you finish analyzing some data and feel unsure about those non-significant results, don’t sweat it too much! Just consider rolling up those sleeves for a little post hoc power analysis action—it might just shed some light on what’s really going on under the surface of those numbers!

Alright, so post hoc power analysis can feel like one of those fancy terms that make you scratch your head. But if you dig a bit deeper, it’s kinda interesting and important in scientific research.

Imagine you’re at a party with a bunch of friends, and everyone starts arguing about who can throw a ball the farthest. You decide to see who’s right by tossing the ball yourself. But later on, someone mentions that maybe the test wasn’t fair because not everyone got to try it out. That’s kind of like what happens in research when we look back at our experiments after they’ve been done—like, did we really have enough “oomph” in our study to find something significant?

Here’s the deal: post hoc power analysis is about figuring out if your study had enough statistical power to detect an effect if there was one. It sounds super technical, but think of it this way: if you don’t have enough people (or samples) in your study, you might miss finding a cool result just because you didn’t have enough data. So, researchers sometimes look back after their results come in and do this analysis to see if they might’ve caught something special—if only they’d had more participants or better methods.

But wait! Here’s where it can get tricky. Some folks argue that doing this kind of analysis after the fact doesn’t really mean much because you’re trying to interpret results based on what already happened—not exactly ideal. It’s like trying to change the rules of your own game after you’ve seen how it turned out.

When I think about all this, I remember being in group projects at school where some members didn’t pull their weight, and we ended up with less than stellar results. Later on, we’d sit around and talk about how things could’ve been different if only we had made some changes at the start. It brings home the point that while reflecting on past analyses is crucial for understanding our work better, it also highlights how important it is to plan carefully beforehand.

In science as much as in life, hindsight often has 20/20 vision—or whatever they say! And while post hoc power analyses offer some insights into what might have gone wrong or right when interpreting data after everything’s said and done, it reminds us just how important planning and hypothesis testing are before jumping into experiments. So next time you read a research paper discussing power analysis, just know there’s more than meets the eye behind those numbers!