Imagine this: you’re at a dinner party, casually chatting with a friend. Suddenly, they mention neural networks, and you just nod along, pretending to understand. But inside, you’re like, “What even is that?”

Neural networks can seem super complicated. They’re like the brainy cousins of regular computer programs. But the thing is, they can do some seriously cool stuff.

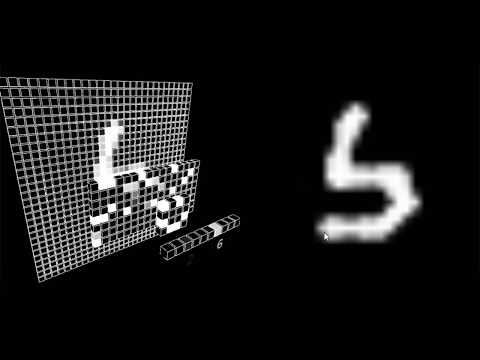

So, how do we make these digital brains more relatable? Well, visualizing them is a great start! Think of it as turning a tricky recipe into a colorful infographic — way less daunting!

In this little journey, we’ll explore how these visuals can make understanding neural networks more engaging and fun. Ready to take a peek behind the curtain of AI? Let’s go!

Evaluating the Superiority of Graph Neural Networks Over Convolutional Neural Networks in Scientific Applications

So, let’s break this down. You’ve probably heard of Convolutional Neural Networks (CNNs), right? They’re super popular in the realm of image processing. But there’s a new kid on the block: Graph Neural Networks (GNNs). And honestly, they’re shaking things up, especially in scientific applications. Seriously, it’s like comparing apples and oranges sometimes.

CNNs are designed to handle grid-like data structures, like pixels in an image. They analyze data through layers of convolutions—basically sliding filters over images to detect patterns. So, if you’ve got a bunch of photos, CNNs are great at identifying cats vs dogs or even distinguishing between different types of medical scans.
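To make the "sliding filter" idea concrete, here's a minimal sketch in plain Python. The tiny image, the hand-picked edge filter, and the `convolve2d` helper are all made up for illustration; a real CNN learns its filter values during training and stacks many of these layers.

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the response map."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Multiply the filter with the patch underneath it and sum the products.
            total = sum(
                image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh)
                for dj in range(kw)
            )
            row.append(total)
        output.append(row)
    return output


# A tiny 4x4 "image": dark on the left (0), bright on the right (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hand-picked filter (an assumption for this demo): it responds where
# brightness rises from left to right.
vertical_edge = [
    [-1, 1],
    [-1, 1],
]

feature_map = convolve2d(image, vertical_edge)
print(feature_map)  # prints [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The middle column lights up because that's exactly where the dark half meets the bright half. Everywhere else the filter sees a flat patch and stays quiet.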

But here’s where it gets interesting: GNNs work on graphs, which is all about relationships and connections—like social networks or molecular structures. Instead of just looking at individual pieces of data in isolation, GNNs consider how these pieces interact with one another. You see what I mean? It’s a whole different way of thinking.
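Here's a rough sketch of that "pieces talking to their neighbors" idea, often called message passing. It's a simplified illustration, not a full GNN: real models also apply learned weights at each step, and the node names and the `message_passing_step` helper are invented for this example.

```python
def message_passing_step(features, edges):
    """One round of mean-aggregation message passing on an undirected graph.

    features: dict mapping node name -> a single number
    edges: list of (node, node) pairs
    """
    neighbors = {node: [] for node in features}
    for a, b in edges:
        neighbors[a].append(b)
        neighbors[b].append(a)

    updated = {}
    for node, value in features.items():
        # Each node averages its own value with the values of its direct neighbors.
        group = [value] + [features[n] for n in neighbors[node]]
        updated[node] = sum(group) / len(group)
    return updated


# A four-node chain: A - B - C - D. Only A starts with a signal.
features = {"A": 1.0, "B": 0.0, "C": 0.0, "D": 0.0}
edges = [("A", "B"), ("B", "C"), ("C", "D")]

after_one = message_passing_step(features, edges)
after_two = message_passing_step(after_one, edges)

print(after_one)
print(after_two)
```

After one round, only A's direct neighbor B has picked up some of the signal. After two rounds it has reached C as well, while D is still waiting. That's the core intuition: each extra round lets information travel one more hop along the connections.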

Now imagine you’re studying proteins in biology. A protein’s function doesn’t just depend on its structure; it also depends on how it interacts with other proteins and molecules. GNNs let researchers model those interactions directly as nodes and connections, which is awkward to do with a CNN built for neat grids.

- Scalability: On sparse graphs, the work a GNN layer does grows with the number of connections rather than with every possible pair of nodes. Really huge graphs usually still need tricks like sampling or batching, though.

- Diverse Applications: From predicting chemical reactions to understanding brain connectivity patterns—GNNs really shine.

- Sparsity Handling: In many real-world problems, each item connects to only a handful of others. GNNs work directly on the links that exist instead of padding everything out into a dense grid.

Let me give you a personal story here. A friend of mine works in drug discovery—a field that relies heavily on understanding complex biological networks. He was telling me how much easier his life became when they started implementing GNNs instead of sticking with traditional methods. The insights they gained from analyzing those intricate connections between molecules were game-changing!

However, it’s not all sunshine and rainbows for GNNs either. The tooling is younger, and training on big graphs can be computationally heavy. CNNs tend to be more straightforward to implement because their inputs come in regular grids.

In summary, while CNNs have their place in the spotlight for visual tasks like image recognition or classification, GNNs step in where relationships matter most—especially within scientific contexts where understanding interconnections is crucial. It’s pretty rad how technology keeps evolving to tackle new challenges!

Exploring ChatGPT: Understanding Its Neural Network Architecture in Scientific Context

So, let’s talk about ChatGPT. It’s basically a super smart computer program that can read and generate text that sounds like a human wrote it. What’s behind this? Well, it’s all about neural networks, specifically a large language model built on an architecture called the transformer. Think of neural networks as digital brains that learn by looking at tons of information.

Neural networks are loosely inspired by how our own brains work, using layers of interconnected nodes, kind of like neurons. Each of these nodes takes in some data, processes it, and then sends its output to the next layer. Here’s what you need to know:

- Layers: There are different layers in these networks. The first layer takes the input (like the words you type, converted into numbers), while hidden layers process this info to help the network pick up on context and meaning.

- Weights: Each connection (or synapse) between nodes has a weight that determines how strong the signal is. During training, the network adjusts these weights based on feedback about its errors, kinda like learning from mistakes.

- Activation functions: These are mathematical functions that decide how strongly a node should “fire” for a given input. They introduce non-linearity into the model, enabling it to learn complex patterns.
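To see layers, weights, and activation functions working together, here's a tiny forward pass written in plain Python. It's only a sketch: the weight values are made up rather than learned, the `dense_layer` helper is hypothetical, and this is a generic little network, nowhere near ChatGPT's actual architecture.

```python
import math


def relu(x):
    """Activation: pass positive signals through, silence negative ones."""
    return max(0.0, x)


def sigmoid(x):
    """Activation: squash any number into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-x))


def dense_layer(inputs, weights, biases, activation):
    """Compute one layer: each node takes a weighted sum of inputs, then activates."""
    outputs = []
    for node_weights, bias in zip(weights, biases):
        weighted_sum = sum(w * x for w, x in zip(node_weights, inputs)) + bias
        outputs.append(activation(weighted_sum))
    return outputs


# Made-up numbers for illustration; training would normally set the weights.
inputs = [1.0, 2.0]
hidden_weights = [[0.5, -1.0], [1.0, 1.0]]  # two hidden nodes, two inputs each
hidden_biases = [0.0, -1.0]
output_weights = [[1.0, -1.0]]  # one output node fed by two hidden nodes
output_biases = [0.0]

hidden = dense_layer(inputs, hidden_weights, hidden_biases, relu)
output = dense_layer(hidden, output_weights, output_biases, sigmoid)

print(hidden)  # prints [0.0, 2.0]
print(output)
```

Notice that the first hidden node ends up at 0.0: its weighted sum was negative, so ReLU kept it from firing. That on/off behavior is the non-linearity at work.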

Now, it’s important to visualize this stuff when you’re trying to engage with scientific ideas. Let me share something personal here: I remember my first time seeing a diagram of a neural network—it blew my mind! I could finally see how information travels through intertwined paths, making complex decisions step by step.

In terms of practical use, visualizing neural networks helps researchers and enthusiasts understand what happens inside these models without needing crazy technical jargon. If you can picture how data flows through different layers and how each component interacts with one another, suddenly things make sense.
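If you’d like to draw that picture yourself, the first step is just deciding where each node sits and which nodes connect. Here’s a small sketch that works that out for a classic layered diagram. The `layered_layout` helper and the layer sizes are assumptions for the demo; you could hand its output to whatever plotting tool you like.

```python
def layered_layout(layer_sizes):
    """Return node positions and edges for a fully connected layered diagram.

    layer_sizes: number of nodes in each layer, from input to output.
    """
    positions = {}
    for layer_index, size in enumerate(layer_sizes):
        for node_index in range(size):
            # x is the layer number; y centers each layer around zero.
            y = node_index - (size - 1) / 2
            positions[(layer_index, node_index)] = (float(layer_index), y)

    # Connect every node to every node in the following layer.
    edges = [
        ((layer, a), (layer + 1, b))
        for layer in range(len(layer_sizes) - 1)
        for a in range(layer_sizes[layer])
        for b in range(layer_sizes[layer + 1])
    ]
    return positions, edges


positions, edges = layered_layout([3, 4, 2])
print(len(positions), "nodes,", len(edges), "connections")  # prints 9 nodes, 20 connections
```

Even this toy network with nine nodes already has twenty connections, which hints at why diagrams of real models get simplified so heavily.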

For instance, imagine you’re sorting fruits on an assembly line. The first station might recognize colors (like red or yellow), while the next identifies shapes (round apples versus long bananas). This is pretty much how a neural network operates: early layers pick up simple features, and later layers combine them until the network can come up with an answer.

So when we look at ChatGPT’s architecture through this lens, we see how it’s designed for tasks like writing or answering questions: it passes your text through many stacked layers, then builds its response one small chunk of text (a token) at a time.

Understanding these concepts creates a bridge between complicated science and everyday experiences. You start realizing that all those nerdy technical details translate into something tangible—a chat with a virtual assistant or even deeper interactions with your tech devices!

Wrapping things up here: exploring ChatGPT’s structure offers insights into how technology can imitate human-sounding language while remaining distinctively artificial in its functioning. Seeing neural networks visually puts some realness behind what could otherwise feel abstract and distant! Isn’t that pretty neat?

Exploring AlphaFold: Understanding Its Relationship with Graph Neural Networks in Scientific Research

Alright, let’s chat about AlphaFold, shall we? This is a pretty exciting area in the world of scientific research! AlphaFold is like this super-smart program developed by DeepMind that can predict protein structures really well. Proteins are these tiny machines in our bodies, doing all sorts of important jobs, and knowing their shapes is key to understanding how they work.

So what’s the deal with Graph Neural Networks? Well, basically, they are a type of artificial intelligence that’s really good at working with data that has a structure, like proteins. Imagine you have a bunch of points (like atoms) connected by lines (bonds). That’s where graph neural networks shine. They can analyze these connections and learn from them. AlphaFold leans on closely related ideas: it treats a protein as a web of relationships between pairs of amino acids and uses attention-based layers to reason over that web as it predicts how the protein folds into its functional shape.

Let’s break that down a bit more. When you think of proteins, picture them as intricate origami figures made from long strings of paper (the amino acids). The way you fold them determines what they can do. Graph-style thinking helps AlphaFold make sense of these folds by considering how each amino acid interacts with the others, sort of like figuring out which corner to bend first to make your origami swan look right!

Here’s how it connects:

- Data Representation: Instead of treating protein data as flat sequences, graph neural networks view it as a connected network! So each amino acid has relationships with others based on their chemical properties.

- Learning Relationships: These networks are great at learning the complex relationships between different parts of the protein structure. They recognize patterns in how certain amino acids interact based on their locations.

- Accurate Predictions: For many proteins, this results in predicted 3D shapes that come close to what lab experiments measure, which is crucial for designing drugs or understanding diseases.
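To picture the "protein as a connected network" idea from the list above, here’s a small sketch that turns 3D positions into a graph. Everything in it is an assumption for illustration: the coordinates are invented, the 5.0 cutoff is arbitrary, and `contact_edges` is a hypothetical helper. It also runs in the opposite direction from AlphaFold, which predicts positions rather than starting from them, so treat it purely as a demo of the data representation.

```python
import math

# Hypothetical residues with made-up coordinates (in angstroms).
# The chain runs MET -> ALA -> GLY -> LYS -> SER and bends back on itself.
residues = [
    ("MET", (0.0, 0.0, 0.0)),
    ("ALA", (3.8, 0.0, 0.0)),
    ("GLY", (7.6, 0.0, 0.0)),
    ("LYS", (7.6, 3.8, 0.0)),
    ("SER", (3.8, 3.8, 0.0)),
]


def contact_edges(residues, cutoff=5.0):
    """Link every pair of residues whose positions lie within `cutoff` of each other."""
    edges = []
    for i in range(len(residues)):
        for j in range(i + 1, len(residues)):
            distance = math.dist(residues[i][1], residues[j][1])
            if distance <= cutoff:
                edges.append((i, j))
    return edges


edges = contact_edges(residues)
for i, j in edges:
    print(residues[i][0], "-", residues[j][0])
```

Most of the links just follow the chain, but ALA and SER also end up connected even though they sit three steps apart in the sequence. That kind of fold-back contact is exactly what a flat sequence hides and a graph makes visible.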

I remember when I first learned about this technology; it felt like magic! Just imagine: scientists have spent decades working out protein structures with painstaking lab methods like X-ray crystallography, and now we have this tool that can often produce a solid prediction in minutes or hours instead of months or years.

But how does AlphaFold actually work? Well, it’s trained on tons and tons of known protein structures from public databases like the Protein Data Bank. It learns from these existing examples and uses that knowledge to predict new ones. It’s kind of like learning to play an instrument: you practice with real songs until you can create your own music.

In practical terms, scientists can use AlphaFold not only to understand diseases better but also to discover new treatments faster! With the world facing health challenges like pandemics or drug-resistant bacteria, having tools like this could be game-changing.

So yeah, AlphaFold and graph neural networks are reshaping the landscape of biological research. It’s all interconnected—you’ve got data representation, learning capabilities, and accurate predictions coming together beautifully! How thrilling is it to watch science evolve so rapidly?

You know, there’s this incredible thing happening at the intersection of science and art when we talk about visualizing neural networks. It’s like taking something super complex and kind of abstract, and turning it into a beautiful image or animation that we can all relate to. I mean, just think about it! Neural networks are these crazy intricate systems loosely inspired by how our brains work. But when you visualize them, they transform from a jumble of numbers and code into something tangible.

I still remember the first time I saw a 3D model of a neural network. I was at this small science fair, just wandering around, feeling a bit lost among the robotics displays and chemistry experiments. Then I stumbled upon this booth with a screen showing colorful patterns pulsing as if they were alive! The presenter explained how each color represented different neurons firing in response to input data. My jaw dropped. Suddenly, I wasn’t just listening; I could see the complexity in action! It was like opening a door to a new universe.

Visualizations like that can spark curiosity in people who might not even know what neural networks are or why they matter. And that’s pretty awesome! By putting these concepts into images or animations, we make science more accessible—like you’re not just reading some dry textbook but instead engaging with something vibrant and dynamic.

But then again, it’s about balance, right? You can’t go too far down the rabbit hole where the visuals become more distracting than informative. It’s important to keep those visuals grounded in reality, showing how these networks actually learn and adapt rather than merely looking cool for the sake of it.

So, basically, visualizing neural networks is about bridging gaps—connecting hardcore science with everyday people through art and imagery. It invites us all on this journey to understand not only how our minds might work but how machines can begin to mimic that in their own funky ways. Pretty mind-blowing if you ask me!