So, imagine this: you’re at a party, and someone drops the bomb about how many ways to analyze data. Sounds kinda boring, right? But hang on! You know that moment when you’re trying to decide if that new restaurant really is better than your favorite spot? That’s where something like the Wald Test kicks in.

It’s like having a secret weapon for figuring out if your hunch is actually true or just wishful thinking. Seriously! You might be surprised how often we use these kinds of tests without even realizing it.

Let’s get into what the Wald Test is all about. Don’t worry; I promise to keep it casual and maybe toss in some fun stuff along the way. It’ll be a lot more interesting than you’d think!

Examining the Wald Test: Assumptions of Normality in Statistical Analysis

The Wald test is a statistical tool that’s used to assess the significance of individual predictors in a model. You might encounter it especially in regression analysis. It helps you figure out if those predictors are making a meaningful contribution to your model. But let’s talk more about what goes on under the hood, especially regarding normality.

First off, when using the Wald test, there are some important assumptions that need to be met for the results to be valid. One of the key ones is that the estimator being tested (say, a regression coefficient) is approximately normally distributed around the true value. Notice that this is about the estimate, not the raw data. So, why does this matter? Well, if the estimate’s sampling distribution is far from normal, the test’s P-values can be off and lead you to some not-so-great conclusions.

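Now, here’s the thing: if you’re working with large sample sizes, thanks to the Central Limit Theorem, you’re usually in the clear. This theorem says that as you increase your sample size, the distribution of the sample mean approaches normality, pretty much no matter what shape your original data had (as long as its variance is finite). Similar large-sample results cover the maximum likelihood estimates that Wald tests are usually run on.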
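To see the Central Limit Theorem do its thing, here is a small simulation sketch. Everything in it is made up for illustration: the data come from a right-skewed exponential distribution, and the helper names `skewness` and `sample_mean_skewness` are just labels picked for this example. It measures how lopsided the distribution of sample means is for a tiny sample versus a bigger one.

```python
import random
import statistics

def skewness(values):
    """Sample skewness: the average cubed z-score (close to 0 for a symmetric distribution)."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return sum(((v - mean) / sd) ** 3 for v in values) / len(values)

def sample_mean_skewness(sample_size, replicates=2000, seed=0):
    """Skewness of the means of many samples drawn from a right-skewed exponential distribution."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    means = []
    for _ in range(replicates):
        sample = [rng.expovariate(1.0) for _ in range(sample_size)]
        means.append(statistics.fmean(sample))
    return skewness(means)

skew_small = sample_mean_skewness(sample_size=5)
skew_large = sample_mean_skewness(sample_size=200)
print(f"skewness of sample means, n=5:   {skew_small:.2f}")
print(f"skewness of sample means, n=200: {skew_large:.2f}")
```

The means from samples of 200 should come out much closer to symmetric than the means from samples of 5, which is the whole reason large samples make the normal approximation safer.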

But small sample sizes? That’s where trouble can brew. Imagine you’re analyzing a dataset from a small group of friends who only play one sport together. If their scores are super uneven (like one person always winning), an estimate built from so few lopsided observations may not be anywhere near normally distributed. If you run a Wald test on it, it’s likely gonna give you misleading results.

Let’s get technical for just a second: when we calculate the test statistic in the Wald test (think about it like determining how far off an estimate is from what we expect, measured in standard errors), we compare it against a reference distribution that comes from that normality assumption. If our estimate is at least approximately normally distributed, as it should be in large samples, we’re golden! But those assumptions being violated? Yeah, that’s where things start getting dicey.
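In symbols, the usual single-parameter version looks roughly like this. The notation is an assumption added here, not something from the text above: the estimate is written as theta-hat, the value claimed by the null hypothesis as theta-zero, and SE-hat is the estimated standard error.

```latex
z = \frac{\hat{\theta} - \theta_0}{\widehat{\mathrm{SE}}(\hat{\theta})},
\qquad
W = z^2
```

When the null hypothesis is true and the sample is large, z is approximately standard normal and W is approximately chi-squared with one degree of freedom, so both forms lead to the same two-sided P-value.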

To keep up our trusty analysis, researchers sometimes do transformations on their data before running tests like these. For example:

- Log transformation: Let’s say you’re dealing with income data that’s heavily right-skewed—transforming those figures could help make them more normal.

- Square root transformation: This could work wonders for count data; maybe if you’re counting how many fruits each friend brings to a picnic.

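These transformations can pull skewed data closer to symmetric, which helps the normal approximation kick in with fewer observations and can yield more reliable results. Just remember that your model’s coefficients then describe the transformed scale, not the original one.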
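Here is a quick sketch of what a log transformation does to skewed numbers. The "incomes" are simulated log-normal draws invented for this example (not real data), and `skewness` is a small helper written just for the demo.

```python
import math
import random
import statistics

def skewness(values):
    """Sample skewness: the average cubed z-score (close to 0 for a symmetric distribution)."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return sum(((v - mean) / sd) ** 3 for v in values) / len(values)

rng = random.Random(42)  # fixed seed so the run is repeatable

# Hypothetical right-skewed "incomes": log-normal draws, invented for illustration.
incomes = [rng.lognormvariate(10.5, 0.8) for _ in range(5000)]
log_incomes = [math.log(x) for x in incomes]

raw_skew = skewness(incomes)
log_skew = skewness(log_incomes)
print(f"skewness before log transform: {raw_skew:.2f}")
print(f"skewness after log transform:  {log_skew:.2f}")
```

The raw values have a long right tail, while the logged values should sit close to zero skewness. Real income data will rarely behave this neatly, so treat this as a picture of the idea rather than a guarantee.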

Another thing worth mentioning is checking for outliers or extreme values that could mess with normality. Imagine hunting for buried treasure (you know you’ve done it). If one friend shows up with boatloads of gold coins while everyone else has just a few bucks—yeah, that friend’s score might throw off everything between you guys!

In essence, always take time to validate those assumptions before running statistical tests like the Wald test. It’s all about ensuring your findings stand strong and don’t topple over because of some funky distributions or rogue outliers.

So next time you’re using this test or advising someone else about it: remember to keep an eye on those important assumptions! They’ll help ensure what comes out at the end actually makes sense in real life—and that’s what we’re really after!

Understanding the P-Value of the Wald Test Statistic in Statistical Analysis

So, let’s chat about the P-value in the context of the Wald Test statistic. But bear with me; it’s not as dry as it sounds!

First off, the Wald Test is like a little detective in statistics. It helps you figure out if your model’s parameters are significantly different from zero. This basically tells you if the things you’re measuring actually matter.

Now, onto the P-value. Think of it as a gauge for one question: “If there were really no effect, how surprising would my result be?” The lower the P-value, the stronger your evidence against that pesky null hypothesis (which is essentially saying there’s no effect or no difference).

Here’s where the magic happens: when you run a Wald Test, you calculate this statistic based on how much your estimated parameter differs from what you’d expect under that null hypothesis. Then, you get this number—your Wald statistic—that tells you how far away from zero your parameter is.

Next up, here’s how we tie that into our P-value. You take your Wald statistic and look at it in relation to a statistical distribution (like a bell curve—remember those?). The way we see it is: if your parameter is really just random noise, then most of your Wald statistics will be pretty close to zero. But if it’s significant, boom! Your P-value will show something small—like less than 0.05 or 0.01.
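If you want to see that lookup in action, here is a minimal sketch using only Python’s standard library. It assumes a single parameter and a standard normal reference distribution. The function name `wald_p_value` and the numbers plugged in are hypothetical, picked only for illustration.

```python
import math

def wald_p_value(estimate, std_error, null_value=0.0):
    """Two-sided P-value for a single-parameter Wald test (standard normal reference)."""
    z = (estimate - null_value) / std_error
    # For a standard normal Z, P(|Z| >= |z|) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical estimate and standard error, chosen only for illustration.
z, p = wald_p_value(estimate=0.50, std_error=0.20)
print(f"z = {z:.2f}, p = {p:.4f}")  # z = 2.50, p = 0.0124
```

An estimate sitting 2.5 standard errors from zero lands well out in the tail of the bell curve, which is why the P-value comes out small.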

But don’t forget: it’s not just about the number. A small P-value doesn’t mean your findings are practically important; they’re just statistically significant. You’ve got to consider effect sizes and real-world implications too! Sometimes researchers hear “statistically significant” and think they found gold when really they’ve dug up some gravel.

Let’s say you’re testing a new medicine versus a placebo using this test. If your Wald test gives you a P-value of 0.03, then congrats! At the usual 0.05 cutoff, that counts as statistically significant evidence that the medicine does something different from the placebo. But always ask: does that difference matter enough in people’s lives?
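To make the medicine example concrete, here is a sketch of a Wald test for the difference between two recovery rates. The trial counts are entirely invented, and `two_proportion_wald` is a hypothetical helper that uses the unpooled standard error, which is one common choice among several.

```python
import math

def two_proportion_wald(successes_a, n_a, successes_b, n_b):
    """Wald test of H0: both groups share the same success rate (unpooled standard error)."""
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    diff = p_a - p_b  # the effect size, on the probability scale
    se = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z = diff / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided, standard normal reference
    return diff, se, z, p_value

# Invented counts: 60 of 100 recover on the medicine, 45 of 100 on the placebo.
diff, se, z, p_value = two_proportion_wald(60, 100, 45, 100)
print(f"difference in recovery rates: {diff:.2f}")
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

With these made-up counts the P-value lands a little above 0.03, and the effect size is a 15 percentage point difference in recovery. The P-value says the gap would be surprising under the null; whether 15 points matters is a question for doctors and patients, not for the test.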

Finally, remember that P-values can be misinterpreted. They don’t measure the probability that either hypothesis is true; they tell us how likely it would be to see a result at least as extreme as ours if the null hypothesis were true.

In summary:

- The Wald Test helps determine significance based on model parameters.

- A low P-value suggests strong evidence against the null hypothesis.

- P-values need context; they don’t guarantee practical importance.

So next time someone throws around those numbers like confetti at a parade, you’ll know exactly what they’re talking about!

Understanding the Wald Test: Assessing Statistical Significance and Research Outcomes in Scientific Studies

So, let’s chat about the Wald Test. You might be wondering, like, what’s the deal with this statistical test? Well, the Wald Test is all about checking if certain parameters in a statistical model are significant or not. It helps researchers figure out if their findings are really telling them something important, or if they’re just a fluke.

Basically, the Wald Test looks at the ratio of an estimated parameter to its standard error. You follow me? If that ratio is big enough, it suggests that your parameter is statistically significant. It’s kind of like a litmus test for research outcomes!

But there’s more to it! Here are some key points to consider when using the Wald Test:

- Parameter Estimation: First things first, you need an estimate for your parameters. This often comes from regression analysis.

- Standard Errors: The test also requires standard errors of those estimates. Standard errors tell us how much we should expect our estimates to vary.

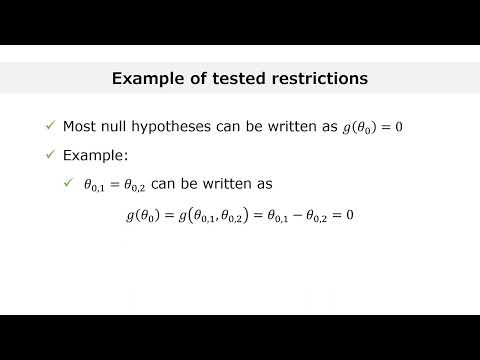

- Testing Hypothesis: The main goal here is to test a null hypothesis—usually that a parameter equals zero (no effect)—against an alternative hypothesis.

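- Distribution: In large samples and under the null hypothesis, this ratio approximately follows a standard normal distribution, and its square follows a chi-squared distribution with one degree of freedom. Either one tells us how likely it is to observe such a value under the null hypothesis.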
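One way to see how the ratio and the chi-squared distribution connect: squaring the ratio and looking it up in a chi-squared distribution with one degree of freedom gives the same P-value as looking up the ratio itself in a standard normal. The sketch below checks that numerically with the standard library only. The function names are made up for this example, and the chi-squared tail is approximated by brute-force integration of its density rather than a library call.

```python
import math

def normal_two_sided_p(z):
    """P(|Z| >= |z|) for a standard normal Z."""
    return math.erfc(abs(z) / math.sqrt(2))

def chi2_one_df_upper_tail(w, width=80.0, steps=80_000):
    """P(X >= w) for X ~ chi-squared(1), via midpoint-rule integration of the density.

    Assumes w > 0; the tail beyond w + width is negligible and ignored.
    """
    h = width / steps
    total = 0.0
    for i in range(steps):
        x = w + (i + 0.5) * h
        total += math.exp(-x / 2) / math.sqrt(2 * math.pi * x)
    return total * h

z = 1.96                      # the ratio: estimate divided by its standard error
p_from_z = normal_two_sided_p(z)
p_from_w = chi2_one_df_upper_tail(z ** 2)
print(f"P-value from z (normal):        {p_from_z:.5f}")
print(f"P-value from z^2 (chi-squared): {p_from_w:.5f}")
```

Both routes should agree to several decimal places, so software that reports a chi-squared Wald statistic and software that reports a z value are telling you the same story for a single parameter.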

Here’s an example: imagine you’re studying whether a new teaching method affects student performance. After gathering data and crunching some numbers, you find your estimated effect size (like, how much it improves scores) and its standard error. You plug these into the Wald Test formula and bam! You get a statistic that tells you if this teaching method really works or not.

Another cool thing about the Wald Test? It can be applied in various contexts—like logistic regression and other generalized linear models. But don’t get too comfortable! Sometimes it doesn’t perform well with smaller samples or in complex models; that’s when researchers have to be careful about relying solely on it.
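To get a feel for the small-sample problem, here is a sketch that works out exactly how often a Wald test rejects a null hypothesis that is actually true. It uses the simplest case, a single proportion, rather than a full logistic regression, and the true rate of 0.1 and the sample sizes are arbitrary choices for illustration. At a nominal 5% level the answer should be about 0.05.

```python
import math

def binomial_pmf(k, n, p):
    """P(K = k) for K ~ Binomial(n, p), computed on the log scale for stability."""
    log_pmf = (math.lgamma(n + 1) - math.lgamma(k + 1) - math.lgamma(n - k + 1)
               + k * math.log(p) + (n - k) * math.log(1 - p))
    return math.exp(log_pmf)

def wald_rejection_rate(n, p0, critical=1.959964):
    """Exact chance that a two-sided Wald test of H0: p = p0 rejects when H0 is true."""
    rate = 0.0
    for k in range(n + 1):
        p_hat = k / n
        se = math.sqrt(p_hat * (1 - p_hat) / n)  # standard error from the estimate itself
        if se == 0.0:
            # With 0 or n successes the estimated SE is zero, the statistic is
            # infinite, and the test rejects (as long as p_hat differs from p0).
            rejects = p_hat != p0
        else:
            rejects = abs(p_hat - p0) / se > critical
        if rejects:
            rate += binomial_pmf(k, n, p0)
    return rate

rate_small = wald_rejection_rate(n=10, p0=0.1)
rate_large = wald_rejection_rate(n=1000, p0=0.1)
print(f"false-alarm rate with n=10:   {rate_small:.3f}")
print(f"false-alarm rate with n=1000: {rate_large:.3f}")
```

With only 10 observations, getting zero successes is fairly likely, and that alone makes the test cry wolf far more often than 5% of the time. With 1000 observations the rate settles close to the advertised level. This is one reason people often reach for likelihood ratio or score tests when samples are small.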

Also, keep in mind that while significant results from the Wald Test might look impressive—like finding out your new method boosts scores—they don’t always mean practical importance. Just because something’s statistically significant doesn’t mean it’s gonna change lives or classrooms overnight.

In short, understanding the Wald Test can give you great insight into whether your research outcomes stand on solid ground. So next time you’re sifting through data or considering some new teaching trick, remember this nifty little test lurking behind those equations!

Alright, let’s talk about the Wald test. It might sound like some fancy math thing, but stick with me. I remember sitting in a statistics class when our professor threw out terms like “Wald test” and “statistical significance,” and we all kind of exchanged confused glances, you know? It felt like we were suddenly in a different universe where numbers ruled everything.

So, what’s the deal with the Wald test? Well, at its core, it’s a method to help researchers figure out if their results mean anything or if they’re just random noise. Imagine you’re flipping a coin. If it lands heads up like eight times in a row—cool! But hold on; we gotta ask: is that normal? Or is this coin magic?

That’s where this test comes into play. It checks whether the effect you’re seeing in your data is strong enough to be considered significant. Basically, it helps answer that burning question: “Is this real?” By looking at the estimate of an effect and how uncertain that estimate is (its standard error), it tells you whether a result like yours would be surprising if chance alone were at work.

But here’s the kicker—while this test can be really handy, it’s not foolproof. For example, sometimes researchers can get overly excited about their results. They might declare something significant when it’s just a fluke because other factors weren’t properly controlled for. It’s kind of like saying you’re an amazing cook just because one dinner turned out great; maybe it was just luck!

There was this one time I was working on a project analyzing survey data for my friends’ startup idea. We used the Wald test to assess how strongly different factors influenced customer interest. But honestly? Seeing those numbers at first made me feel both anxious and thrilled—like trying to read tea leaves! It reminded me that behind every statistic are human stories and decisions.

So when you’re diving into research and using tests like these, remember they’re tools—not definitive answers on their own. They give you guidance but can’t replace good judgment and context about what you’re studying. Statistical significance is important, but don’t lose sight of the bigger picture—you know? An interesting finding could lead down paths you never expected!

In summary, even as these statistical methods evolve and get more complex over time (I mean who even knew stats could be so intricate?), they still remind us that science is as much about asking questions as it is about crunching numbers!