Have you ever tried to figure out why some people rave about pineapple on pizza while others gag at the thought? It’s like, what gives? Well, there’s actually some science behind that sort of stuff.

So, picture this: you’ve collected a bunch of data that tells you something interesting about your favorite hobby, maybe running or baking. But now you’re stuck. You’ve got so many numbers and charts swimming around in your head, and honestly, translating that into something meaningful feels like climbing a mountain in flip-flops.

That’s where ANOVA comes in—kind of like the superhero of statistical analysis! It helps you make sense of all those numbers by comparing different groups in your data. It’s as if someone said, “Hey! Let’s see if there’s really a difference between folks who love pineapple pizza and those who think it’s an abomination!”

Stick with me for a bit; we’re going to break down these ANOVA techniques together. You’ll be impressing your friends with crazy stats in no time!

Understanding ANOVA Techniques: A Comprehensive Guide for Scientific Research

Alright, let’s chat about ANOVA. It’s a fancy acronym for Analysis of Variance. This technique is like a super-powered magnifying glass that helps scientists understand if there are any significant differences between groups in their data. So, if you’re looking into how different fertilizers affect plant growth, ANOVA can help you figure out if one fertilizer really works better than another or if the differences you see are just random chance.

To break it down a bit more, here’s how it all works. You start off with a question—like, does watering plants with salt water make them grow taller? You’d set up an experiment with some plants getting salt water and others getting plain water. The idea is that after some time, you measure their heights.

Here’s where the magic of ANOVA comes in:

- One-way ANOVA: This is the simplest form. It compares means across one factor. So, if you had three groups of plants—each getting a different type of fertilizer—you’d see if there’s a significant difference in height among those groups.

- Two-way ANOVA: This one’s cooler because it looks at two factors at once! Imagine you’ve got fertilizer and light conditions as your two factors. You can see how each affects growth and whether their interaction makes a difference.

- Assumptions: Before diving into analysis, there are some ground rules. Your observations should be independent of each other, the values within each group should be roughly normally distributed (like a bell curve), and the groups should have similar variances. If not, results might be skewed.

- Post-hoc tests: If your ANOVA finds significant differences, you’re still not done! You need to figure out which specific groups differ from each other through post-hoc tests—think of this as the follow-up questioning to narrow down the culprits.
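To make the one-way case a bit more concrete, here is a minimal sketch of the F statistic it is built on, using only the Python standard library. The function name `one_way_anova_f` and the plant heights are made up for illustration; in practice a package like R or SPSS would compute this (and the p-value) for you.

```python
from statistics import mean

def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of groups of numbers."""
    all_values = [x for g in groups for x in g]
    grand_mean = mean(all_values)
    k = len(groups)       # number of groups
    n = len(all_values)   # total number of observations

    # Between-group sum of squares: how far each group mean sits from the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Within-group sum of squares: spread of observations around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)

    df_between = k - 1
    df_within = n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within

# Hypothetical plant heights (cm) for three fertilizers; not real data.
heights = {
    "A": [20.1, 21.3, 19.8, 20.7, 21.0],
    "B": [22.4, 23.1, 22.8, 23.5, 22.0],
    "C": [20.5, 20.9, 21.2, 19.9, 20.4],
}
f_stat, df_b, df_w = one_way_anova_f(list(heights.values()))
print(f"F({df_b}, {df_w}) = {f_stat:.2f}")
```

A large F means the group averages are spread out much more than you would expect from the scatter inside each group.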

Now, let me share something relatable here. A friend of mine was working on their thesis involving plant growth too. They wanted to test several types of soil mixes but got overwhelmed by all the potential combinations and outcomes. Using ANOVA helped them simplify things tremendously! It turned their messy data into clear insights and made discussions with their supervisor way easier.

And hey, don’t forget about software! Most researchers use statistical programs like R or SPSS to run these analyses because they do all the heavy lifting for you once you punch in your data.

Moreover, interpreting results is key too! If your p-value (another nerdy term) is less than 0.05, that’s usually taken as a sign that something interesting is happening: at least one group mean likely differs from the others. It doesn’t tell you how big the difference is, though, or which groups are responsible.
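If you want a feel for where a p-value comes from, one option is a permutation test: shuffle the group labels many times and count how often the shuffled data gives an F statistic at least as large as the real one. To be clear, this is a swapped-in technique for illustration, not the F-distribution p-value that R or SPSS would report, and the function names and data below are hypothetical.

```python
import random
from statistics import mean

def f_statistic(groups):
    """One-way ANOVA F statistic for a list of groups."""
    values = [x for g in groups for x in g]
    grand = mean(values)
    ss_between = sum(len(g) * (mean(g) - grand) ** 2 for g in groups)
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    return (ss_between / (len(groups) - 1)) / (ss_within / (len(values) - len(groups)))

def permutation_p_value(groups, n_permutations=2000, seed=0):
    """Estimate a p-value by reshuffling observations across groups."""
    rng = random.Random(seed)
    observed = f_statistic(groups)
    pooled = [x for g in groups for x in g]
    sizes = [len(g) for g in groups]
    at_least_as_extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        # Cut the shuffled pool back into groups of the original sizes.
        shuffled, start = [], 0
        for size in sizes:
            shuffled.append(pooled[start:start + size])
            start += size
        if f_statistic(shuffled) >= observed:
            at_least_as_extreme += 1
    # Add 1 to numerator and denominator so the estimate is never exactly zero.
    return (at_least_as_extreme + 1) / (n_permutations + 1)

# Hypothetical plant heights (cm); not real data.
groups = [
    [20.1, 21.3, 19.8, 20.7, 21.0],
    [22.4, 23.1, 22.8, 23.5, 22.0],
    [20.5, 20.9, 21.2, 19.9, 20.4],
]
p = permutation_p_value(groups)
print(f"permutation p-value: {p:.4f}")
```

A small value here means that random relabeling almost never produces group differences as strong as the ones actually observed.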

So yeah, understanding ANOVA allows researchers to sort through the noise and find meaningful differences in their experiments without getting lost in complicated numbers or endless graphs. It’s like finding clarity amidst chaos!

Just remember: while it’s powerful, it ain’t magic! Careful planning and good experimental design are still essential to get reliable results that mean something in the real world.

Exploring the Four Types of ANOVA: A Comprehensive Guide for Scientific Research

So, let’s chat about ANOVA, which stands for Analysis of Variance. It’s a method you might use if you’re trying to figure out whether there are any statistically significant differences between the means of three or more groups. Now, there are actually four main types of ANOVA that researchers use, and each one has its own little quirks. You with me? Let’s break it down.

1. One-Way ANOVA

This is your go-to when you want to compare the means of three or more independent groups based on a single factor. Think about testing different fertilizers on plant growth; you’d have one factor (the type of fertilizer) and multiple groups (like A, B, C). If the results show a significant difference, then at least one fertilizer’s average growth differs from the others, though the test alone won’t tell you which one.

2. Two-Way ANOVA

Now, if you want to throw another factor into the mix, this is where the Two-Way ANOVA comes in handy. Imagine you’re looking at how both fertilizer type and watering frequency affect plant growth. You’d have a group for every combination of the two factors: Fertilizer A with daily watering, Fertilizer A with weekly watering, Fertilizer B with daily watering, and so on. And yes, it also tests interactions between these two factors! Sometimes those interactions can surprise you!
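Here is a rough sketch of how the two-way version splits things up into a fertilizer effect, a watering effect, and their interaction. It assumes a balanced design (every combination present, same number of plants in each), and the function name `two_way_anova` and the heights are invented for the example.

```python
from statistics import mean

def two_way_anova(cells):
    """cells maps (level_a, level_b) -> list of replicate measurements.

    Assumes a balanced design: every combination is present and has the
    same number of replicates. Returns the three F statistics.
    """
    a_levels = sorted({a for a, _ in cells})
    b_levels = sorted({b for _, b in cells})
    n = len(next(iter(cells.values())))  # replicates per cell
    grand = mean(x for values in cells.values() for x in values)

    a_means = {a: mean(x for b in b_levels for x in cells[(a, b)]) for a in a_levels}
    b_means = {b: mean(x for a in a_levels for x in cells[(a, b)]) for b in b_levels}

    ss_a = n * len(b_levels) * sum((m - grand) ** 2 for m in a_means.values())
    ss_b = n * len(a_levels) * sum((m - grand) ** 2 for m in b_means.values())
    # Interaction: what is left in each cell mean after removing both main effects.
    ss_ab = n * sum(
        (mean(cells[(a, b)]) - a_means[a] - b_means[b] + grand) ** 2
        for a in a_levels for b in b_levels
    )
    ss_within = sum((x - mean(v)) ** 2 for v in cells.values() for x in v)

    df_a, df_b = len(a_levels) - 1, len(b_levels) - 1
    df_ab = df_a * df_b
    df_within = len(a_levels) * len(b_levels) * (n - 1)
    ms_within = ss_within / df_within
    return {
        "factor_a": (ss_a / df_a) / ms_within,
        "factor_b": (ss_b / df_b) / ms_within,
        "interaction": (ss_ab / df_ab) / ms_within,
    }

# Hypothetical plant heights (cm) for fertilizer x watering; not real data.
plants = {
    ("A", "daily"): [21.0, 22.5, 21.8],
    ("A", "weekly"): [19.2, 18.7, 19.9],
    ("B", "daily"): [24.1, 23.6, 24.8],
    ("B", "weekly"): [19.5, 20.1, 19.0],
}
for effect, f_value in two_way_anova(plants).items():
    print(f"{effect}: F = {f_value:.2f}")
```

A large interaction F would suggest the fertilizer effect depends on how often you water, which is exactly the kind of surprise mentioned above.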

3. Repeated Measures ANOVA

This one’s for when you’re measuring the same group multiple times under different conditions or over time. For instance, if you were measuring blood pressure in a group of patients before treatment and then again after one month and three months—it helps account for variability within the same subjects instead of comparing completely different groups.
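As a small sketch of what “accounting for variability within the same subjects” means, the code below pulls the subject-to-subject differences out of the error term before forming the F statistic. The function name `repeated_measures_f` and the blood pressure readings are hypothetical, and the sketch assumes every subject was measured at every time point.

```python
from statistics import mean

def repeated_measures_f(table):
    """table[i][j] = measurement for subject i under condition j (no missing values).

    Returns (F, df_conditions, df_error) for the effect of condition.
    """
    n_subjects = len(table)
    n_conditions = len(table[0])
    grand = mean(x for row in table for x in row)

    condition_means = [mean(row[j] for row in table) for j in range(n_conditions)]
    subject_means = [mean(row) for row in table]

    ss_conditions = n_subjects * sum((m - grand) ** 2 for m in condition_means)
    ss_subjects = n_conditions * sum((m - grand) ** 2 for m in subject_means)
    ss_total = sum((x - grand) ** 2 for row in table for x in row)
    # Differences between subjects are removed, so they do not inflate the error.
    ss_error = ss_total - ss_conditions - ss_subjects

    df_conditions = n_conditions - 1
    df_error = (n_conditions - 1) * (n_subjects - 1)
    f_stat = (ss_conditions / df_conditions) / (ss_error / df_error)
    return f_stat, df_conditions, df_error

# Hypothetical systolic blood pressure: before treatment, after 1 month, after 3 months.
blood_pressure = [
    [150, 142, 138],
    [160, 151, 149],
    [145, 140, 135],
    [155, 150, 141],
]
f_stat, df_c, df_e = repeated_measures_f(blood_pressure)
print(f"F({df_c}, {df_e}) = {f_stat:.2f}")
```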

4. Mixed-Design ANOVA

Feeling adventurous and want to combine independent and repeated measures in a single study? Then Mixed-Design ANOVA is your answer! It lets you examine between-group and within-subject effects together. Let’s say you’re studying how medication impacts anxiety levels over several weeks (repeated measures) across different age groups (independent measure). How cool is that?

The common thread here? Each type helps researchers draw conclusions from data while considering variability among samples! And trust me; it’s super important to choose the right one based on your research design.

When using any form of ANOVA, remember that you’ll typically test hypotheses to see if at least one group mean differs from the others. This often involves setting a significance level (like p < 0.05).

In summary, whether it’s One-Way, Two-Way, Repeated Measures, or Mixed-Design, choosing the right type of ANOVA is crucial in scientific research—it’s like picking the right tool for a job! So keep experimenting with your data analysis—you never know what insights might pop up!

When to Use ANOVA vs. Z Test: A Comprehensive Guide for Scientific Research

Alright, so let’s break down the differences between ANOVA and the Z test. These are tools you’ll come across when you’re diving into statistical analysis in research.

So, first off, what’s a **Z test**? Well, it’s a statistical method used to determine whether there’s a significant difference between the means of two groups when you know the population variance. Imagine you’re comparing average heights of two different plant species under controlled conditions. You’d use a Z test if you have enough data points and if you know the variances.
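For the curious, a two-sample Z test is short enough to sketch with the standard library. The function name `two_sample_z_test`, the simulated heights, and the “known” standard deviations of 4.0 are all assumptions made up for the example.

```python
import random
from math import sqrt
from statistics import NormalDist, mean

def two_sample_z_test(sample_1, sample_2, sigma_1, sigma_2):
    """Two-sided z test for a difference in means with known population SDs."""
    standard_error = sqrt(sigma_1 ** 2 / len(sample_1) + sigma_2 ** 2 / len(sample_2))
    z = (mean(sample_1) - mean(sample_2)) / standard_error
    # Two-sided p-value from the standard normal distribution.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Simulated heights (cm) for two hypothetical plant species; not real data.
rng = random.Random(42)
species_1 = [rng.gauss(30.0, 4.0) for _ in range(40)]
species_2 = [rng.gauss(33.0, 4.0) for _ in range(40)]

z, p = two_sample_z_test(species_1, species_2, sigma_1=4.0, sigma_2=4.0)
print(f"z = {z:.2f}, p = {p:.4f}")
```

Notice that the standard deviations are passed in rather than estimated from the samples; that is the “known variance” part of the Z test.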

Now let’s talk about **ANOVA**, which stands for “Analysis of Variance.” This technique is used when you’re looking at more than two groups. Say you want to compare three or four types of fertilizer and their effects on plant growth. Using ANOVA would help determine if there are any significant differences among those groups.

Here’s where it gets interesting:

- When to Use a Z Test: If you’re comparing two groups only and have enough data. A common rule of thumb is at least 30 samples each, so that the sample means are approximately normally distributed.

- When to Use ANOVA: If you’ve got three or more groups, like comparing different teaching methods on student performance.

- Variances: The Z test assumes known population variances, while ANOVA estimates variability from the samples themselves.

Let’s not forget about the assumptions these tests make! With a Z test, your samples should ideally come from a normally distributed population, like getting a neat bell curve when plotting your data. ANOVA makes a similar normality assumption and also assumes that your samples come from populations with equal variances. This is called homogeneity of variance.
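A quick, informal way to eyeball the equal-variance assumption is to compare the largest and smallest sample variances. The sketch below does just that; the function name `variance_ratio` and the scores are hypothetical. A commonly quoted rule of thumb treats a ratio under roughly 4 as tolerable, but that is a rough guide rather than a formal test (Levene’s test is one formal option).

```python
from statistics import variance

def variance_ratio(groups):
    """Largest sample variance divided by the smallest across groups."""
    variances = [variance(g) for g in groups]
    return max(variances) / min(variances)

# Hypothetical test scores for three teaching methods; not real data.
scores = [
    [72, 75, 78, 71, 74],
    [80, 85, 79, 83, 88],
    [65, 70, 68, 72, 66],
]
print(f"variance ratio: {variance_ratio(scores):.2f}")
```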

Here’s an anecdote—the other day, I was helping my friend analyze some survey data from her baking class. She wanted to see if her new recipes were better than old ones based on taste scores given by different people. Since she had only two recipes, we rolled with the Z test for simplicity at first. But then she got ambitious and added more flavors! Suddenly we were in ANOVA territory since we had to compare multiple recipes at once.

Something else to remember: if your results show significance using ANOVA, you might need post hoc tests afterwards to find out which specific means differ from each other. It’s kind of like finding out who among your friends actually likes pineapple on pizza after saying they enjoy pizza in general!
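To show why post hoc tests need some care, here is a tiny sketch that lists every pair of groups you would have to compare and applies a Bonferroni correction to the significance threshold. Bonferroni is just one simple choice picked for illustration (Tukey’s HSD is another common one), and the function name `bonferroni_plan` and the recipe names are made up.

```python
from itertools import combinations

def bonferroni_plan(group_names, alpha=0.05):
    """Return all pairwise comparisons and the Bonferroni-adjusted threshold."""
    pairs = list(combinations(group_names, 2))
    # Divide alpha by the number of comparisons to limit false alarms overall.
    return pairs, alpha / len(pairs)

# Hypothetical recipe names.
pairs, adjusted_alpha = bonferroni_plan(["classic", "pineapple", "spicy", "veggie"])
for first, second in pairs:
    print(f"compare {first} vs {second}")
print(f"each comparison is judged against p < {adjusted_alpha:.4f}")
```

The number of pairs grows quickly as you add groups, which is why each individual comparison gets a stricter threshold.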

So basically, here is how you’d decide:

- If you’re only looking at two groups and know the population variance: Z test all the way!

- If you’re exploring multiple groups—ANOVA is your go-to!

And that wraps it up! Remember that choosing between these two methods depends largely on how many groups you’re comparing and whether you know the population variance. Good luck with your statistical adventures!

Alright, let’s chat about ANOVA, or Analysis of Variance, because it can get a bit technical but is so important in the world of science and research. You know those times when you’re trying to figure out if something is really making a difference? Like when you’re comparing the growth of plants watered with different types of fertilizers? That’s where ANOVA shines!

So, picture this: you’re at a friend’s barbecue. Everyone’s brought their own secret sauce recipe to try on the burgers. Some are sweet, some spicy. You bite into each one and think, “Wow, this one is so much better than that other one!” But how do you really know which sauce reigns supreme? It’s a bit like what scientists do when they want to compare three or more groups.

The beauty of ANOVA is that it lets researchers be all scientific about it. Instead of just saying, “Yeah, sauce A tastes better,” they can analyze data from taste tests and see if the differences in taste are statistically significant. It’s like being super precise with your taste buds! When you have multiple groups—like different fertilizers for plants or different nutritional diets for animals—ANOVA helps sort all that out.

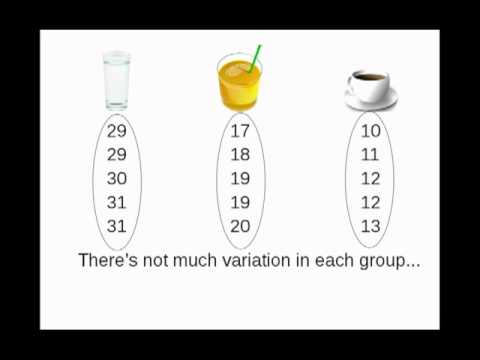

But here’s a little twist: ANOVA isn’t just about saying which group is the best; it works by comparing how much the group averages differ from each other against how much variation there is within each group. So let’s say you’ve got two types of sauce that perform really well; ANOVA, together with follow-up tests, helps establish whether they’re equally awesome or whether one has an edge over the other.

I remember working on a school project once where we had to test how light affects plant growth using different colors of light bulbs. We measured height and leaf size every week for a few months. After analyzing our data with some help from our teacher using ANOVA techniques, we could see not only which color led to taller plants but also how consistent those results were across all our samples. It was eye-opening—kind of made me appreciate math in science more!

Basically, ANOVA lets scientists sift through their data more effectively without getting lost in numbers. When used right, it can change the game in experimental design and interpretation by providing clarity and cutting through noise. If you’ve got multiple variables at play (like temperature, lighting conditions, or ingredients), this technique helps make sense of everything.

And hey, while digging into statistics might sound dry at first glance, remember it’s all about uncovering truths in science! It connects dots and helps experimenters make informed decisions based on solid evidence rather than luck or gut feelings. So next time you’re curious about why something performs better than another thing in science experiments or research—you might just find an answer thanks to ANOVA! Isn’t it wild how numbers can tell such compelling stories?