You know what’s wild? There’s this thing called the Gram-Schmidt algorithm. Sounds fancy, right? But honestly, it’s just a way to take a bunch of vectors and make them line up at right angles to each other, like good ol’ soldiers in neat rows.

Picture your messy room—clothes everywhere, and you can’t find your favorite shirt. Wouldn’t it be great if there was a magical way to organize that chaos? Well, that’s kind of what this algorithm does with vectors in math.

Researchers are totally harnessing this little gem in all sorts of cool ways. From data analysis to computer graphics, it’s like the unsung hero doing the heavy lifting behind the scenes. Curious yet? Let’s dig in and see how it all works!

Understanding the Gram-Schmidt Process: Step-by-Step Guide for Linear Algebra Applications

The Gram-Schmidt process, huh? It sounds complicated, but it’s really just a neat way to take a set of vectors and make them orthogonal. So, you know, when I first tackled this in college, I totally felt like I was drowning in equations. But once it clicked, it became this cool tool I could use over and over. Let’s break this down together.

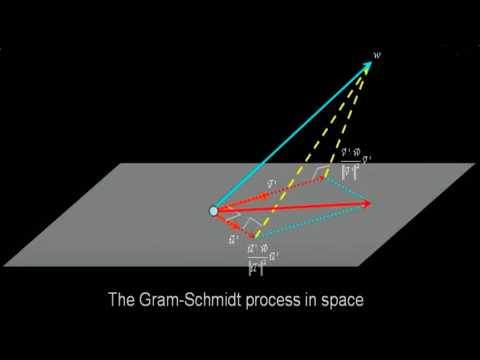

The main idea behind the Gram-Schmidt process is to start with a linearly independent set of vectors and turn them into an orthogonal (or even orthonormal) set. Why would you want that? Well, orthogonal vectors are super useful in lots of areas like data science and physics because they can make calculations way easier.

So here’s how we do it:

- Start with your original set of vectors. Let’s say you have two vectors: **u1** and **u2**.

- First vector stays the same. Your first vector becomes your first orthogonal vector: **v1 = u1**.

- Getting the second one right! To find the second orthogonal vector, **v2**, we need to adjust **u2** so it doesn’t overlap with **v1**:

- You’ll project **u2** onto **v1**: think about casting a shadow! The formula looks like this:

proj_v1(u2) = ((u2 • v1) / (v1 • v1)) * v1

- Then subtract that projection from **u2**:

v2 = u2 - proj_v1(u2)

- If needed, normalize! If you want not just orthogonal but also orthonormal vectors (vectors of unit length), simply divide each vector by its length:

e.g., if |v| = √(v • v), then normalize as e = v / |v|.
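Here’s a minimal sketch of those steps in plain Python, just to make them concrete. The function names (`dot`, `orthogonalize_pair`, `normalize`) and the example vectors are made up for illustration, and the code assumes **u1** isn’t the zero vector.

```python
import math

def dot(a, b):
    """Dot product: sum of element-wise products."""
    return sum(x * y for x, y in zip(a, b))

def orthogonalize_pair(u1, u2):
    """Return (v1, v2) with v1 = u1 and v2 = u2 minus its projection onto v1."""
    v1 = list(u1)
    scale = dot(u2, v1) / dot(v1, v1)        # (u2 . v1) / (v1 . v1)
    projection = [scale * x for x in v1]     # proj_v1(u2), the "shadow"
    v2 = [a - p for a, p in zip(u2, projection)]
    return v1, v2

def normalize(v):
    """Divide a vector by its length so it has unit length."""
    length = math.sqrt(dot(v, v))
    return [x / length for x in v]

v1, v2 = orthogonalize_pair([1.0, 1.0], [1.0, 0.0])
print(v2)            # [0.5, -0.5]
print(dot(v1, v2))   # 0.0, so v1 and v2 are orthogonal

# Optional last step: scale both to unit length.
e1, e2 = normalize(v1), normalize(v2)
```

A dot product of zero is the quick sanity check that the shadow really got removed.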

Let me tell you about the time I had to use this method for a project on signal processing. We were dealing with different signals that needed to be separated without interference. By applying the Gram-Schmidt process to our signal vectors, we managed to optimize our algorithms significantly! That was such a win.

Another cool aspect of the Gram-Schmidt process? It’s not just for two or three-dimensional spaces—in fact, it works in higher dimensions too! Just keep adding projections as necessary until you’ve processed all your vectors.

So remember: whether you’re working on computational problems or diving into research that involves any sort of data analysis or machine learning, harnessing this method will give you clarity and efficiency. Pretty sweet stuff!

Understanding the Mathematical Steps of the Gram-Schmidt Orthogonalization Process in Linear Algebra

So, let’s chat about the **Gram-Schmidt Orthogonalization Process**. This funky-sounding method is like a breath of fresh air in linear algebra, helping us create an orthogonal set of vectors from any linearly independent set. This matters because, in many math or physics problems, orthogonal vectors (which are perpendicular to each other) make calculations a lot simpler. It’s super useful in things like computer graphics and even data science!

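Alright, here’s the deal. When you have a set of linearly independent vectors (let’s say **v1**, **v2**, and **v3**), the Gram-Schmidt process can help you get a new set that’s orthonormal. “Orthonormal” just means that the vectors are not only perpendicular but also have a length of one. One heads-up: the letters are swapped compared with the walkthrough above, so here the **v**’s are the original vectors and the **u**’s are the new orthogonal ones. Cool, right? Let’s break down the steps.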

First off, you start with your initial vector:

u1 = v1

This means your first vector in the new set will just be the first vector from your original set—easy peasy.

Next up is:

u2 = v2 - proj_u1(v2)

What’s “proj_u1(v2)”? It’s the projection of vector **v2** onto **u1**. The formula to find it is:

proj_u1(v2) = (dot(v2, u1) / dot(u1, u1)) * u1.

Here, “dot” refers to the dot product—the sum of element-wise products of two vectors.

Once you’ve calculated that projection, subtracting it from **v2** gives you the adjusted second vector **u2** that is now orthogonal to **u1**.
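To see the projection step with actual numbers, here’s a small sketch using Python’s `fractions` module so the arithmetic stays exact. The vectors and the helper names `dot` and `proj` are just picked for illustration.

```python
from fractions import Fraction

def dot(a, b):
    """Dot product: sum of element-wise products."""
    return sum(x * y for x, y in zip(a, b))

def proj(onto, v):
    """Projection of v onto the vector `onto`."""
    scale = dot(v, onto) / dot(onto, onto)
    return [scale * x for x in onto]

v1 = [Fraction(1), Fraction(1), Fraction(0)]
v2 = [Fraction(1), Fraction(0), Fraction(1)]

u1 = v1
p = proj(u1, v2)                       # (1/2, 1/2, 0)
u2 = [a - b for a, b in zip(v2, p)]    # (1/2, -1/2, 1)

print([str(x) for x in u2])            # ['1/2', '-1/2', '1']
print(dot(u1, u2))                     # 0
```

Exact fractions are only for readability here; with floats you’d expect a tiny rounding error instead of a clean zero.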

Now we tackle:

u3 = v3 - proj_u1(v3) - proj_u2(v3)

This one requires a bit more effort since we take into account projections onto both previous vectors. Using similar logic as before:

proj_ui(v3) = (dot(v3, ui) / dot(ui, ui)) * ui, applied once with **u1** and once with **u2**. Because **u1** and **u2** are already orthogonal to each other, subtracting both projections leaves a **u3** that is orthogonal to both.
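Here’s the third-vector step as a quick sketch, again with exact fractions. The starting values for **u1**, **u2**, and **v3** are an assumed example (they’re what you’d get from v1 = (1, 1, 0), v2 = (1, 0, 1), v3 = (0, 1, 1)), and `dot` and `proj` are illustrative helper names.

```python
from fractions import Fraction as F

def dot(a, b):
    """Dot product: sum of element-wise products."""
    return sum(x * y for x, y in zip(a, b))

def proj(onto, v):
    """Projection of v onto the vector `onto`."""
    scale = dot(v, onto) / dot(onto, onto)
    return [scale * x for x in onto]

# Assumed results of the first two steps for this example.
u1 = [F(1), F(1), F(0)]
u2 = [F(1, 2), F(-1, 2), F(1)]
v3 = [F(0), F(1), F(1)]

p1 = proj(u1, v3)    # (1/2, 1/2, 0)
p2 = proj(u2, v3)    # (1/6, -1/6, 1/3)
u3 = [a - b - c for a, b, c in zip(v3, p1, p2)]

print([str(x) for x in u3])       # ['-2/3', '2/3', '2/3']
print(dot(u3, u1), dot(u3, u2))   # 0 0
```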

After finding all these adjusted vectors (**u1**, **u2**, and maybe even more if you keep going), you’re not quite done yet! You gotta normalize them so they all have unit lengths (length of 1). Basically:

e_i = ui / ||ui||,

where ||ui|| is just the length of the vector: the square root of the sum of its squared components, like Pythagoras’ theorem but in more dimensions!

Finally, after normalizing each vector, you’ll end up with an orthonormal basis {e1, e2,…}.
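Putting it all together, the whole recipe fits in one short function. This is a sketch of the classical version described above, not a production routine: the name `gram_schmidt` and the tolerance `tol` are my own choices, and for serious numerical work you’d usually reach for a library QR factorization instead.

```python
import math

def dot(a, b):
    """Dot product: sum of element-wise products."""
    return sum(x * y for x, y in zip(a, b))

def gram_schmidt(vectors, tol=1e-12):
    """Return an orthonormal list e_1..e_k from linearly independent vectors."""
    orthogonal = []
    for v in vectors:
        u = list(v)
        # Subtract the projection of the original v onto every earlier u_i.
        for prev in orthogonal:
            coeff = dot(v, prev) / dot(prev, prev)
            u = [ui - coeff * pi for ui, pi in zip(u, prev)]
        # A (near) zero remainder means v depended on the earlier vectors.
        if math.sqrt(dot(u, u)) < tol:
            raise ValueError("vectors are (numerically) linearly dependent")
        orthogonal.append(u)
    # Normalize: e_i = u_i / ||u_i||
    return [[x / math.sqrt(dot(u, u)) for x in u] for u in orthogonal]

basis = gram_schmidt([[1.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 1.0]])

# Every pair should have dot product ~0, and every vector length ~1.
for i, ei in enumerate(basis):
    for j, ej in enumerate(basis):
        expected = 1.0 if i == j else 0.0
        assert abs(dot(ei, ej) - expected) < 1e-9
```

The dependence check is there because the process only works on linearly independent input; without it you’d end up dividing by (almost) zero.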

Why does this matter? Well think back on those times when you really wanted clear solutions for complex problems—a tidy batch of orthonormal vectors saves loads of headaches in calculations!

All this might seem daunting at first glance, but honestly? Breaking everything into those small steps can turn what looks complicated on paper into something manageable, even kinda fun! Remember the projections from earlier? Subtracting them is exactly what leaves you with those neat right angles, and that’s what makes this process such a game-changer in linear algebra.

So next time you’re scrolling through some research or tackling a problem set that flirts with linear transformations or eigenvalues, remember you’ve got this handy tool called Gram-Schmidt sitting in your back pocket!

Understanding the Randomized Gram-Schmidt Process in Linear Algebra and Its Applications in Scientific Computing

The Randomized Gram-Schmidt process is a neat twist on the classic Gram-Schmidt orthogonalization technique in linear algebra. It’s all about turning a set of vectors into an orthonormal set but in a way that’s, you know, faster and often more efficient for big datasets. Let’s break it down a bit.

To start off, the traditional Gram-Schmidt process takes a list of linearly independent vectors and transforms them into an orthogonal basis. What this means is that each vector in the new set is perpendicular to the others—like how your friend’s hallway isn’t cluttered with random stuff; everything has its place.

Now, with the randomized version, we introduce some randomness to make this whole process quicker, especially when dealing with huge datasets or matrices. In the formulation most often given this name in the numerical linear algebra literature, the randomness is a random “sketching” matrix that compresses each long vector into a much shorter one. The projection coefficients are then computed from those short sketches instead of from the full-length vectors, so the expensive inner products get a lot cheaper. (Randomly sampling a subset of the vectors is a related idea, but it’s a different technique.)

In practice, this can really speed things up! It works by:

- Reducing computational cost: Inner products are taken between short sketches instead of full-length vectors.

- Improving numerical stability: Published analyses report stability comparable to modified Gram-Schmidt, and better than the classical version when vectors are nearly linearly dependent.

- Maintaining accuracy: The output is orthonormal with respect to the sketched inner product, so it’s only approximately orthonormal in the usual sense, but with high probability it stays well conditioned.
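To give a feel for it, here’s a simplified sketch of one sketching-based take on the idea, where each long vector is compressed by a random matrix and the coefficients come from the short sketches. Treat it as an illustration under assumptions, not the reference algorithm: the Gaussian sketch matrix, the name `sketched_gram_schmidt`, and the `sketch_size` parameter are all my choices, and published versions typically solve a small least-squares problem for the coefficients where this one uses plain dot products.

```python
import math
import random

def dot(a, b):
    """Dot product: sum of element-wise products."""
    return sum(x * y for x, y in zip(a, b))

def apply_sketch(theta, v):
    """Multiply the k-by-n sketch matrix theta by the length-n vector v."""
    return [dot(row, v) for row in theta]

def sketched_gram_schmidt(vectors, sketch_size, seed=0):
    """Orthonormalize vectors with respect to a random sketched inner product."""
    rng = random.Random(seed)
    n = len(vectors[0])
    # Gaussian sketch matrix (an assumption; other random embeddings exist).
    theta = [[rng.gauss(0.0, 1.0) / math.sqrt(sketch_size) for _ in range(n)]
             for _ in range(sketch_size)]
    basis, sketches = [], []
    for w in vectors:
        p = apply_sketch(theta, w)
        # Coefficients use the short sketches, not the full-length vectors.
        coeffs = [dot(s, p) for s in sketches]
        q = list(w)
        for c, b in zip(coeffs, basis):
            q = [qi - c * bi for qi, bi in zip(q, b)]
        s = apply_sketch(theta, q)
        norm = math.sqrt(dot(s, s))     # length measured in the sketched space
        basis.append([x / norm for x in q])
        sketches.append([x / norm for x in s])
    return basis, sketches

data_rng = random.Random(1)
vectors = [[data_rng.uniform(-1.0, 1.0) for _ in range(200)] for _ in range(5)]
basis, sketches = sketched_gram_schmidt(vectors, sketch_size=40)
```

The sketches come out orthonormal up to rounding, while the full-length `basis` vectors are only approximately orthonormal. That’s the trade you make for doing the inner products on length-40 vectors instead of length-200 ones.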

Now picture this: imagine you’re doing research where you have thousands of data points from experiments—let’s say you’re studying protein structures or analyzing big climate data sets. Using randomized Gram-Schmidt can help you find patterns and correlations without needing to analyze all that data point by point.

One application lies in **scientific computing** where simulations often require large amounts of numerical data. Using this algorithm can yield better results faster when solving complicated equations or optimizing systems in areas like physics or engineering.

For instance, consider using it in **machine learning** algorithms where dimensionality reduction is key. If your dataset has more features (dimensions) than samples, randomized Gram-Schmidt helps create an efficient subspace representation while keeping essential information intact. That way, models train faster without losing critical insights.

So yeah, at its core, the Randomized Gram-Schmidt process gives researchers and scientists more tools for tackling complex problems efficiently while maintaining accuracy! Embracing randomness might sound counterintuitive at first but turns out it’s often just what’s needed to simplify things when numbers get big.

So, let’s chat about something that might sound a bit daunting at first—like the Gram-Schmidt Algorithm. Yeah, I know; it’s got that fancy name with a dash in it. But seriously, once you break it down, it’s not all that scary.

Picture this: you’re working on a research project that involves a bunch of data points, and they’re all kinda jumbled up in different directions. You want to make sense of them, sort of like organizing your messy room—finding a place for everything so you can actually find your stuff when you need it. That’s where Gram-Schmidt steps in.

Basically, what this algorithm does is take a bunch of vectors (which are just like arrows pointing in space) and turn them into an orthogonal set. Orthogonal means they’re all perpendicular to each other, so no vector has any component along another; think of them as friends who don’t influence one another’s decisions, like when you’re trying to pick what movie to watch and everyone has their own ideas instead of just going along with the majority.

I remember fumbling through my first linear algebra class and struggling with concepts like vector spaces and linear independence. It felt so abstract! But then our teacher broke it down using real-life examples—like how musicians harmonize without stepping on each other’s toes. It clicked! The Gram-Schmidt process became less about numbers on a page and more about relationships—a way to ensure each vector could “stand alone” with its own unique direction.

In scientific research, especially fields like physics or computer science, harnessing this process can be super handy. By transforming data into an orthogonal set, researchers can simplify their calculations or even reduce noise in their data sets. Imagine trying to pick out subtle signals from all that background chatter; having clean vectors makes that noise easier to push aside.

Of course, there are other methods out there too—it’s like having different tools in your toolbox—but Gram-Schmidt has this charm to it because of its systematic approach. It feels orderly and logical, which is comforting in the chaos that sometimes comes with research.

So yeah, while the name might sound intimidating at first glance, embracing concepts like the Gram-Schmidt Algorithm can be incredibly rewarding—and honestly? Pretty cool when you see how they play out in real-world applications! And who knew organizing vectors could be so relatable?