You know that feeling when you’re trying to figure something out, like why your plants keep dying even though you water them? You’re doing all the right things, but nothing seems to work. That’s kind of how it is with analyzing data sometimes.

So here’s the scoop: linear regression is like your go-to buddy for understanding relationships in data. It’s great for predicting stuff and making sense of numbers, but sometimes it leaves us hanging. What if I told you there’s a tool called ANOVA that can actually kick things up a notch?

Think of ANOVA as that friend who always has an extra pair of shoes on hand for those unexpected adventures. It helps you see if the changes in your data are significant or just random noise. Pretty cool, right?

Mixing these two together? Well, that’s where the magic happens! You’re about to dive into how using ANOVA can give you deeper insights from linear regression, and trust me—it’ll change the way you think about data analysis!

Leveraging ANOVA to Optimize Linear Regression Analysis: A Practical Example in Scientific Research

Alright, let’s break this down: ANOVA and linear regression. First off, you might be like, what are those? So, ANOVA stands for Analysis of Variance. It’s a technique that helps you figure out if there are significant differences between groups. Linear regression, on the other hand, is all about modeling relationships between variables. You get that?

Now, combining these two methods can really amp up your research game. Here’s the deal: when you’re running a linear regression analysis, you’re often trying to predict something based on one or more variables. But just having those variables isn’t always enough to understand the bigger picture. That’s where ANOVA struts in.

Think of it this way: imagine you’re studying the effects of different fertilizers on plant growth. You have three types of fertilizers (let’s say A, B, and C) and you want to see which one works best. Using ANOVA, you’d compare the mean growth of plants with each fertilizer to see if there’s a significant difference among them.

If ANOVA shows that at least one fertilizer group is significantly different from the others, you can follow up with linear regression to look deeper into how factors like sunlight or water levels could also impact that growth. So it becomes a powerful combo! You start linking each fertilizer type with its effects while controlling for those other variables.

- ANOVA tells you if differences exist between groups.

- Linear regression helps model those relationships while considering additional factors.

- The combination enhances your insights about multiple influences on outcomes.
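To make the fertilizer comparison concrete, here's a minimal sketch of the one-way ANOVA F-statistic in plain Python. The growth numbers are made up for illustration, and `one_way_anova_f` is a hypothetical helper name, not a function from any library.

```python
from statistics import mean

# Hypothetical plant growth (cm) for three fertilizers; illustrative numbers only.
growth = {
    "A": [20.0, 21.0, 19.0, 22.0],
    "B": [25.0, 27.0, 26.0, 24.0],
    "C": [20.0, 22.0, 21.0, 21.0],
}

def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a dict of group -> observations."""
    all_values = [x for values in groups.values() for x in values]
    grand_mean = mean(all_values)
    # Between-group sum of squares: how far each group mean sits from the grand mean.
    ss_between = sum(len(v) * (mean(v) - grand_mean) ** 2 for v in groups.values())
    # Within-group sum of squares: scatter of observations around their own group mean.
    ss_within = sum((x - mean(v)) ** 2 for v in groups.values() for x in v)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within

f_stat, df_between, df_within = one_way_anova_f(growth)
print(f"F({df_between}, {df_within}) = {f_stat:.2f}")
```

With these invented numbers the F-statistic comes out at 22.75 on (2, 9) degrees of freedom, well above the usual 5% critical value of roughly 4.26, so you'd conclude that at least one fertilizer's mean growth differs from the others.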

A practical example here would be a study looking at how smoking affects lung capacity across different age groups. Let’s say we focus on three age brackets: Young, Middle-aged, and Elderly. First up: run a two-way ANOVA, with age bracket and smoking status as the two factors, to check whether mean lung capacity differs across the age brackets and between smokers and non-smokers.

If the results show that lung capacity varies significantly with age and smoking status, bam! Now you can use linear regression to take things further. You might want to explore how age interacts with smoking frequency, or how environmental factors like pollution levels affect lung health over time.

This approach provides a robust framework for drawing meaningful conclusions in your research—you know? It digs deeper than just surface-level comparisons or predictions!

The real beauty here lies in observation. Take what you’ve learned from ANOVA and apply it when modeling with regression—it’s a cycle of learning! Sooner or later you’ll find yourself uncovering insights you’d have missed without this dual approach!

Understanding the ANOVA Table in Simple Linear Regression: A Comprehensive Example in Scientific Research

So, let’s chat about something that’s often a bit tricky: the ANOVA table in simple linear regression. You might be thinking, “What’s that all about?” Well, don’t worry—I’ll break it down for you.

To start with, ANOVA stands for **Analysis of Variance**. It’s a statistical method used to compare the means of different groups. In the context of simple linear regression, we use ANOVA to figure out how much of the variance in our dependent variable can be explained by our independent variable.
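If it helps to see that idea as a formula: for a least-squares line with an intercept, the total variation in the dependent variable splits into a part the line explains and a part it leaves over. The symbols are just shorthand assumed for this sketch: $y_i$ is an observed value, $\bar{y}$ is the mean of the observed values, and $\hat{y}_i$ is the value the regression line predicts.

$$
\underbrace{\sum_{i=1}^{n} (y_i - \bar{y})^2}_{\text{SST (total)}}
=
\underbrace{\sum_{i=1}^{n} (\hat{y}_i - \bar{y})^2}_{\text{SSR (explained)}}
+
\underbrace{\sum_{i=1}^{n} (y_i - \hat{y}_i)^2}_{\text{SSE (unexplained)}}
$$

The share SSR / SST is the familiar $R^2$.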

Now, what does that mean? Let’s say you’re studying how study time affects test scores. In this case:

- Dependent Variable: Test scores (what you want to predict)

- Independent Variable: Study time (the factor affecting your prediction)

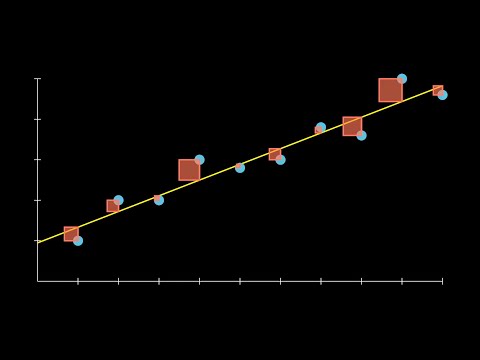

When you run a simple linear regression on this data, you get an equation that looks something like this: Test Score = b0 + b1 * Study Time. Here, b0 is your intercept and b1 is the slope of the line—kind of like how steeply your score goes up as study time increases.
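Here's a small sketch of how b0 and b1 could be computed by ordinary least squares. The eight data points are hypothetical, and `fit_line` is just an illustrative helper name.

```python
from statistics import mean

# Hypothetical data: hours studied and test scores for eight students.
study_time = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
test_score = [52.0, 55.0, 61.0, 60.0, 68.0, 70.0, 75.0, 79.0]

def fit_line(x, y):
    """Ordinary least squares for y = b0 + b1 * x; returns (b0, b1)."""
    x_bar, y_bar = mean(x), mean(y)
    # Slope: co-variation of x and y divided by the variation in x.
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b1 = sxy / sxx
    # Intercept: forces the line through the point (x_bar, y_bar).
    b0 = y_bar - b1 * x_bar
    return b0, b1

b0, b1 = fit_line(study_time, test_score)
print(f"Test Score = {b0:.2f} + {b1:.2f} * Study Time")
```

For this made-up data the fitted line is roughly Test Score = 47.64 + 3.86 * Study Time, meaning each extra hour of study goes along with about 3.9 more points.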

Now comes the fun part: the **ANOVA table**. This table helps summarize how well your model explains the variation in test scores based on study time. You’ll typically see several components in it:

- Sum of Squares (SS): This tells us how much total variation exists in our dependent variable and how much of this variation is accounted for by our model.

- Degrees of Freedom (df): This part relates to the number of independent pieces of information we have; for example, in a simple linear regression with one predictor, you’d have one degree of freedom for regression and n-2 for residuals.

- Mean Squares (MS): These are calculated by dividing each sum of squares by its corresponding degrees of freedom, which puts the explained and unexplained variation on a comparable scale.

- F-statistic: This value is super important! It’s the regression mean square divided by the residual mean square, so it compares variance explained by your model to unexplained variance. A larger F-statistic means your independent variable accounts for more of the variation in your dependent variable relative to the leftover noise.

- P-value: This indicates statistical significance. If it’s below a chosen threshold (often 0.05), the data would be unlikely if study time had no linear relationship with test scores, so you have evidence of an association.
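The pieces of the table above can be sketched in a few lines of plain Python. The study-time data is hypothetical and `anova_table` is an illustrative helper, not a library function; the P-value column is left out here because the standard library has no F distribution.

```python
from statistics import mean

# Hypothetical data: hours studied and test scores for eight students.
study_time = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
test_score = [52.0, 55.0, 61.0, 60.0, 68.0, 70.0, 75.0, 79.0]

def anova_table(x, y):
    """Return {source: (SS, df, MS, F)} for a simple linear regression of y on x."""
    n = len(x)
    x_bar, y_bar = mean(x), mean(y)
    b1 = (sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
          / sum((xi - x_bar) ** 2 for xi in x))
    b0 = y_bar - b1 * x_bar
    fitted = [b0 + b1 * xi for xi in x]
    ss_reg = sum((fi - y_bar) ** 2 for fi in fitted)            # explained
    ss_res = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))   # unexplained
    ss_total = sum((yi - y_bar) ** 2 for yi in y)               # total
    df_reg, df_res = 1, n - 2
    ms_reg, ms_res = ss_reg / df_reg, ss_res / df_res
    return {
        "Regression": (ss_reg, df_reg, ms_reg, ms_reg / ms_res),
        "Residual": (ss_res, df_res, ms_res, None),
        "Total": (ss_total, n - 1, None, None),
    }

rows = anova_table(study_time, test_score)
print(f"{'Source':<12}{'SS':>10}{'df':>5}{'MS':>10}{'F':>10}")
for source, (ss, df, ms, f_stat) in rows.items():
    ms_text = f"{ms:>10.2f}" if ms is not None else ""
    f_text = f"{f_stat:>10.2f}" if f_stat is not None else ""
    print(f"{source:<12}{ss:>10.2f}{df:>5d}{ms_text}{f_text}")
```

For this invented data the regression and residual sums of squares add up to the total of 640, and the F-statistic is about 247.6 on (1, 6) degrees of freedom.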

Here’s where things can get real interesting! Let’s say after running your analysis, you end up with an F-statistic that has a P-value of 0.02. What does this mean? Well, if there were truly no linear relationship between study time and test scores, you’d see an F-statistic this large only about 2% of the time. Since that’s below our 0.05 threshold, we could say our findings are statistically significant!
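One way to get a feel for what a P-value measures is to estimate it by shuffling. To be clear, this is a swapped-in technique: statistical packages look the P-value up from the F distribution, while the sketch below uses a permutation approximation because it needs nothing beyond the standard library. The data, the helper names, the number of shuffles, and the seed are all assumptions made for illustration.

```python
import random
from statistics import mean

# Hypothetical data: hours studied and test scores for eight students.
study_time = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
test_score = [52.0, 55.0, 61.0, 60.0, 68.0, 70.0, 75.0, 79.0]

def f_statistic(x, y):
    """F-statistic of a simple linear regression of y on x."""
    n = len(x)
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    ss_total = sum((yi - y_bar) ** 2 for yi in y)
    ss_reg = sxy ** 2 / sxx          # variation explained by the line
    ss_res = ss_total - ss_reg       # variation left over
    return ss_reg / (ss_res / (n - 2))

def permutation_p_value(x, y, n_shuffles=2000, seed=0):
    """Share of shuffled datasets whose F is at least as large as the observed F."""
    rng = random.Random(seed)
    observed = f_statistic(x, y)
    shuffled = list(y)
    hits = 0
    for _ in range(n_shuffles):
        # Shuffling the scores breaks any real link between study time and score.
        rng.shuffle(shuffled)
        if f_statistic(x, shuffled) >= observed:
            hits += 1
    # Add 1 to numerator and denominator so the estimate is never exactly zero.
    return (hits + 1) / (n_shuffles + 1)

p_value = permutation_p_value(study_time, test_score)
print(f"Approximate P-value: {p_value:.4f}")
```

If shuffled data almost never produces an F-statistic as large as the real one, the P-value is small, which is exactly the "unlikely under no relationship" reading.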

So imagine you’re doing this research because you’re passionate about helping students improve their performance—and seeing those numbers light up confirms that you’re onto something meaningful!

Isn’t it cool how just a few numbers can tell such an impactful story about learning?

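Basically, understanding ANOVA tables in simple linear regression sheds light on whether changes in one thing go hand in hand with changes in another, like longer study time being associated with better test grades! Just keep in mind that a significant F-test shows an association; on its own it doesn’t prove that studying longer causes the higher grades.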

To sum up:

- The ANOVA table provides essential insights into the effectiveness of your regression model.

- Certain elements like F-statistic and P-value help gauge if there’s a real relationship at play.

- This analysis isn’t just dry figures; it can be pivotal for making education better!

And just like that, you’ve got a clearer picture! So next time you hear someone talk about ANOVA or regression models at a party—yup, they’re not boring at all!

Enhancing Linear Regression Insights with ANOVA: A Scientific Approach to Data Analysis

Linear regression and ANOVA—what a duo! They each play a role in data analysis that can really light up the path to understanding our data better.

So, you might be wondering: what’s linear regression all about? It’s a statistical method used to understand the relationship between an outcome and one or more predictor variables, basically seeing how the outcome changes when a predictor changes. Think of it like trying to figure out how much more money you could earn depending on the hours you work. More hours could mean more cash, right?

But here’s the catch. Sometimes our data isn’t just about two variables—it can involve multiple groups or categories. And that’s where ANOVA, or Analysis of Variance, comes strutting in. It helps us compare means across different groups and see if at least one group is different from the others.

Now, combining linear regression with ANOVA can enhance our insights and give us a clearer picture of what those relationships actually look like. Here’s how they work together:

- Understanding Group Effects: Say you’re studying student test scores across several classes. Linear regression would help predict scores based on hours studied, but ANOVA tells you if there’s a significant difference between classes.

- Interpreting Residuals: When you run a linear regression model, you’re looking at residuals, which are the differences between your actual and predicted values. Comparing the size of those residuals across groups (an ANOVA on absolute residuals is the idea behind Levene’s test) can help check if they are consistently spread out or if there are patterns indicating something amiss.

- Testing Interactions: Sometimes variables interact in ways that affect your outcome. If you’re looking at study time and type of study method together using linear regression, ANOVA can test whether the effect of study time depends on the method (the interaction term), not just whether the methods differ on average.
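The "group effects" idea from the list above can be sketched as a comparison of two nested regression models: one line for everybody versus a shared slope with a separate intercept per class. The F-statistic below asks whether letting classes differ reduces the leftover error by more than chance would. All data and function names here are hypothetical.

```python
from statistics import mean

# Hypothetical (hours studied, test score) pairs for three classes.
classes = {
    "Class 1": [(2.0, 60.0), (4.0, 66.0), (6.0, 71.0), (8.0, 78.0)],
    "Class 2": [(2.0, 66.0), (4.0, 71.0), (6.0, 78.0), (8.0, 83.0)],
    "Class 3": [(2.0, 59.0), (4.0, 67.0), (6.0, 72.0), (8.0, 77.0)],
}

def centered_sums(points):
    """Return (Sxx, Sxy, Syy) around the means of the given points."""
    x_bar = mean(x for x, _ in points)
    y_bar = mean(y for _, y in points)
    sxx = sum((x - x_bar) ** 2 for x, _ in points)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in points)
    syy = sum((y - y_bar) ** 2 for _, y in points)
    return sxx, sxy, syy

def sse_single_line(points):
    """Residual sum of squares when one line is fitted to everyone (reduced model)."""
    sxx, sxy, syy = centered_sums(points)
    return syy - sxy ** 2 / sxx

def sse_class_intercepts(groups):
    """Residual sum of squares for a shared slope plus one intercept per class (full model)."""
    # Centering within each class removes the class intercepts; pooling gives the shared slope.
    sums = [centered_sums(points) for points in groups.values()]
    sxx = sum(s[0] for s in sums)
    sxy = sum(s[1] for s in sums)
    syy = sum(s[2] for s in sums)
    return syy - sxy ** 2 / sxx

def class_effect_f(groups):
    """F-test for whether class intercepts improve on a single regression line."""
    all_points = [p for points in groups.values() for p in points]
    n, k = len(all_points), len(groups)
    sse_reduced = sse_single_line(all_points)
    sse_full = sse_class_intercepts(groups)
    df_num = k - 1          # extra parameters in the full model
    df_den = n - k - 1      # residual degrees of freedom of the full model
    f_stat = ((sse_reduced - sse_full) / df_num) / (sse_full / df_den)
    return f_stat, df_num, df_den

f_stat, df_num, df_den = class_effect_f(classes)
print(f"Class effect: F({df_num}, {df_den}) = {f_stat:.2f}")
```

With these invented scores, F comes out around 83.3 on (2, 8) degrees of freedom, far above the usual 5% critical value of roughly 4.46, so class membership matters even after accounting for hours studied. The sketch assumes the slope is the same in every class; testing that assumption would mean adding the interaction term.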

Consider this: imagine you’ve done some research on how exercise impacts weight loss among different age groups—like teens versus older adults—using linear regression to see how changes in workout frequency affect weight loss. But hey, wouldn’t it be handy to know if one age group loses weight differently than the other? That’s where ANOVA shines; it provides insights into whether age indeed plays a role.

It’s not just about figuring out relationships; it’s also about filling in those little gaps of uncertainty we often face with data analysis. By applying ANOVA to our models after running linear regression, we’re not only making sense of individual predictors but also gaining insight into where differences lie among groups.

So when you’re analyzing your data next time, think about adding that sprinkle of ANOVA magic on top of your linear regression insights! Seriously, who wouldn’t want more clarity when sifting through numbers and trends? It makes everything feel way more connected—and that should be satisfying for any curious mind out there!

So, you know how sometimes when you’re trying to figure out why something happens, it feels like a puzzle with a couple of missing pieces? Well, that’s kind of the vibe when you’re diving into data analysis—especially with linear regression and ANOVA. It’s like having two tools that work really well together but often don’t get the spotlight they deserve.

Imagine you’re doing some research on how different factors like study time, sleep, and caffeine intake affect student grades. Linear regression is great because it helps you see relationships. Like, if you put in more hours studying, do your grades improve? But then you’ve got ANOVA (which stands for Analysis of Variance) hanging around too, and that’s where things get interesting.

ANOVA lets you compare multiple groups at once. So rather than just looking at one thing at a time—like comparing students who sleep 6 hours versus those who sleep 8—you can see if there are significant differences in grades across all study times or sleep levels. Say you find out that students studying between 3-5 hours score differently than those pulling all-nighters. That extra info is gold! It adds another layer to your understanding.

Let me tell you a little story here. A friend of mine worked on her thesis about exercise and academic performance. She just did a simple linear regression first and got some decent insights about how regular exercise correlated with better grades. But when she brought in ANOVA to look at different types of exercise—like cardio versus strength training—the results totally shifted! She discovered that strength training showed even more significant associations with grades than she ever expected. It was like turning on a light in a dim room; suddenly everything was clearer.

So basically, while linear regression gives you the relationship power between your variables, ANOVA helps enhance those insights by allowing comparisons across multiple groups simultaneously. It’s like having a wider lens when you’re trying to capture the full picture—it helps prevent missing critical details.

And here’s the thing: using them together isn’t just smart; it makes your research a whole lot richer and deeper. You start seeing patterns that might not have popped up otherwise! And isn’t that what research is all about? Exploring the unknown and finding those hidden gems?