You ever forget where you left your keys? Like, one moment they’re in your hand, and the next, poof! Gone. It’s kind of like how our brains work. They remember stuff, forget some things, and sometimes surprise us with random memories—like that time you tripped over your shoelaces in front of your crush.

Now, imagine trying to teach a computer to do something similar. That’s where Long Short-Term Memory networks come in. So cool, right? They help machines remember important details without getting tangled up in all the noise, like a super smart friend who retains the useful stuff from high school but won’t recall every boring history lecture.

But how do they actually work? Let’s dig a little deeper into this brainy tech and see what makes it tick!

Leveraging Long Short-Term Memory Networks for Advanced Machine Learning Applications in Python

Long Short-Term Memory networks, or LSTMs for short, are a type of recurrent neural network that’s great at processing sequences of data. Think of them as a memory aid for machines, allowing them to remember information over long periods. Sounds neat, huh?

LSTMs are super useful in various advanced machine learning applications. Why? Well, they handle time-series data really well! Whether you’re predicting stock prices or analyzing speech signals, LSTMs can recognize patterns over time. It’s like they’ve got a special knack for remembering past inputs while keeping an eye on new ones.

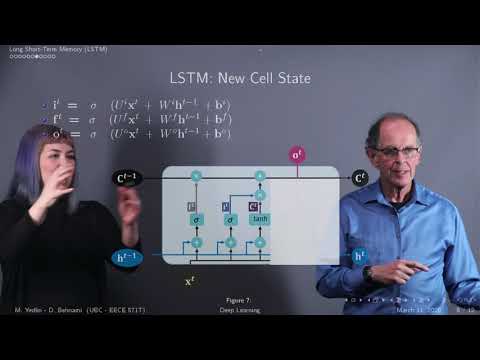

But here’s the kicker: plain recurrent networks often struggle with long sequences, because the training signal from early inputs fades as it is passed back through many time steps (the vanishing gradient problem), so earlier information effectively gets forgotten. That’s where LSTMs shine! They have a built-in mechanism called **gates**, which control the flow of information. Imagine a door that opens only when some piece of info really needs to get through. That’s basically what these gates do.

Take an example from natural language processing. When you’re typing a text message, your brain remembers what you just said to keep the conversation flowing smoothly. Similarly, an LSTM maintains context in sentences by remembering prior words and how they relate to what comes next. It can help with tasks like translation or even generating coherent text.

Now, if you’re excited about diving into code, working with LSTMs in Python is pretty straightforward thanks to libraries like TensorFlow and Keras. You’d typically start by defining your model’s layers and parameters based on your specific needs.

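A minimal model might look like this:

```python
from keras.models import Sequential
from keras.layers import LSTM, Dense

# Placeholder input shape: set these to match your own data
timesteps, features = 10, 1

model = Sequential()
# 50 LSTM units reading sequences of shape (timesteps, features)
model.add(LSTM(50, input_shape=(timesteps, features)))
# Single output value, e.g. the next point in a series
model.add(Dense(1))
model.compile(loss='mean_squared_error', optimizer='adam')
```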

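This simple snippet sets up an LSTM layer with 50 units (the size of the layer’s hidden state), adds a single-output `Dense` layer on top, and compiles the model with a mean-squared-error loss and the Adam optimizer so it’s ready for training on your data.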

Training those networks typically involves feeding them sequences of data so they learn patterns over time. You need enough training examples—for instance, loads of text documents if you’re working on language tasks—to really get those memory gates working optimally.
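
To make "feeding them sequences" a bit more concrete, here is a minimal sketch of one common way to prepare a time series: slide a fixed-size window over it and use the value right after each window as the target. The helper name `make_windows`, the window length of 10, and the sine-wave toy data are all assumptions made for illustration.

```python
import numpy as np

def make_windows(series, timesteps):
    """Slice a 1-D series into (samples, timesteps, 1) inputs and next-step targets."""
    series = np.asarray(series, dtype="float32")
    X, y = [], []
    for start in range(len(series) - timesteps):
        X.append(series[start:start + timesteps])  # the past `timesteps` values
        y.append(series[start + timesteps])        # the value that follows them
    X = np.array(X).reshape(-1, timesteps, 1)      # one feature per time step
    return X, np.array(y)

# Toy data: a sine wave standing in for a real time series
series = np.sin(np.linspace(0, 20, 200))
X, y = make_windows(series, timesteps=10)
print(X.shape, y.shape)  # (190, 10, 1) (190,)
```

The resulting `X` has the `(samples, timesteps, features)` layout that a Keras LSTM layer expects, so it could be passed to `model.fit(X, y)` for a model built with `timesteps=10` and `features=1`.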

Also important is adjusting hyperparameters like the number of layers and dropout rates to avoid overfitting—kind of like making sure you don’t memorize answers but can actually understand concepts!
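
As a rough sketch of what turning those knobs can look like, here is a stacked LSTM with a dropout layer after each LSTM layer. The helper name `build_model`, its defaults (two layers, 50 units, a dropout rate of 0.2), and the input shape are illustrative assumptions, not recommended settings.

```python
from keras import Input, Sequential
from keras.layers import LSTM, Dense, Dropout

# Assumed input shape: 10 time steps with 1 feature each
timesteps, features = 10, 1

def build_model(units=50, n_layers=2, dropout=0.2):
    """Stack `n_layers` LSTM layers, each followed by dropout."""
    model = Sequential()
    model.add(Input(shape=(timesteps, features)))
    for i in range(n_layers):
        is_last = i == n_layers - 1
        # Every LSTM layer except the last returns the full sequence,
        # so the next LSTM layer still receives one vector per time step
        model.add(LSTM(units, return_sequences=not is_last))
        # Randomly zero a fraction of activations during training
        model.add(Dropout(dropout))
    model.add(Dense(1))
    model.compile(loss="mean_squared_error", optimizer="adam")
    return model

model = build_model()
model.summary()
```

Because the layer count and dropout rate are plain function arguments, it is easy to try a few combinations and compare them on held-out validation data rather than on the training set.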

And here’s something cool: researchers are finding that combining LSTMs with other models—like convolutional neural networks (CNNs)—can improve performance even further! This hybrid approach lets you tackle complex problems across different domains.

So basically, Long Short-Term Memory networks open up a whole new world for advanced machine learning applications in Python. With their ability to remember important information across time steps while ignoring irrelevant noise, they’re just fantastic when it comes to making sense of sequential data—be it audio recordings or stock market trends.

In the end? It’s all about understanding how these models work so you can leverage their power effectively!

Enhancing Machine Learning Capabilities through Long Short-Term Memory: A Comprehensive PDF Guide

Let’s chat about enhancing machine learning with something called Long Short-Term Memory, or LSTM for short. This is a pretty interesting area in the world of artificial intelligence, especially if you’re looking to make your models better at understanding sequences of data.

So, **LSTM** is a type of recurrent neural network (RNN). But, what does that even mean? Well, RNNs are designed to work with sequences of data—like sentences, music notes, or even stock prices over time. The cool thing about LSTMs is that they can remember information for a longer time than regular RNNs. It’s as if they’ve got a great memory for details while also being able to forget irrelevant stuff—pretty neat, right?

Imagine you’re trying to predict the next word in a sentence. If you only look at the last few words, you might miss the context set by earlier words. That’s where LSTMs shine! They can keep track of important information from much earlier in the sequence while filtering out what’s not needed.

Here are some key features of LSTMs:

- **Memory cells:** These store information over long periods. Think of them like sticky notes in your brain that remind you of important things.

- **Gates:** There are three main gates—input gate, forget gate, and output gate. These gates control what information goes in and out. It’s like having a bouncer at a club deciding who gets in!

- **Long-range dependencies:** LSTMs excel at capturing relationships between events that are far apart in time. This is super useful for tasks like language translation or analyzing trends in stock market data.
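
To see how the memory cell and the three gates fit together, here is a minimal NumPy sketch of a single LSTM time step using the standard formulation. The function name `lstm_step`, the way the four gate blocks are stacked inside `W`, and the tiny random weights are assumptions made for illustration; real libraries organize their weights differently and learn them during training.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def lstm_step(x, h_prev, c_prev, W, b):
    """One LSTM time step; W maps [h_prev, x] to four stacked gate pre-activations."""
    hidden = h_prev.shape[0]
    z = W @ np.concatenate([h_prev, x]) + b
    f = sigmoid(z[0 * hidden:1 * hidden])  # forget gate: how much old memory to keep
    i = sigmoid(z[1 * hidden:2 * hidden])  # input gate: how much new info to write
    o = sigmoid(z[2 * hidden:3 * hidden])  # output gate: how much memory to expose
    g = np.tanh(z[3 * hidden:4 * hidden])  # candidate values to write
    c = f * c_prev + i * g                 # updated memory cell
    h = o * np.tanh(c)                     # new hidden state (the step's output)
    return h, c

# Untrained toy weights, just to run the step on a short random sequence
rng = np.random.default_rng(0)
hidden, features = 4, 3
W = rng.normal(scale=0.1, size=(4 * hidden, hidden + features))
b = np.zeros(4 * hidden)
h, c = np.zeros(hidden), np.zeros(hidden)
for x in rng.normal(size=(5, features)):
    h, c = lstm_step(x, h, c, W, b)
print(h.shape, c.shape)  # (4,) (4,)
```

When the forget gate sits near 1 and the input gate near 0, the line `c = f * c_prev + i * g` carries the cell state forward almost unchanged, which is how information can survive across many time steps.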

Now let me tell you a little story to illustrate how this works in real life. Picture this: you’re watching your favorite show on TV, and there’s a huge plot twist revealed after several episodes—a twist that ties back to something mentioned way earlier! If you could only remember just the last couple of episodes, you’d probably be lost when it comes time for that big reveal. That’s how regular RNNs might struggle; they forget important context too quickly. On the other hand, LSTMs would keep track of all those key moments leading up to it!

In practice, LSTMs have been used in various applications:

- **Speech recognition:** They help systems understand spoken language by remembering context from previous words.

- **Text generation:** Ever seen AI write stories? That’s often powered by LSTM networks predicting one word after another based on prior text.

- **Medical diagnosis:** By analyzing patient data over time, they assist doctors in predicting potential health risks.

It’s like giving machines an extra brain that can remember things longer than just one dinner conversation!

To sum it all up—LSTM networks kick some serious butt when it comes to handling sequential data because they combine memory and filtering mechanisms effectively. So if you’re into machine learning or just curious about how computers learn patterns over time, understanding LSTMs is definitely worth your while!

Advancements in Long Short-Term Memory Supervised Sequence Labeling Using Recurrent Neural Networks in Scientific Research

Recurrent Neural Networks, or RNNs for short, have become a big deal in the world of machine learning. They’re especially useful when it comes to processing sequences of data. Think of when you’re scrolling through your social media feed – it’s all about understanding the context of what came before as you read. That’s where **Long Short-Term Memory (LSTM)** comes into play.

LSTMs are a special kind of RNN designed to remember information for long and short periods. Imagine you’re reading a story. You need to remember details from the beginning while keeping track of what’s happening right now. LSTMs help machines do just that! Here’s how they work:

- **Memory cells:** They use structures called memory cells that can store information. These cells decide what to keep around and what to forget, so they can maintain relevant context.

- **Gates:** LSTMs have three gates: input, output, and forget gates. The input gate controls what new information goes in; the forget gate decides what’s no longer relevant; and the output gate determines what information is sent out.

This capability makes LSTMs super cool for tasks like **sequence labeling** in natural language processing (NLP). For instance, if we wanted a machine to label parts of speech in a sentence—the nouns, verbs, adjectives—it needs to understand not just individual words but also their relationships over time.
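
Here is a hedged sketch of what a sequence-labeling model along those lines might look like in Keras. The vocabulary size, number of tags, sentence length, and layer sizes are made-up placeholders, and real taggers often add refinements (such as bidirectional layers) that are left out here.

```python
from keras import Input, Sequential
from keras.layers import Embedding, LSTM, Dense

# Hypothetical sizes: 5,000 known words, 12 part-of-speech tags,
# sentences padded or truncated to 40 tokens
vocab_size, n_tags, max_len = 5000, 12, 40

model = Sequential([
    Input(shape=(max_len,), dtype="int32"),  # one integer word ID per token
    Embedding(vocab_size, 64),               # word ID -> 64-dimensional vector
    LSTM(64, return_sequences=True),         # keep one output per token
    Dense(n_tags, activation="softmax"),     # tag probabilities for each token
])
model.compile(loss="sparse_categorical_crossentropy", optimizer="adam")
model.summary()
```

The key detail is `return_sequences=True`: instead of summarizing the whole sentence into one vector, the LSTM emits an output at every position, so the final layer can predict one tag per word.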

In scientific research, this is huge! Consider drug discovery: researchers can analyze chemical compounds represented as sequences to predict which ones might work best together. Using LSTM networks for sequence labeling in these contexts could help identify promising candidates faster than with traditional methods.

One anecdote comes to mind: a researcher shared how, after years of hard work, a particular LSTM model helped them finally see patterns in their data that were invisible before. It was like finding buried treasure after searching for so long!

And look, while traditional algorithms might struggle with long dependencies—like remembering the start of one complex theory while analyzing another—LSTMs shine because they can manage that complexity without losing vital context.

So yeah, advancements in LSTM application are truly reshaping our approach to various fields within scientific research. From better understanding language intricacies to making sense of complex biological data—you get a sense that we’ve only scratched the surface here! It’s exciting stuff; who knows where it’ll lead us next?

So, let’s step back and chat about Long Short-Term Memory, or LSTM for short. It sounds pretty technical, right? Like something out of a sci-fi movie. But stick with me! It’s actually a pretty cool concept in the world of machine learning.

Imagine you’ve got a kid learning to speak. At first, they might just babble and make sounds that don’t make sense. But over time, they start connecting words with meanings—like maybe “mama” means their mom is around. That ability to remember what was said moments ago and relate it to what’s happening now? That’s what LSTM does for machines.

I remember the first time I saw an AI program predict the next word in a sentence based on context. Seriously, it blew my mind! This wasn’t some random guess; it had to pull from all this past information—like what words usually come together in English, or even how the tone of a conversation might shift.

So why does this matter? Well, think about how much data we generate every day: texts, tweets, emails—all those little bits of information contain context that’s super important for understanding meaning. Without LSTM in play, machines would struggle to keep track of all that nuance. They’re like toddlers trying to catch up in a conversation where everyone else is already advanced.

But here’s where it gets even more interesting: LSTMs can power more advanced capabilities in natural language processing, including things like predictive text and speech recognition. The more these systems learn from past data, with all its rhythms and nuances, the better they get at mimicking human-like understanding.

Of course, it’s not without hurdles. Machines don’t experience memory like people do; they can forget too easily or get confused by irrelevant details. It’s kind of like when you’re trying to remember someone’s name but your brain is flooded with random thoughts about lunch instead!

Anyway, as we harness this incredible tool called LSTM for machine learning, we’re pushing into frontiers I never imagined possible just a few years ago. Who knows? Maybe one day they’ll understand our feelings too! What do you think? Isn’t it wild where technology is heading?