So, picture this: you’re in a dense forest, totally lost. There are paths leading everywhere! You could go left, right, or straight ahead. And then, out of nowhere, a wise old tree shows up and gives you directions. That’s kinda what decision trees do: they help us navigate through complicated data.

You know those moments when you feel overwhelmed by choices? Like deciding what to have for dinner or which movie to watch? Decision trees break things down into simple yes-or-no questions. They guide you step-by-step until you reach a conclusion.

And trust me, it’s not just some nerdy math thing; it’s super helpful for scientists sifting through mountains of data. It’s like having that trusty compass in the woods, leading you where you need to go. So let’s talk about how these handy tools can help us interpret scientific data in ways that make sense!

Exploring the Role of Decision Trees in Data Science: Applications and Insights

So, let’s talk about decision trees. These are like those family trees you might have seen, but instead of showing who your relatives are, they help us make decisions based on data. It’s like having a little helper that sorts through information to guide you to the right choice.

What is a Decision Tree?

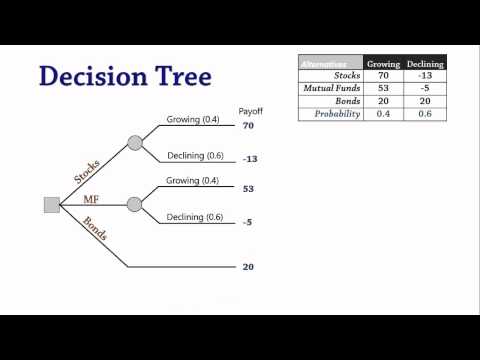

A decision tree is a flowchart-like structure where each internal node represents a test on an attribute (think of it like asking questions). Each branch represents the outcome of that test, and each leaf node (the end of the branch) contains a final decision or classification. It sounds simple, and it kinda is!

Imagine you’re trying to choose what snack to eat based on different preferences: Do you want something sweet or salty? If sweet, do you prefer chocolate or fruit? You can visualize your choices as branches leading to different snack options. That’s basically how decision trees work!
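To make the snack example concrete, here is a minimal sketch of that tree as a nested Python dictionary, with a small function that walks from the root to a leaf. The snack names and the `decide` helper are made up for illustration; nothing here comes from a real library.

```python
# A hand-written decision tree for the snack example.
# Internal nodes are dicts with a "question" and one branch per answer;
# leaves are plain strings holding the final decision.
# The snack names are invented for illustration.
SNACK_TREE = {
    "question": "sweet or salty?",
    "branches": {
        "sweet": {
            "question": "chocolate or fruit?",
            "branches": {
                "chocolate": "chocolate bar",
                "fruit": "apple slices",
            },
        },
        "salty": "pretzels",
    },
}


def decide(tree, answers):
    """Walk from the root to a leaf, using one answer per question."""
    node = tree
    for answer in answers:
        if isinstance(node, str):  # already at a leaf, ignore extra answers
            break
        node = node["branches"][answer]
    if not isinstance(node, str):
        raise ValueError("ran out of answers before reaching a leaf")
    return node


print(decide(SNACK_TREE, ["sweet", "fruit"]))  # apple slices
print(decide(SNACK_TREE, ["salty"]))           # pretzels
```

Each call follows exactly one path through the tree, which is why a prediction from a decision tree is so easy to explain afterwards.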

How Does it Work?

The way these trees are built is pretty cool. They use algorithms (basically, fancy math rules) to split data at each node based on certain conditions. The goal? To create groups that are each as uniform as possible inside, which also makes them as different from each other as possible. It’s like sorting your laundry: whites here, colors there. One common algorithm for this is ID3, which stands for Iterative Dichotomiser 3 and picks, at each node, the question with the highest information gain. You don’t need to remember that name though!
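If you’re curious what “picking the best split” looks like, here is a rough sketch of the information gain calculation that ID3 relies on. The tiny email dataset and the feature names (`has_link`, `all_caps`) are invented for illustration, and this only scores candidate questions; it is not a full ID3 implementation.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    return -sum((n / total) * log2(n / total) for n in Counter(labels).values())


def information_gain(rows, feature, target):
    """Entropy of the target before the split minus the weighted entropy after."""
    before = entropy([row[target] for row in rows])
    groups = {}
    for row in rows:
        groups.setdefault(row[feature], []).append(row[target])
    after = sum(len(g) / len(rows) * entropy(g) for g in groups.values())
    return before - after


# Made-up toy data: which question separates "spam" from "ham" better?
emails = [
    {"has_link": "yes", "all_caps": "yes", "label": "spam"},
    {"has_link": "yes", "all_caps": "no", "label": "spam"},
    {"has_link": "no", "all_caps": "yes", "label": "ham"},
    {"has_link": "no", "all_caps": "no", "label": "ham"},
]

features = ["has_link", "all_caps"]
for name in features:
    print(name, information_gain(emails, name, "label"))

# The feature with the highest gain becomes the question at this node.
best = max(features, key=lambda f: information_gain(emails, f, "label"))
print("split on:", best)
```

In this toy data `has_link` sorts the emails into two perfectly uniform piles, so it wins; `all_caps` leaves both piles just as mixed as before and gains nothing.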

Applications in Data Science

Now, let’s get into how these bad boys are used in real life:

- Medical Diagnosis: Decision trees can help doctors determine what illness someone might have based on symptoms. For example, if a patient has a fever and cough, the tree might suggest checking for flu.

- Customer Segmentation: Businesses use them to figure out different customer types by analyzing buying habits. It’s like finding out if you’re more likely to buy coffee or tea!

- Risk Assessment: In finance, they assess risk levels for loans by looking at past data about users’ credit scores and repayment history.

- Email Filtering: Think about spam filters! Decision trees can help decide whether an email goes into your inbox or your spam folder.

These examples show just how versatile decision trees can be! They take complex datasets and break them down into relatable decisions.

Benefits of Using Decision Trees

One reason decision trees are so popular in data science is their simplicity. They’re easy to understand—you don’t need a PhD in statistics! Plus:

- No Need for Data Normalization: Splits only care about whether a value falls above or below a threshold, so you don’t have to scale or normalize your features first.

- Easily Visualizable: You can draw them out, which makes explaining results simpler.

- Naturally Handles Both Numerical and Categorical Data: Whether you’re dealing with quantity (like height) or quality (like color), they’ve got you covered! Just note that some libraries still expect categories to be encoded as numbers first.

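But hey, nothing’s perfect! Left to grow unchecked, a tree can keep adding branches until it fits the quirks and noise in your training data, which is called overfitting. It’s kind of like packing a suitcase; if you throw everything in without thinking about essentials, it gets messy and hard to carry. Limiting the depth of the tree or pruning branches afterwards helps keep it in check.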

Anecdote Time!

I remember one time I was helping my buddy figure out what car he should buy based on his budget and needs—for instance: was he looking for something fuel-efficient or more powerful? We made our own little decision tree right there on paper! By just asking simple questions about preferences and priorities—we actually ended up narrowing down his options quite nicely! He ultimately found exactly what he wanted without feeling overwhelmed by choices.

In essence, decision trees serve as friendly guides through the heaps of information we face today—from health decisions to financial choices—they’re valuable tools that make sense of chaos. So next time you’re faced with loads of info and need clarity? Perhaps channel your inner mathematician with some tree-thinking!

Exploring the Capabilities of ChatGPT in Generating Decision Trees for Scientific Applications

Let’s talk about decision trees and how tools like ChatGPT can help us generate them for scientific applications. Decision trees are, at their core, a method used to make decisions based on data. They’re like flowcharts that guide you through choices by asking questions along the way.

What exactly is a decision tree? Well, imagine you have a bunch of data points about plants—like height, leaf color, flower type—and you want to figure out which conditions affect growth the most. A decision tree would start at the top with a question (like “Is the leaf color green?”) and branch off based on your answers. It keeps branching until it leads to conclusions or predictions. Pretty neat, huh?

Now here’s where ChatGPT comes into play. You could ask it to help generate these decision trees based on your specific data sets. By analyzing patterns in your input data, ChatGPT can suggest questions that should be asked first or even create the structure of your decision tree automatically.

A few key benefits of using ChatGPT for this task include:

- Speed: It saves time in constructing trees since it can process information quickly.

- Flexibility: You can easily tweak your inputs and get different kinds of trees tailored to what you’re interested in.

- Accessibility: Using everyday language makes it easier for scientists who might not be experts in data science.

Let’s say you’re studying how different fertilizers affect plant growth and use a dataset with growth measurements after applying various fertilizers over several months. You feed this data into ChatGPT and ask for a decision tree that identifies which fertilizer works best under certain conditions.

What happens next? The model processes all that info and spits out suggestions like:

1. “Was Fertilizer A applied?”

2. “Did the temperature exceed 20°C?”

This way, you get insights into how those conditions impact plant performance.
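The two questions above can be sketched as a small tree and then read back as plain if-then rules, which is a handy way to sanity-check whatever structure a tool suggests. The leaf labels below (“strong growth” and so on) are hypothetical placeholders, not results from any real experiment, and the `rules` helper is just an illustration.

```python
# Hypothetical tree built from the two example questions.
# The leaf labels are invented for illustration; they are not
# results from any real dataset.
TREE = {
    "question": "Was Fertilizer A applied?",
    "yes": {
        "question": "Did the temperature exceed 20°C?",
        "yes": "strong growth",
        "no": "moderate growth",
    },
    "no": "weak growth",
}


def rules(node, path=()):
    """Yield one human-readable rule per leaf of the tree."""
    if isinstance(node, str):
        conditions = " AND ".join(path) if path else "always"
        yield f"IF {conditions} THEN {node}"
        return
    for answer in ("yes", "no"):
        step = f"{node['question']} = {answer}"
        yield from rules(node[answer], path + (step,))


for rule in rules(TREE):
    print(rule)
```

Every leaf turns into one rule, so a tree with three leaves gives you three sentences you can check against what you know about your plants.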

Sounds simple enough! But wait, there’s still more! One cool thing about decision trees is they also help visualize important relationships within your data—so you can actually see how different factors interact with one another.

However, there’s a catch: while tools like ChatGPT can generate these trees quite efficiently, they rely on the quality of your input data. If your dataset is flawed or biased in any way, then the resulting decisions might lead you astray.

In short, using tools like ChatGPT for generating decision trees opens up some exciting possibilities for scientific research! The blend of technology and science helps make our findings clearer and more accessible—not just for researchers but also for anyone curious about the world around them.

So next time you’re drowning in data trying to figure out what it all means? Maybe give this approach a thought! You’ll find that merging AI with traditional methods isn’t just smart; it’s downright exciting too!

Evaluating the Interpretability of Decision Trees in Scientific Research: Insights and Implications

Decision trees are a super interesting tool when it comes to understanding data in scientific research. Basically, they help you visualize decisions and the possible consequences that come from those choices. Think of them like a map that guides you through a forest of options and outcomes.

One big plus about decision trees is their **interpretability**. You can look at a tree and, without needing a PhD in statistics, get the gist of how different variables affect your decisions. The structure is pretty straightforward: there’s a root node that branches out into various paths, leading to different outcomes. It’s like following a recipe where each step leads you to the next one based on what you choose.

But here’s where it gets kind of tricky. Just because decision trees are easy to understand doesn’t mean they’re always the right choice for every research scenario. Sometimes they can overfit the data, which means they get so specific about the training data that they fail to perform well on new or unseen data. It’s like memorizing answers for an exam but then struggling when faced with unexpected questions.

Also, **evaluating interpretability** involves more than just looking at how pretty the tree looks. It’s essential to consider how well it captures real-life complexities and whether it can generalize findings beyond just the data set you used to build it. So, while these trees can break things down nicely, if they miss out on underlying patterns or interactions between variables, then their usefulness takes a hit.

When using decision trees in scientific contexts, keep these points in mind:

- Validation: Make sure you validate your model on data it never saw during training, for example a held-out test set or cross-validation.

- Simplicity vs complexity: Strike a balance between an understandable model and one that accurately reflects reality.

- Feature importance: Use decision trees to highlight which variables matter most.
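To see the first two points in action, here is a minimal sketch that grows a tree on one numeric feature and compares a depth-limited tree against a fully grown one on held-out data. Everything in it is an assumption for illustration: the sunlight-hours data is made up (with two deliberately noisy training labels), and the `build`, `predict`, and `accuracy` helpers are a toy learner, not any library’s API.

```python
def misclassified(rows):
    """Number of rows a majority-vote leaf would get wrong."""
    ones = sum(label for _, label in rows)
    return min(ones, len(rows) - ones)


def majority(rows):
    """Most common label (ties go to 1)."""
    ones = sum(label for _, label in rows)
    return 1 if ones * 2 >= len(rows) else 0


def build(rows, max_depth=float("inf")):
    """Greedily grow a tree on one numeric feature.

    Leaves are 0 or 1; internal nodes are (threshold, left, right) tuples.
    """
    if len({label for _, label in rows}) == 1 or max_depth == 0:
        return majority(rows)
    xs = sorted({x for x, _ in rows})
    best = None
    for lo, hi in zip(xs, xs[1:]):
        t = (lo + hi) / 2  # candidate threshold between neighbouring values
        left = [r for r in rows if r[0] < t]
        right = [r for r in rows if r[0] >= t]
        errors = misclassified(left) + misclassified(right)
        if best is None or errors < best[0]:
            best = (errors, t, left, right)
    if best is None:  # all feature values identical, cannot split
        return majority(rows)
    _, t, left, right = best
    return (t, build(left, max_depth - 1), build(right, max_depth - 1))


def predict(tree, x):
    while isinstance(tree, tuple):
        threshold, left, right = tree
        tree = left if x < threshold else right
    return tree


def accuracy(tree, rows):
    return sum(predict(tree, x) == y for x, y in rows) / len(rows)


# Made-up data: (sunlight hours, grew well?). The labels at 3 and 9 hours
# are deliberate noise that goes against the general pattern.
TRAIN = [(1, 0), (2, 0), (3, 1), (4, 0), (5, 0),
         (7, 1), (8, 1), (9, 0), (10, 1), (11, 1)]
# Held-out data the tree never sees while it is being built.
TEST = [(0.5, 0), (1.2, 0), (2.2, 0), (3.2, 0), (4.5, 0),
        (6.5, 1), (7.2, 1), (8.8, 1), (9.3, 1), (10.5, 1)]

shallow = build(TRAIN, max_depth=1)
deep = build(TRAIN)

for name, tree in [("shallow", shallow), ("deep", deep)]:
    print(name, "train:", accuracy(tree, TRAIN), "test:", accuracy(tree, TEST))
```

The fully grown tree gets every training point right because it carves out tiny branches around the two noisy plants, and then it stumbles on the held-out data. The one-question tree misses those noisy points in training but holds up better on fresh data, which is the simplicity-versus-complexity trade-off in miniature.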

The implication here is huge! When researchers understand both what makes their decision tree work and where it might fail, they’re better equipped to draw meaningful conclusions from their data. Also, involving stakeholders—like patients in medical studies—can help them see why certain decisions were made based on tree outputs.

To wrap it up, evaluating decision trees isn’t just about saying “look at how cool this is!” It’s about ensuring that these tools genuinely help clarify complex problems rather than adding confusion into the mix. The journey through scientific research often feels like wandering through uncharted territory; having clear maps—like decision trees—can be invaluable in navigating those twists and turns!

Alright, let me tell you about decision trees, which, honestly, are kinda cool when you think about it. You know how sometimes you have to make choices based on a bunch of factors? Like deciding what to wear based on the weather, or picking a movie based on your mood? Decision trees do that but for data. They help scientists sort through a ton of information to make sense of things quicker.

The first time I saw a decision tree was during a lab class. My instructor drew one on the board, and it was like watching a flowchart come alive! Each branch represented different choices or outcomes based on the data we had. I remember thinking how it’s like playing “choose your own adventure” – except instead of going on an exciting quest, you’re figuring out the best way to interpret some pretty serious scientific data.

So, how does this whole thing work? Basically, at each node (which is like a decision point), you ask a question that splits your data into smaller groups until you reach an endpoint—a conclusion or prediction. Let’s say you’re looking at plant growth: You might start by asking whether there’s enough sunlight. If yes, you go down one path; if no, another. It’s systematic but also super intuitive!

What’s neat is that decision trees aren’t just for simple questions; they can handle really complex situations too! Like predicting disease outbreaks or understanding climate change impacts. And yeah, while they’re great for seeing the big picture quickly, they can get complicated with lots of branches—kinda like my family tree after my fourth cousin twice removed!

But here’s something to watch out for: sometimes these trees can get overcomplicated and end up being too specific instead of generalizable—like trying to read every page in that “choose your own adventure” book instead of just picking one story. So there’s definitely an art to using them well.

In short, decision trees are tools that let scientists take multifaceted problems and break them down into manageable chunks. They’re not perfect by any means but can be incredibly helpful when navigating through the wild world of scientific data interpretation. It’s just fascinating to see how something so straightforward can lead us down some pretty intricate paths in research!