So, here’s a little story for you. I once tried to sort my massive socks collection. Seriously, it was like walking into a sock museum gone wrong. I had patterns, solids, fuzzy ones, and some that just made me wonder why I even bought them!

The thing is, organizing all those socks feels a lot like tackling data classification. You’ve got all these different types, and you want to make sense of it all. That’s where RBF SVM kicks in—yeah, it’s not just a bunch of jumbled letters.

Imagine having a super smart buddy who helps you group your socks based on color or style without breaking a sweat. That’s what RBF SVM does with data! It’s pretty clever and can tackle tricky classification problems with ease. So let’s unpack this cool tool and see how it makes sense of the chaos!

Exploring Support Vector Machine Applications in Scientific Data Analysis: A Comprehensive Example

So, let’s talk about Support Vector Machines (SVMs). They’re like the cool tool in your science toolbox when it comes to tackling data classification challenges. They help you understand complex data by creating a clear separation between different classes. Think of it as drawing a line in the sand, but in a multi-dimensional space!

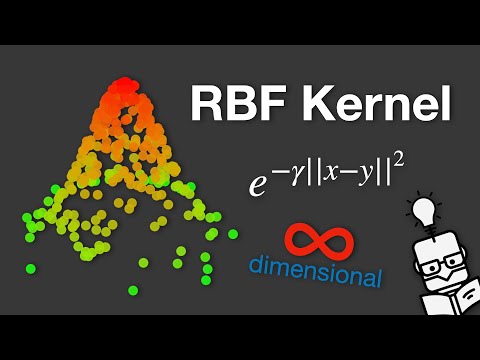

Now, one powerful version is the SVM with a Radial Basis Function (RBF) kernel. The kernel lets the model act as if your data had been lifted into a higher dimension, where it’s easier to find that separating line or hyperplane, without ever computing the new coordinates explicitly. You see, sometimes our data isn’t laid out nicely. It’s all jumbled up and messy. That’s where RBF comes in; it can handle non-linear relationships effectively.

Imagine you’re trying to classify fruits based on some features like color and size. You might have apples and oranges overlapping on a graph—confusing, right? But with RBF SVM, you can twist that graph into another dimension and create a clearer boundary.

Here are some key points about RBF SVM applications:

- Image Classification: It can identify objects in images by classifying features extracted from them, such as pixel intensities or texture descriptors.

- Bioinformatics: Researchers use it for classifying genes or proteins, helping to understand diseases better.

- Finance: Companies use SVMs for credit scoring or fraud detection by analyzing patterns in transaction data.

Now let me give you an example that really brings this home. Picture a doctor trying to identify whether a patient has a certain disease based on symptoms. The data might come from factors like age, weight, blood pressure levels—all mixed together without any clear pattern. Using RBF SVM, they can separate healthy patients from those needing treatment by finding the optimal boundaries based on complex relationships between these variables.

What’s more amazing is how flexible RBF is! Depending on the parameters chosen for the model (like the width of the radial basis function), you can tweak how far the influence of each training point reaches: a wide kernel gives smooth, broad boundaries, while a narrow one lets the boundary hug individual points. This adaptability helps researchers home in on what matters most in their specific scenarios.
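To make that “width” idea concrete, here’s a minimal sketch in plain Python. The function name `rbf_similarity` and the two sample points are made up for illustration, and the width is expressed through the coefficient γ (`gamma`), where a larger value means a narrower kernel.

```python
import math

def rbf_similarity(x, y, gamma):
    """RBF kernel value: exp(-gamma * squared Euclidean distance)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * sq_dist)

# Two hypothetical points; their squared distance is 1 + 4 = 5.
a = (1.0, 2.0)
b = (2.0, 4.0)

for gamma in (0.01, 0.1, 1.0):
    print(f"gamma={gamma}: similarity={rbf_similarity(a, b, gamma):.4f}")
# gamma=0.01: similarity=0.9512
# gamma=0.1: similarity=0.6065
# gamma=1.0: similarity=0.0067
```

With a small γ the two points still look like close neighbors to the model; with a large γ they barely influence each other, which is what lets the boundary get so wiggly.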

In summary, exploring Support Vector Machines—especially with the Radial Basis Function—is like having a versatile friend who helps untangle complicated problems in scientific data analysis. Whether you’re sorting through images or making sense of health-related information, RBF SVM has got your back!

Exploring Support Vector Machine Techniques: Comprehensive PDF Guide for Scientific Research and Applications

Support Vector Machines (SVM) are like trusty sidekicks in the world of data classification challenges. They help you separate different classes in your data by finding the best boundary, or “hyperplane,” that divides them. It’s pretty neat when you think about it!

Now, RBF SVM, which stands for Radial Basis Function Support Vector Machine, takes this idea a step further. The RBF kernel is super popular because it can handle complex relationships in data that’s not linearly separable. You know those messy datasets where things overlap? RBF shines there!

What happens is that the RBF kernel maps your data into a higher-dimensional space, where it becomes easier to find that perfect hyperplane. It’s like having a magic box that transforms your jumbled mess into a neatly arranged scenario. So you can actually separate your classes more effectively.
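If you’d like to see what’s inside the magic box, the RBF kernel is usually written like this (the symbols are the standard textbook ones, not something defined earlier in this post):

$$
K(x, x') = \exp\!\left(-\gamma\,\lVert x - x'\rVert^2\right), \qquad \gamma > 0
$$

Here $x$ and $x'$ are two data points and $\gamma$ controls how quickly similarity fades with distance. Identical points score 1, and points far apart score close to 0. Some texts write it with a width $\sigma$ instead, using $\gamma = 1/(2\sigma^2)$.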

Here are some key points about RBF SVM:

- Flexibility: It adapts well to both linear and non-linear data.

- Sensitivity to Parameters: The accuracy of an RBF SVM relies heavily on two parameters: the regularization value C and the kernel coefficient γ, which is inversely related to the kernel width (a larger γ means a narrower kernel). These need careful tuning.

- Applications: From image recognition to spam detection, RBF SVM has proven its worth across numerous domains.

- Simplicity in Implementation: Most programming languages have libraries ready for SVMs, so setting one up isn’t rocket science!
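Here’s roughly what that tuning can look like. This is a sketch, not a recipe: it assumes Python with scikit-learn (my pick, the post doesn’t commit to a library), uses a synthetic two-moons dataset, and the grid values for C and γ are arbitrary starting points.

```python
from sklearn.datasets import make_moons
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic, non-linearly separable data (stand-in for a real dataset).
X, y = make_moons(n_samples=300, noise=0.25, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Scale features first, then fit an RBF-kernel SVM.
pipeline = make_pipeline(StandardScaler(), SVC(kernel="rbf"))

# Illustrative grid; make_pipeline names the SVC step "svc".
param_grid = {
    "svc__C": [0.1, 1, 10, 100],
    "svc__gamma": [0.01, 0.1, 1, 10],
}

search = GridSearchCV(pipeline, param_grid, cv=5)
search.fit(X_train, y_train)

print("best parameters:", search.best_params_)
print("held-out accuracy:", search.score(X_test, y_test))
```

The scaler sits inside the pipeline so that each cross-validation fold is scaled using only its own training portion.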

It’s interesting how practical this stuff really is. I remember a time back in college when my group project involved classifying images of plants based on their species. We had tons of pictures that looked kinda similar but were actually different! Using RBF SVM helped us classify them with decent accuracy after some parameter tuning—you could feel the excitement grow as we saw our model perform better!

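Another cool aspect of SVMs is that the decision boundary depends only on the support vectors, those critical points on or inside the margin on either side of the hyperplane. Maximizing the margin acts as a built-in form of regularization, which often helps the model generalize to new data, and that’s something every researcher wants. It isn’t a guarantee, though: an RBF SVM with a very large C or γ can still overfit.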
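A quick way to check how well a model generalizes is to compare training accuracy with held-out accuracy. The sketch below assumes scikit-learn and a synthetic noisy dataset, and the two γ values are deliberately extreme picks for illustration. It shows how a very narrow kernel can widen the gap between the two scores.

```python
from sklearn.datasets import make_moons
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC

# Noisy synthetic data, split half for training and half for testing.
X, y = make_moons(n_samples=400, noise=0.3, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.5, random_state=0
)

gaps = {}
for gamma in (1.0, 1000.0):  # moderate kernel vs. very narrow kernel
    clf = SVC(kernel="rbf", C=1.0, gamma=gamma).fit(X_train, y_train)
    train_acc = clf.score(X_train, y_train)
    test_acc = clf.score(X_test, y_test)
    gaps[gamma] = train_acc - test_acc
    print(f"gamma={gamma}: train={train_acc:.2f}, test={test_acc:.2f}")
```

A big drop from training to test accuracy is the classic sign that the model has memorized the training points instead of learning the overall shape.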

To wrap things up, think about why you might want to explore Support Vector Machine techniques like RBF. It’s powerful for tackling classification tasks where traditional models may fail due to complexity or non-linearity in the data distribution.

Overall, whether you’re working in academia or industry, understanding these tools can give you an edge in making informed decisions based on your data insights!

Understanding Support Vector Machines: A Detailed Example in Scientific Data Analysis

Alright, so let’s chat about Support Vector Machines, or SVMs for short. This technique is pretty powerful when it comes to classifying data, especially in scientific fields where you want to make sense of messy information. It might sound a bit technical, but I’ll break it down for you.

Imagine you’re trying to separate two types of fruits: apples and oranges. You have tons of data points—like size, weight, and color—and you want to draw a line (or a boundary) that best separates these two categories. That’s where SVM comes into play! It finds that line in a way that maximizes the distance from the closest apple and orange to this line.
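For the curious, “maximizing the distance” has a compact textbook form. The notation below is the standard soft-margin formulation, not something specific to this post: $x_i$ is a fruit’s feature vector, $y_i \in \{-1, +1\}$ is its label (say, apple or orange), and $\xi_i$ measures how far a fruit strays onto the wrong side of its margin.

$$
\min_{w,\,b,\,\xi}\ \tfrac{1}{2}\lVert w\rVert^2 + C\sum_{i=1}^{n}\xi_i
\quad\text{subject to}\quad
y_i\,(w^\top x_i + b) \ge 1 - \xi_i,\quad \xi_i \ge 0
$$

The margin is $2/\lVert w\rVert$ wide, so shrinking $\lVert w\rVert$ widens it, while $C$ sets how heavily margin violations are penalized.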

Now, here’s where it gets interesting: Sometimes the data isn’t just laid out nicely on a flat surface; it can be all mixed up in three-dimensional space or more complicated shapes. What if you can’t just draw a straight line? Well, SVM can handle this too! The technique uses something called the kernel trick.

So, let’s say we decide to use the Radial Basis Function (RBF) kernel—a fancy term for a method that helps us deal with non-linear data structures. This kernel basically transforms our original feature space into a higher dimension where it’s easier to separate our apples from oranges with a flat surface.

Here’s how it works:

- Transforming Data: The RBF kernel takes each fruit’s features and maps them onto a new space. Think of bending reality so apples and oranges are far apart!

- Identifying Support Vectors: Out of all your data points, some are closer to the boundary than others—these are your support vectors. They’re critical because they help define your classification margin.

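- Maximizing Margin: The goal is to find that optimal boundary which keeps as far away from these support vectors as possible while still classifying the fruits correctly. In practice a soft margin, controlled by the C parameter, lets a few points land on the wrong side when the classes overlap.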
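You can poke at those three steps in code. The sketch below assumes scikit-learn and synthetic “fruit” blobs, with arbitrary values for `gamma` and `C`. It fits an RBF SVM, counts the support vectors, and then rebuilds the decision score by hand from the support vectors alone.

```python
import numpy as np
from sklearn.datasets import make_blobs
from sklearn.metrics.pairwise import rbf_kernel
from sklearn.svm import SVC

# Two overlapping synthetic clusters standing in for apples and oranges.
X, y = make_blobs(n_samples=100, centers=2, cluster_std=1.5, random_state=0)

gamma = 0.5  # arbitrary illustrative value
clf = SVC(kernel="rbf", C=1.0, gamma=gamma).fit(X, y)

print("training points:", len(X))
print("support vectors:", len(clf.support_vectors_))

# Rebuild the decision score for a few points using only the support vectors:
# score(x) = sum_i dual_coef_i * K(sv_i, x) + intercept
K = rbf_kernel(X[:5], clf.support_vectors_, gamma=gamma)
manual = K @ clf.dual_coef_.ravel() + clf.intercept_[0]

print("matches decision_function:", np.allclose(manual, clf.decision_function(X[:5])))
```

The sign of each score tells you which side of the boundary a point falls on, and none of the non-support points appear anywhere in the calculation.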

Let me share an example for clarity: Say you collected measurements of various fruits and plotted them out on graph paper. You could end up with clusters that look like messy blobs instead of neat piles. With RBF SVM, we can create curves instead of lines! It wraps around the clusters so effectively that even if they look intertwined at first glance, we’d still know how to classify new fruits based on their features.
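Here’s a small sketch of the “curves instead of lines” idea, again assuming scikit-learn. The data is a synthetic ring-inside-a-ring, which no straight line can split, and the same classifier is fitted once with a linear kernel and once with RBF.

```python
from sklearn.datasets import make_circles
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC

# One class forms an inner ring, the other an outer ring.
X, y = make_circles(n_samples=400, factor=0.4, noise=0.08, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

scores = {}
for kernel in ("linear", "rbf"):
    clf = SVC(kernel=kernel).fit(X_train, y_train)
    scores[kernel] = clf.score(X_test, y_test)
    print(f"{kernel} kernel: test accuracy = {scores[kernel]:.2f}")
```

On data like this the straight-line model has little to work with, while the RBF version can wrap its boundary around the inner ring.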

One time I worked on analyzing gene expression data using SVMs—it was fascinating! Each gene’s expression level could be thought of as another dimension in our dataset. By using RBF kernels, I was able to classify different cancer types based on their genetic profiles with reasonable accuracy.

In summary:

- SVMs are robust classifiers: They work great in high dimensions.

- The RBF kernel allows flexibility: Perfect for non-linear relationships.

- Support vectors are key players: They determine our decision boundaries!

So yeah, understanding SVMs and especially RBF kernels can really help pave the way for tackling complex datasets in science and beyond! Just remember it’s all about finding that best separating boundary—no matter how twisted or turned your data might be!

So, let’s talk about RBF SVM, or Radial Basis Function Support Vector Machine. Yeah, it sounds super technical, but stick with me here! It’s one of those things in the world of data science that can seem intimidating at first but really packs a punch when you understand it.

Imagine you’re trying to organize your closet. You’ve got a mix of clothes: t-shirts, jeans, jackets—everything’s jumbled together. Now, if you just shove everything into one corner, good luck finding what you need! That’s basically how data works too. When you have tons of information swirling around—like customer preferences or images—you need a smart way to sort through it all.

This is where RBF SVM comes in. It’s like having a super-sophisticated friend who loves organizing things by finding patterns and making sense of the chaos. What happens is that it uses something called a “kernel function.” Think of this as a magic trick to transform your data into another dimension so it can easily separate different categories. If you had to visualize it, imagine pushing and stretching the space where your clothes hang so that t-shirts and jeans are on opposite sides—all neat and tidy!

I remember once playing around with some messy data from my old job. My task was to categorize feedback from customers about our products—some loved them, others… not so much. Initially, I was at my wit’s end until I stumbled upon RBF SVM. With just a few tweaks here and there in my code, bam! Suddenly I could see clear patterns emerging from all that noise, like those adorable shirts on one side and those funky pants on the other.

But hey, let’s not sugarcoat this whole deal too much; it’s not always sunshine and rainbows. Sometimes choosing the right parameters can feel like trying to find the right key for a lock that doesn’t even fit perfectly! And yeah, if you’ve got a huge dataset (training time grows faster than linearly with the number of samples) or it’s noisy (like people throwing random clothes into your closet), even an RBF SVM can struggle.

Yet what I love about this approach is its adaptability; whether you’re dealing with binary classes or multiple ones, the RBF SVM flexes its muscles pretty well across various scenarios. Wanna classify emails as spam or not? Check! Need to identify different species based on measurements? You bet!
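For the species case, here’s a minimal multi-class sketch. It assumes scikit-learn and its bundled iris dataset (three species, four measurements each), and the parameter values are just the library defaults written out. scikit-learn’s `SVC` handles more than two classes on its own by training one-vs-one classifiers behind the scenes.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Three iris species described by four measurements per flower.
X, y = load_iris(return_X_y=True)

# Scale the measurements, then fit an RBF-kernel SVM.
model = make_pipeline(StandardScaler(), SVC(kernel="rbf", C=1.0, gamma="scale"))

# 5-fold cross-validation gives a less optimistic estimate than a single split.
scores = cross_val_score(model, X, y, cv=5)
print("mean accuracy:", scores.mean())
```

The same few lines work for a two-class problem like spam versus not-spam; only the data changes.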

In short—and without getting overly fancy—RBF SVM is one powerful tool in our arsenal for tackling data classification challenges. It’s fascinating how mathematics transforms real-life problems into something manageable and understandable. So next time you’re faced with heaps of disorganized information remember: there are tools out there ready to help make sense out of chaos!