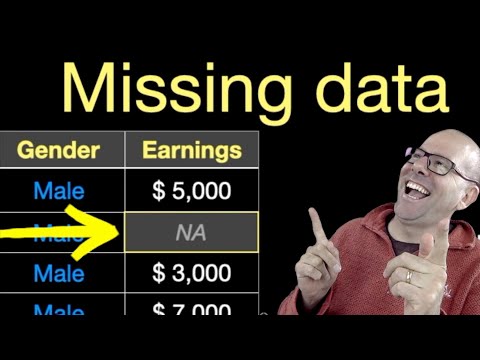

So, picture this: you’re working on this super exciting research project, and everything’s going great. You’ve got your hypothesis, your data collection all lined up, and then bam! Half your data is MIA. Seriously, it feels like the universe just pulled a prank on you.

Missing data happens to the best of us. It’s like that one sock that always disappears in the laundry—you swear it was right there! You start scratching your head, wondering how to deal with those pesky gaps in your research.

But here’s the kicker: it doesn’t have to be the end of the world. Navigating missing data can actually teach you a lot about what you’re studying. Sounds daunting? It’s less scary than it seems. Buckle up; we’re diving into how to handle those gaps like a pro!

Strategies for Addressing Missing Data in Scientific Research Methods: A Comprehensive Guide

So, missing data in research can be a bit of a puzzle, right? You collect all this information, and then—bam!—some parts just aren’t there. It’s like trying to complete a jigsaw puzzle but losing a few pieces along the way. Let’s chat about some strategies that researchers can use to tackle this issue.

First off, understand the types of missing data. There are three big categories here:

- Missing Completely At Random (MCAR): The chance that a value is missing has nothing to do with any variable in the study, observed or unobserved. For example, if someone drops out of a study for an unrelated reason, like moving to another city, that’s MCAR.

- Missing At Random (MAR): The missingness depends on other observed variables, but not on the missing values themselves once those variables are taken into account. Imagine you’re studying how exercise affects weight loss. If younger participants skip weigh-ins more often than older ones, and age is recorded, that’s MAR (as long as, within an age group, skipping has nothing to do with weight).

- Missing Not At Random (MNAR): Here’s the tricky one. It happens when the reason for missing data is related to what’s missing itself. For instance, people with higher weights might be less likely to report their weight.
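To make the three categories concrete, here’s a small Python simulation using only the standard library. Everything in it is hypothetical: the function name `simulate_missingness`, the made-up link between age and weight, and the missingness rates. It compares the mean of the weights that actually got recorded against the mean of all weights under each mechanism.

```python
import random
import statistics

def simulate_missingness(n=10_000, seed=0):
    """Compare the recorded-data mean of weight under MCAR, MAR, and MNAR.

    All numbers are hypothetical: weight rises with age plus random noise.
    """
    rng = random.Random(seed)
    ages = [rng.uniform(18, 70) for _ in range(n)]
    weights = [60 + 0.3 * age + rng.gauss(0, 10) for age in ages]

    def observed_mean(keep_prob):
        # keep_prob(age, weight) -> probability that this weight gets recorded
        kept = [w for a, w in zip(ages, weights) if rng.random() < keep_prob(a, w)]
        return statistics.mean(kept)

    return {
        "true": statistics.mean(weights),
        # MCAR: a flat 30% of weights go missing, no matter what
        "MCAR": observed_mean(lambda a, w: 0.7),
        # MAR: under-35s skip weigh-ins more often; depends only on recorded age
        "MAR": observed_mean(lambda a, w: 0.5 if a < 35 else 0.9),
        # MNAR: heavier people report less often; depends on the weight itself
        "MNAR": observed_mean(lambda a, w: 0.4 if w > 85 else 0.9),
    }

for label, value in simulate_missingness().items():
    print(f"{label:>4}: {value:.2f}")
```

In this setup the MCAR mean should land close to the true mean, the MAR mean drifts upward (younger, lighter people are underrepresented), and the MNAR mean drifts downward. The MAR bias is fixable because age was recorded; the MNAR bias can’t be corrected from the observed data alone without extra assumptions.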

Next up is imputation. This fancy word means filling in those gaps with estimated values. There are different methods for doing this:

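- Mean/Median Imputation: You can just fill in missing values with the mean or median of the observed values for that variable. Easy peasy! But every gap gets the same number, which shrinks the variable’s spread and makes standard errors look smaller than they should be.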

- K-Nearest Neighbors (KNN): This technique finds the k records most similar to the incomplete one, based on the variables it does have, and uses their values to estimate the missing one. It’s like asking friends with similar interests!

- Multiple Imputation: Instead of guessing once, you create several datasets, filling in the gaps differently each time (with some built-in randomness), analyze each one, and then combine the results, usually with Rubin’s rules. It’s kind of like taking multiple shots at a target!
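Here’s a rough sketch of the first two imputation methods in plain Python. The helper names `mean_impute` and `knn_impute` are hypothetical, the tiny age/weight table is made up, and the KNN version assumes the predictor columns are never missing (real libraries handle more cases than this).

```python
import statistics

def mean_impute(values):
    """Replace each None with the mean of the observed values."""
    observed = [v for v in values if v is not None]
    fill = statistics.mean(observed)
    return [fill if v is None else v for v in values]

def knn_impute(rows, target, k=2):
    """Fill missing rows[i][target] with the mean of its k nearest complete rows.

    Distance is Euclidean over the other columns, which are assumed complete.
    """
    complete = [r for r in rows if r[target] is not None]
    result = []
    for row in rows:
        if row[target] is not None:
            result.append(list(row))
            continue

        def distance(other):
            return sum(
                (a - b) ** 2
                for j, (a, b) in enumerate(zip(row, other))
                if j != target
            ) ** 0.5

        neighbors = sorted(complete, key=distance)[:k]
        filled = list(row)
        filled[target] = statistics.mean(n[target] for n in neighbors)
        result.append(filled)
    return result

# Hypothetical [age, weight] records; two weights are missing.
rows = [[20, 60], [22, 62], [45, 80], [47, 82], [21, None], [46, None]]
weights = [r[1] for r in rows]

mean_filled = mean_impute(weights)
knn_filled = [r[1] for r in knn_impute(rows, target=1, k=2)]
print(mean_filled)  # [60, 62, 80, 82, 71, 71]
print(knn_filled)   # [60, 62, 80, 82, 61, 81]

for label, col in [("observed only", [60, 62, 80, 82]),
                   ("mean-imputed", mean_filled),
                   ("KNN-imputed", knn_filled)]:
    print(f"{label}: variance {statistics.pvariance(col):.1f}")
```

The mean-imputed column’s variance drops from 101.0 to 67.3 because both gaps get the same value, while KNN fills them with values close to similar rows, so the spread stays near the original (100.7).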

Another strategy is sensitivity analysis: testing how your results change under different assumptions about the missing data and different ways of handling it. Think of it as trying on different outfits before deciding what works best for you.
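One common way to run a sensitivity analysis is a delta adjustment: fill in the gaps as if the missing values were systematically higher (or lower) than the observed ones by some amount, and see how far that moves your answer. This sketch uses single mean imputation to stay short (real analyses usually pair the delta with multiple imputation), and the helper `delta_adjusted_mean`, the weights, and the deltas are all hypothetical.

```python
import statistics

def delta_adjusted_mean(values, delta):
    """Mean after filling each gap with (observed mean + delta).

    delta = 0 is the 'missing looks like observed' baseline; a positive delta
    encodes an MNAR assumption that non-responders weigh more.
    """
    observed = [v for v in values if v is not None]
    fill = statistics.mean(observed) + delta
    completed = [fill if v is None else v for v in values]
    return statistics.mean(completed)

reported_weights = [60, 62, 80, 82, None, None]  # hypothetical, in kg
for delta in (0, 5, 10):
    estimate = delta_adjusted_mean(reported_weights, delta)
    print(f"delta={delta:>2} kg -> mean {estimate:.2f}")
```

Here the estimate climbs from 71.00 to 74.33 kg as the delta grows. If a plausible delta would flip your conclusion, your findings depend heavily on what you assume about the missing data, and that’s worth reporting.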

Don’t forget about models that handle missing data directly! Some techniques, such as full information maximum likelihood (FIML) in structural equation modeling or mixed-effects regression models for repeated measures, use all the available observations instead of throwing out every case that has a gap.

Finally, communication matters. Your research reports should be crystal clear about how much data was missing, why, and how you handled it. You’re telling a story with your findings; don’t leave out important chapters because they’re difficult!

To wrap things up: dealing with missing data isn’t exactly fun or easy, and it can feel frustrating! But by understanding the types of missingness and using strategies like imputation or sensitivity analysis, you can manage these challenges effectively and keep your research solid.

Just remember: every piece of your scientific story counts!

Strategies for Addressing Missing Data in Scientific Research Methodologies: A Practical Example

So, missing data. It’s like that frustrating puzzle piece that just doesn’t show up, right? You’re working on a project, and suddenly you realize some info is MIA. This can really mess with your research. But don’t worry! There are ways to tackle this head-on.

First off, let’s chat about the types of missing data you might come across. Basically, there are three types:

- Missing Completely at Random (MCAR): The missingness is unrelated to any data, observed or unobserved. For example, a few completed surveys get misplaced by accident, regardless of what was written on them.

- Missing at Random (MAR): The missing values depend on other information you did record, not on the missing values themselves. Imagine a study where younger participants skipped more questions than older ones, and within each age group, skipping had nothing to do with what the answers would have been.

- Not Missing at Random (NMAR, also called MNAR): The reason for the missing data is related to the missing values themselves. For example, people with high incomes may simply not disclose their earnings.

Now that we know the types, let’s talk strategies for handling this issue:

One classic method is **imputation**. This basically means filling in the gaps with estimates based on other data you have. Say you’re studying students’ test scores but some didn’t take a test—maybe because they were sick or something. You could look at their past scores or even their classmates’ results to guess what they might have scored.

Another approach is **multiple imputation**. It’s kind of like taking several educated guesses instead of one stab in the dark. You fill in those gaps multiple times, creating several complete datasets, analyze each one separately, and then combine the results so the final estimate reflects how uncertain those guesses are.

But what if you’d rather not fill in those gaps at all? Consider **maximum likelihood estimation** (MLE). It estimates parameters by finding the values that make your observed data most likely under a statistical model. When the data are MAR, it can use every observed value without imputing anything, basically letting your data do most of the heavy lifting!

And here’s another thought: **weighting**! You can weight responses based on how likely different groups are to respond. For example, if younger people skip questions more often than older folks in a survey, you give the younger people who did answer more weight in the analysis, so their group isn’t underrepresented in the results.
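A minimal sketch of that idea is inverse-probability weighting: each respondent counts 1 / (their group’s response rate) times. The helper name `weighted_mean`, the group labels, and the answers are hypothetical, and the approach assumes that, within a group, the people who answered resemble the people who didn’t.

```python
from collections import defaultdict

def weighted_mean(groups, values):
    """Inverse-probability-weighted mean of a survey item.

    Each respondent gets weight 1 / (response rate of their group).
    """
    invited = defaultdict(int)
    answered = defaultdict(int)
    for g, v in zip(groups, values):
        invited[g] += 1
        if v is not None:
            answered[g] += 1

    num = den = 0.0
    for g, v in zip(groups, values):
        if v is not None:
            w = invited[g] / answered[g]  # 1 / response rate
            num += w * v
            den += w
    return num / den

# Hypothetical survey: hours of exercise per week, by age group.
groups = ["young"] * 4 + ["older"] * 4
answers = [3, None, None, 5, 8, 7, 9, None]

unweighted = sum(a for a in answers if a is not None) / sum(a is not None for a in answers)
print(f"unweighted {unweighted:.2f}")                     # unweighted 6.40
print(f"weighted   {weighted_mean(groups, answers):.2f}")  # weighted   6.00
```

The younger group answered only half the time, so each of their answers counts twice. That pulls the estimate from 6.40 to 6.00, which matches the average of the two group means since both groups are the same size.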

What’s super important is keeping track of why data’s gone AWOL and being transparent about it in your research report! Don’t leave anyone guessing; be clear about how you’ve handled those pesky holes.

A practical example can really clarify these ideas. Let’s say researchers studying dietary habits among teens find that boys were less likely than girls to respond to part of a food frequency questionnaire. Recognizing this response gap and weighting boys’ responses accordingly could help paint a more accurate picture of overall teen eating habits.

So yeah, tackling missing data isn’t fun, but dealing with it thoughtfully can lead to clearer insights and better conclusions down the road!

Addressing Missing Data Issues in Scientific Research: Concepts and Methodologies for Effective Analysis

Missing data issues in scientific research can be like trying to find your way in the dark with only one flickering light. You know there’s something important you’re missing, and it can make a real mess of your analysis. But don’t sweat it! There are ways to tackle this problem.

First off, let’s talk about why missing data happens. It’s not always someone’s fault. Sometimes participants drop out of studies, or some information just wasn’t collected properly. These gaps can bias your results or make them less trustworthy, which is not ideal at all.

Now, when it comes to addressing these gaps, researchers have several strategies to choose from. Here are the main ones:

- Complete Case Analysis: This method uses only the data from participants who have no missing values at all. It sounds simple, but it can throw away a lot of valuable information, and it can introduce bias when the data aren’t MCAR.

- Mean Imputation: In this approach, you replace missing values with the average of the observed values for that variable. It’s easy, but it underestimates variability and can weaken the correlations between variables.

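- Regression Imputation: Here, you predict missing values from other available data using a regression model. It makes use of relationships between variables, but it assumes those relationships hold for every observation, and because the imputed values sit exactly on the regression line, it tends to overstate how strong those relationships are.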

- Multiple Imputation: This technique creates several versions of the dataset, each with the missing values filled in differently, analyzes each version, and then pools the results. It’s like guessing several times instead of just once, and it often gives more reliable estimates.
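Complete case analysis (listwise deletion) is a one-liner, but it can shrink your sample faster than you’d expect. This sketch uses a hypothetical helper `complete_cases`, plus a back-of-the-envelope formula that assumes each of k variables goes missing independently with the same probability p, so the expected share of complete rows is (1 - p)^k.

```python
def complete_cases(rows):
    """Listwise deletion: keep only rows with no missing (None) values."""
    return [r for r in rows if all(v is not None for v in r)]

def expected_complete_fraction(p_missing, n_vars):
    """Expected share of rows that survive listwise deletion, assuming each of
    n_vars variables is missing independently with probability p_missing."""
    return (1 - p_missing) ** n_vars

rows = [[1, 2, 3], [4, None, 6], [7, 8, None], [10, 11, 12]]  # hypothetical
print(len(complete_cases(rows)), "of", len(rows))           # 2 of 4
print(f"{expected_complete_fraction(0.07, 10):.2f}")        # 0.48
```

With just 7% missing per variable across 10 variables, only about 48% of rows would be left for the analysis.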

So what does this mean for you? If you’re conducting research and find yourself knee-deep in missing data, choosing the right method is crucial for making sure your findings are sound.

Let me share a quick story here: a friend of mine was working on a health study that tracked people over time. Some participants dropped out for various reasons (life happens!). Initially, they used complete case analysis and ended up with only half of their original sample! That made their findings less robust, since they were ignoring a lot of useful information just because some folks weren’t around anymore.

Switching to multiple imputation was eye-opening for my buddy. The results? Much better insights into health trends among different demographics, with far less bias than before!

Overall, it’s super important to choose your approach wisely when you’re dealing with missing data in research! Being aware of these methodologies is key—you don’t want those gaps throwing off your whole study or worse yet, leading others down the wrong path with their conclusions.

So remember: there are plenty of ways to deal with data gaps, so explore those methodologies carefully!

You know, missing data can feel like that awkward silence at a party where everyone’s looking around, unsure of what to say. It’s a bit uncomfortable, right? In scientific research, missing data is kind of like that—it can complicate things and leave researchers scratching their heads.

Let me share a little story to highlight this. A while back, I was part of a research project that aimed to study the effects of sleep on anxiety levels in students. We had everything planned out—surveys, interviews, and even some fancy sleep tracking gadgets. But guess what? Some students just didn’t fill out the surveys completely. Others forgot to wear the gadgets some nights. Talk about a headache! We ended up with a chunk of our data missing, and it was stressful trying to figure out what to do.

So, what do researchers do in these situations? They have a few tricks up their sleeves. One common approach is to use statistical methods to handle the missing bits. There are techniques like imputation, which is basically filling in those gaps with estimates based on existing data. It’s kind of like playing detective—trying to piece together the puzzle without having all the pieces! But here’s the catch: if you’re not careful about how you estimate those gaps, you could end up skewing your results pretty seriously.

Another thing that comes into play is transparency. Researchers often have to balance making their findings robust with being honest about their limitations, like when they have to admit that some data is just… gone. That openness can be crucial for others who might want to build on those findings later.

Sometimes it’s also about reflecting on why the data went missing in the first place. Maybe participants were overwhelmed or didn’t understand certain questions? Understanding these reasons can help shape better studies down the line.

In short, navigating through missing data isn’t easy—it involves a mix of analytical thinking and a good dose of humility. You’ve got to be willing to adapt and learn from those unexpected twists and turns along the way because science is all about uncertainty, right? So no matter how frustrating those gaps can be, they often lead us into new questions worth exploring!