Imagine you’re at a dinner party, right? Someone casually mentions they think pineapple on pizza is the best topping ever. You roll your eyes and say, “No way, pepperoni rocks!” Then someone chimes in with a stat about how many people actually prefer pineapple. That’s kinda like what T tests do in science.

You see, T tests are these nifty little tools that help researchers figure out if two things are really different or if it’s just random noise. Like, is there actually a significant difference between two groups? Or are you just getting your wires crossed like at that dinner party?

Getting good at T tests can seriously up your research game. It’s all about making sense of data and drawing solid conclusions—no guesswork needed! So, let’s dig into why they’re kind of a big deal in scientific research. Sound good?

The Crucial Role of T-Tests in Scientific Research: Understanding Statistical Significance and Data Analysis

So, let’s chat about T-tests, shall we? You know, those nifty little statistical tools that help researchers figure out if their findings are legit or just random noise. Seriously, they’re kinda the backbone of data analysis in scientific research.

What’s a T-test? Well, it’s a statistical test used to compare the means of two groups. Imagine you want to see if a new medication actually helps lower blood pressure compared to a placebo. A T-test can tell you whether the difference in blood pressure between these two groups (the ones taking the medication and the ones taking the placebo) is significant or if it just happened by chance.

Now, there are a few types of T-tests out there:

- Independent T-test: Used when comparing two different groups. Like if you wanted to compare test scores between two classes.

- Paired T-test: This one’s for when you’re dealing with the same group at different times. Think before-and-after scenarios.

- One-sample T-test: This compares the mean of one group against a known value. For example, checking if the average height of students in your school is different from the national average.
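If you want to see what those three flavors look like in practice, here is a minimal sketch using SciPy. All of the numbers are randomly generated stand-ins (the class scores, the before-and-after readings, and the `NATIONAL_AVERAGE_CM` value are made up for illustration), so treat it as a template, not as real results.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(seed=0)  # fixed seed so the sketch is reproducible

# Made-up data, for illustration only.
class_a = rng.normal(loc=75, scale=10, size=30)        # test scores, class A
class_b = rng.normal(loc=80, scale=10, size=30)        # test scores, class B
before = rng.normal(loc=140, scale=12, size=25)        # readings before treatment
after = before - rng.normal(loc=5, scale=4, size=25)   # same people, afterwards
heights = rng.normal(loc=170, scale=8, size=40)        # student heights in cm
NATIONAL_AVERAGE_CM = 168.0                            # hypothetical reference value

# Independent t-test: two separate groups.
independent = stats.ttest_ind(class_a, class_b)
# Paired t-test: the same group measured twice.
paired = stats.ttest_rel(before, after)
# One-sample t-test: one group against a known value.
one_sample = stats.ttest_1samp(heights, popmean=NATIONAL_AVERAGE_CM)

for name, result in [("independent", independent),
                     ("paired", paired),
                     ("one-sample", one_sample)]:
    print(f"{name}: t = {result.statistic:.2f}, p = {result.pvalue:.4f}")
```

Each call returns a result with a `statistic` and a `pvalue`, so the rest of your analysis can look the same no matter which variant you picked.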

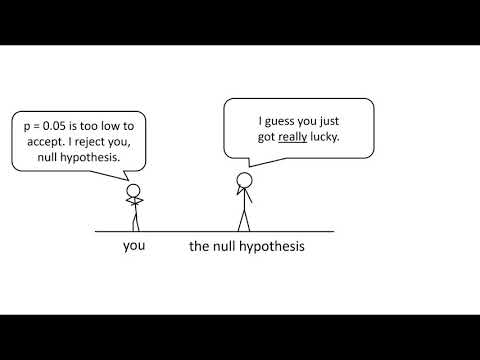

One thing that really blows my mind is how essential these tests are for establishing statistical significance. Basically, when scientists run experiments, they want to make sure what they found isn’t just luck, like finding a $20 bill on the sidewalk that one time! A common threshold for significance is p < 0.05. If your p-value falls below this number after running a T-test, it means that if there were truly no difference between the groups, you’d see a result at least this extreme less than 5% of the time. That’s not quite the same as a 5% chance that your finding is a fluke, but it’s decent evidence against the “no difference” explanation!
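One way to get a feel for the 0.05 threshold is to simulate a pile of experiments where there is genuinely no difference and count how often the test cries wolf anyway. The sketch below does that with made-up data; the number of experiments, the group size, and the seed are arbitrary choices of mine.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(seed=1)
N_EXPERIMENTS = 2000   # how many pretend studies we run
GROUP_SIZE = 30        # people per group in each study
ALPHA = 0.05           # the usual significance threshold

# Both groups come from the SAME distribution, so any "difference" is pure noise.
group_a = rng.normal(loc=0.0, scale=1.0, size=(N_EXPERIMENTS, GROUP_SIZE))
group_b = rng.normal(loc=0.0, scale=1.0, size=(N_EXPERIMENTS, GROUP_SIZE))

# One independent t-test per row (per pretend study).
p_values = stats.ttest_ind(group_a, group_b, axis=1).pvalue

false_positive_rate = float(np.mean(p_values < ALPHA))
print(f"Share of no-effect studies with p < {ALPHA}: {false_positive_rate:.3f}")
```

The share should land close to 0.05, which is what the threshold actually controls: how often you get fooled when nothing is going on.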

Let me tell you about my friend who studies sleep patterns. She once did an experiment comparing how well people sleep with and without their phones in bed. After collecting data and running a paired T-test, she discovered that folks slept significantly better without their phones—like, who would’ve thought? This type of analysis helped her show that maybe we should all consider ditching our devices before hitting snooze.

But here’s where things get tricky: Misusing or misinterpreting T-tests can lead to some misleading conclusions! So it’s super important to make sure your data meets certain assumptions—like normality (which means our data should roughly follow a bell curve) and homogeneity of variance (where both groups have similar variances). If these aren’t met? Well, it could skew your results.
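Here is a rough sketch of how you might check those two assumptions before trusting the result. It uses the Shapiro-Wilk test for normality and Levene's test for equal variances, and it falls back to Welch's version of the t-test (`equal_var=False`) when the variances look different. The data is made up, and the 0.05 cutoff for the checks is just a common convention, not a rule.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(seed=2)

# Made-up data: group_b is deliberately much more spread out than group_a.
group_a = rng.normal(loc=50, scale=5, size=40)
group_b = rng.normal(loc=53, scale=15, size=40)

# Normality check: a small p-value suggests the data is NOT bell-shaped.
shapiro_a = stats.shapiro(group_a)
shapiro_b = stats.shapiro(group_b)

# Equal-variance check: a small p-value suggests the spreads differ.
levene = stats.levene(group_a, group_b)
equal_variances = bool(levene.pvalue >= 0.05)

# Student's t-test if the variances look similar, Welch's t-test otherwise.
result = stats.ttest_ind(group_a, group_b, equal_var=equal_variances)

print(f"Shapiro p-values: {shapiro_a.pvalue:.3f}, {shapiro_b.pvalue:.3f}")
print(f"Levene p-value: {levene.pvalue:.4f} (equal variances: {equal_variances})")
print(f"t = {result.statistic:.2f}, p = {result.pvalue:.4f}")
```

Some people skip the variance check and just use Welch's test every time, since it behaves well either way. That is a judgment call.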

Also, don’t forget about sample size! Small samples can lead to unreliable results because they might not represent the entire population accurately. If you’re testing something with only five people in each group? You might want to think twice; it could be like trying to gauge everyone’s favorite pizza topping by asking just your family.
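To put a number on the five-people-per-group worry, here is a small simulation sketch. It assumes a real difference of half a standard deviation between the groups (my choice, purely for illustration) and estimates how often a t-test actually catches it at different group sizes. The helper name `estimated_power` is hypothetical.

```python
import numpy as np
from scipy import stats

def estimated_power(group_size, true_difference=0.5, n_experiments=2000,
                    alpha=0.05, seed=3):
    """Share of simulated studies where the t-test detects a real difference."""
    rng = np.random.default_rng(seed)
    group_a = rng.normal(0.0, 1.0, size=(n_experiments, group_size))
    group_b = rng.normal(true_difference, 1.0, size=(n_experiments, group_size))
    p_values = stats.ttest_ind(group_a, group_b, axis=1).pvalue
    return float(np.mean(p_values < alpha))

for n in (5, 20, 80):
    print(f"{n} per group: real effect detected in {estimated_power(n):.0%} of studies")
```

With tiny groups the test misses the real effect most of the time, which is exactly why asking just your family about pizza toppings doesn't settle much.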

So yeah, in summary: T-tests are super valuable for scientists trying to decipher their data and draw meaningful conclusions from experiments! They help us move from guesswork to knowledge with solid statistical backing. And given how much research shapes our world, from medicines we take to policies we support, it really drives home how important getting this right is!

Understanding the Purpose of the T-Test in Scientific Research and Data Analysis

So, the t-test! It might sound a bit intimidating at first, but here’s the deal: it’s actually a super handy statistical tool that scientists use to figure out if there are significant differences between two groups. Like, if you’ve got one group of people who did yoga and another who didn’t, you want to know if their stress levels are really different or if it’s just random chance. You follow me?

First off, there are a few different types of t-tests. Each one has its own purpose—but they’re all about comparing means. Let’s break them down a bit:

- Independent Samples T-Test: This is like comparing apples and oranges, but in the right context! Imagine you have two separate groups—one gets a new teaching method while the other sticks with the old way. Here’s where the t-test steps in to see if the average outcomes differ enough to warrant attention.

- Paired Samples T-Test: Picture this: you test the same group twice, maybe before and after some intervention. This test checks whether the average change within each person is far enough from zero to count as statistically significant.

- One-Sample T-Test: Now this one’s simpler: you’re looking at just one group against a known value. Like testing whether your class average differs from last year’s average exam score.
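Under the hood, these tests all boil down to the same idea: take a difference in means and divide it by how uncertain that difference is. Here is a bare-bones sketch of the one-sample and paired versions using only Python's standard library. The function names, the scores, and `LAST_YEAR_MEAN` are all made up for illustration.

```python
import math
import statistics

def one_sample_t(values, reference_mean):
    """t = (sample mean - reference value) / standard error of the mean."""
    standard_error = statistics.stdev(values) / math.sqrt(len(values))
    return (statistics.mean(values) - reference_mean) / standard_error

def paired_t(before, after):
    """A paired test is a one-sample test on the per-person differences."""
    differences = [b - a for b, a in zip(before, after)]
    return one_sample_t(differences, 0.0)

# Hypothetical exam scores and a hypothetical benchmark from last year.
scores = [72, 85, 78, 90, 66, 81, 77, 88]
LAST_YEAR_MEAN = 75.0

print(f"one-sample t = {one_sample_t(scores, LAST_YEAR_MEAN):.2f}")
print(f"paired t     = {paired_t([1, 2, 4], [0, 0, 1]):.2f}")
```

Turning a t value into a p-value takes one more step (looking it up against the t distribution with n - 1 degrees of freedom), which is the part a stats library normally handles for you.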

Now, why should we care? Well, data analysis isn’t just number crunching; it’s about making sense of what those numbers mean for real-life situations. For instance, let’s say researchers look into whether a new medication helps reduce symptoms of anxiety more than an existing one. They’d use a t-test to analyze symptom scores from both groups and let us know if that new med is actually worth considering.

But here’s where it gets interesting! When you run a t-test, you’re not just spitting out numbers; you’re assessing “significance.” In statistical lingo, when something is “significant,” it basically means a difference that big would be unlikely to show up from random chance alone if nothing real were going on. It’s evidence that something real is happening in your data, not ironclad proof.

Now let me tell you about something I noticed during college. I had this stats professor who said every analysis needs context—true story! He showed us how two datasets could look similar on paper but when we ran tests like t-tests with proper interpretations? Boom! We discovered key differences that influenced real-world outcomes in healthcare policies. It was eye-opening!

To sum up (well, sort of), the t-test is crucial for making those informed decisions based on data. Whether you’re looking at how well students perform with different teaching methods or evaluating medical treatments—this test helps unveil what’s worth digging deeper into or maybe even changing altogether.

So next time someone tosses around terms like “t-test,” remember: it’s all about understanding whether those differences we see are meaningful or just coincidental blips on our radar!

Interpreting Statistically Significant t-Test Results in Scientific Research: Conclusions and Implications

The t-test is like the secret handshake of statistics in scientific research. It helps us figure out if the difference between two groups is real or just a fluke. When you see those *p-values*, it’s like getting the VIP pass to understanding your data.

First off, let’s tackle what that “statistically significant” label means. If you run a t-test and get a p-value lower than 0.05, it signals that a difference at least as large as yours would turn up less than 5% of the time if the two groups were actually the same. So, in simple terms, you’ve got reasonable evidence that there’s an actual difference between your groups.

One key point to remember is that statistical significance doesn’t always mean practical significance. Just because your test shows something significant doesn’t automatically make it super important in real life. For example, if you’re studying how two different diets affect weight loss and find a tiny difference in pounds lost between them, it might not change how people eat every day.
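A quick way to see the gap between statistical and practical significance is to pair the p-value with an effect size. The sketch below uses Cohen's d (the difference in means divided by the pooled standard deviation), which is one common choice among several. The diet data is simulated, and the huge sample size is deliberate.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(seed=4)

# Made-up weight loss in pounds: diet B is only 0.2 lb better on average.
diet_a = rng.normal(loc=5.0, scale=3.0, size=20000)
diet_b = rng.normal(loc=5.2, scale=3.0, size=20000)

def cohens_d(x, y):
    """Difference in means measured in units of the pooled standard deviation."""
    pooled_variance = (
        (len(x) - 1) * np.var(x, ddof=1) + (len(y) - 1) * np.var(y, ddof=1)
    ) / (len(x) + len(y) - 2)
    return float((np.mean(y) - np.mean(x)) / np.sqrt(pooled_variance))

result = stats.ttest_ind(diet_a, diet_b)
effect_size = cohens_d(diet_a, diet_b)

print(f"p = {result.pvalue:.2e}")          # tiny p-value thanks to the big sample
print(f"Cohen's d = {effect_size:.3f}")    # but the effect itself is very small
```

With enough participants, even a difference nobody would notice on a bathroom scale comes out “significant,” which is why the effect size deserves a look too.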

Interpreting t-test outcomes also requires looking at confidence intervals. These intervals give you a range of plausible values for the true effect; if you repeated the study many times, about 95% of the 95% intervals you computed would contain it. A narrow interval shows precision; a wider one? Well, maybe not so much clarity there.
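Here is a sketch of how a 95% confidence interval for a difference in means can be computed by hand, so you can watch it tighten as the sample grows. It uses the Welch approach (no equal-variance assumption), which is my choice for the example. The helper `mean_difference_ci` and the data are hypothetical.

```python
import numpy as np
from scipy import stats

def mean_difference_ci(x, y, confidence=0.95):
    """Welch-style confidence interval for mean(x) - mean(y)."""
    x, y = np.asarray(x, dtype=float), np.asarray(y, dtype=float)
    var_x, var_y = x.var(ddof=1) / len(x), y.var(ddof=1) / len(y)
    standard_error = np.sqrt(var_x + var_y)
    # Welch-Satterthwaite approximation for the degrees of freedom.
    dof = (var_x + var_y) ** 2 / (
        var_x ** 2 / (len(x) - 1) + var_y ** 2 / (len(y) - 1)
    )
    margin = stats.t.ppf((1 + confidence) / 2, dof) * standard_error
    difference = x.mean() - y.mean()
    return float(difference - margin), float(difference + margin)

rng = np.random.default_rng(seed=5)
for n in (10, 1000):
    treated = rng.normal(loc=12.0, scale=4.0, size=n)   # made-up outcome scores
    control = rng.normal(loc=10.0, scale=4.0, size=n)
    low, high = mean_difference_ci(treated, control)
    print(f"n = {n:4d} per group: 95% CI for the difference = ({low:.2f}, {high:.2f})")
```

The small-sample interval comes out much wider than the large-sample one, even though both are drawn from the same made-up populations.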

Oh, and let’s not forget about sample sizes! With a small group, your estimates bounce around a lot from one sample to the next, so a real effect can easily be missed and a lucky draw can look impressive. Larger samples tend to smooth these bumps out and give you more reliable insights about your population, kind of like having more friends means spreading out the drama!

Another important aspect is understanding the assumptions behind t-tests:

- Normality: Your data should be roughly normally distributed.

- Independence: The samples being tested need to be independent from each other.

- Homogeneity of variance: The variances in both groups should be similar.

If these assumptions don’t hold up? Well, your results might not be as trustworthy as you’d hope.

So why are t-tests so crucial? They help researchers make informed decisions based on data rather than gut feelings or guesswork. For example, let’s say you’re testing a new drug against a placebo; using t-tests helps check whether the drug does more than just make people think it works.

In summary: applying and interpreting t-tests correctly gives researchers powerful tools for drawing reliable conclusions from their data. It can pave the way for future studies and innovations by providing clear evidence about whether treatments work or whether further investigation is needed.

But always keep in mind: stats can tell us things but they don’t give us all the answers. The implications of findings are often broad and deeply intertwined with context—like how those differences play out in real-world scenarios! So while numbers are great companions on this journey of discovery, they’re just part of the story we’re trying to tell through research.

You know, when you start digging into the world of scientific research, you hit some pretty interesting stuff. One of those things is the T test. It sounds so formal and intimidating, right? But it’s really just a tool that helps researchers figure out if two groups are different from one another. Imagine you’re at a party and you’re trying to see if people like pizza more than tacos. You gather a group of friends and ask them to rate each one from 1 to 10. If pizza gets the higher average rating, cool! But how do you know if that’s just a coincidence or if pizza is actually the winner? That’s where the T test comes in.

I remember this one time back in college when my buddy Sam made a bet about whether studying with music was better than studying in silence. We gathered our group, split into two teams: one with tunes blasting and the other all zen and quiet. After cramming for finals, we took our tests and compared scores. Just like that party game, we wanted to know if the music really helped us study better or not.

The T test helped us see if there was enough evidence to back Sam’s theory or not. It takes into account how much variation there is in our scores and helps determine whether any difference we noticed was real or just random chance messing with us. This little tool packs quite a punch in research!
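To show why that variation matters so much, here is a tiny sketch with invented scores (not the real ones from the bet). Both scenarios have the exact same 5-point gap between the music and silence groups, but the scores are tightly clustered in one and all over the place in the other. The `welch_t` helper is a hypothetical name for a hand-rolled t statistic.

```python
import math
import statistics

def welch_t(a, b):
    """Difference in means divided by its standard error (no pooling)."""
    standard_error = math.sqrt(
        statistics.variance(a) / len(a) + statistics.variance(b) / len(b)
    )
    return (statistics.mean(a) - statistics.mean(b)) / standard_error

# Invented exam scores: each pair of groups averages 80 vs. 75.
music_tight, silence_tight = [78, 80, 82, 79, 81], [73, 75, 77, 74, 76]
music_spread, silence_spread = [60, 95, 70, 100, 75], [55, 90, 65, 95, 70]

print(f"tight scores:  t = {welch_t(music_tight, silence_tight):.2f}")
print(f"spread scores: t = {welch_t(music_spread, silence_spread):.2f}")
```

Same gap, very different t values: when scores are scattered, a 5-point difference is easy to get by luck, so the test is far less impressed.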

Using T tests can make results feel more legit. If they show there’s a significant difference between two groups—like those who studied with music versus those who didn’t—it gives researchers confidence to say, “Hey! This isn’t just fluke data.” It’s like having that friend who always calls it like it is; you trust their opinion because they back it up with solid evidence.

But there’s something kind of human about it too. Think about how often people jump to conclusions without actually analyzing information properly! The T test reminds us that researchers are human too, and that each study needs a thorough examination before anyone makes wild claims.

In scientific circles, this kind of reasoning makes your findings stronger and helps avoid misunderstandings down the line. Plus, it opens up conversations for others to explore similar questions or challenges! Just picture all those students getting together after their final exams comparing notes again—now they can use T tests to see who really studied better!

So yeah, while it may sound all mathy and technical at first glance, T tests are incredibly useful tools behind many scientific discoveries. They help us get closer to the truth by pinning down differences between groups in a reliable way—and we could all use that clarity now and then!