You know that feeling when you’re at a party, and someone brings up the whole “chicken or the egg” debate? Like, it’s funny how people get really into that stuff. Well, in science, we’ve got our own version of that – it’s called hypothesis testing.

Imagine this: you’re convinced your dog understands you when you talk to him. You tell him “sit” and he does! But how do you prove it? That’s where hypothesis testing comes in. It’s like a game where you toss ideas around to see if they can hold water.

In science, this kind of testing is everything! The way scientists tackle it can be pretty different too, which adds another layer of fun. Some like to go by the book while others are more laid-back about it. So let’s dig into these approaches and see what they’re all about!

Exploring Approaches to Hypothesis Testing in Scientific Research: A Comprehensive Overview

Let’s start with the basics of hypothesis testing in science. It’s kind of a big deal because it helps researchers figure out whether their ideas hold water.

So, what is hypothesis testing? Well, imagine you have a hypothesis that says “the sun rises in the east.” To test it, you’d gather evidence, like watching the sunrise every morning. If that pattern holds consistently, the evidence supports your hypothesis (it never strictly proves it). If not? Well, maybe it needs some tweaking.

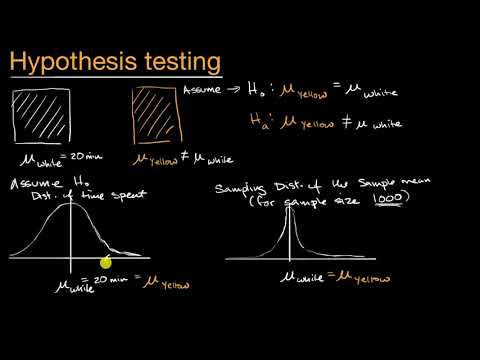

When scientists design experiments or studies, they usually start with two types of hypotheses: the null hypothesis (H0) and the alternative hypothesis (H1). The null hypothesis is basically saying “there’s no effect” or “nothing special is going on,” while the alternative is saying “Hey! Something is up here!”

To test these hypotheses, researchers use various approaches:

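- Frequentist Approach: This is like the classic method. You gather your data and calculate a p-value: the probability of seeing results at least as extreme as yours if the null hypothesis were true. If that p-value falls below a threshold you picked in advance (typically 0.05), you reject the null hypothesis and call the result statistically significant.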

- Bayesian Approach: This one’s pretty cool because it incorporates prior knowledge and evidence into the testing process. Instead of just looking at p-values, you get to update your beliefs about your hypotheses based on new data.

- Likelihood Ratio Tests: Here, you compare how likely your data would be under both hypotheses (null and alternative). It’s kind of like weighing two competing ideas to see which one makes more sense given what you’ve observed.

- Permutation Tests: These are super helpful when traditional methods don’t fit well. You shuffle your data around to create a distribution of results under the null hypothesis and see where your actual result lands.
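
To make the shuffling idea behind permutation tests concrete, here is a minimal sketch using only Python's standard library. The plant heights are made-up numbers for illustration, and `permutation_test` is a hypothetical helper name, not a function from any particular package.

```python
import random
from statistics import mean

def permutation_test(group_a, group_b, n_permutations=5_000, seed=0):
    """Two-sided permutation test for a difference in means (illustrative sketch)."""
    rng = random.Random(seed)
    observed = mean(group_a) - mean(group_b)
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    at_least_as_extreme = 0
    for _ in range(n_permutations):
        # Under the null, group labels are arbitrary, so reshuffle them.
        rng.shuffle(pooled)
        diff = mean(pooled[:n_a]) - mean(pooled[n_a:])
        if abs(diff) >= abs(observed):
            at_least_as_extreme += 1
    # The add-one correction keeps the estimated p-value from being exactly zero.
    p_value = (at_least_as_extreme + 1) / (n_permutations + 1)
    return observed, p_value

# Made-up plant heights in cm, purely for illustration.
sunlight = [12.1, 13.4, 11.8, 14.0, 13.1, 12.7]
shade = [10.2, 11.0, 9.8, 10.9, 11.5, 10.4]

observed, p_value = permutation_test(sunlight, shade)
print(f"observed difference: {observed:.2f} cm, p-value: {p_value:.4f}")
```

The p-value here is simply the share of shuffles that produced a difference at least as large as the real one, which is why this approach needs so few assumptions about the data.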

Now here’s where things can get tricky. Sometimes researchers might face what’s called a “type I error,” which means they mistakenly reject a true null hypothesis—basically saying there’s an effect when there isn’t one. Oops! On the flip side, a “type II error” happens when they don’t reject a false null—missing out on an effect that actually exists.
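
One way to get a feel for both error types is to simulate lots of experiments where we already know the truth. The sketch below assumes normally distributed data with a known standard deviation of 1 and uses a simple two-sided z-test; the sample size, the effect size of 0.5, and the function names are all illustrative choices.

```python
import random
from statistics import NormalDist

def z_test_p_value(sample, mu0, sigma):
    """Two-sided z-test p-value, assuming the population sigma is known."""
    sample_mean = sum(sample) / len(sample)
    z = (sample_mean - mu0) / (sigma / len(sample) ** 0.5)
    return 2 * (1 - NormalDist().cdf(abs(z)))

def rejection_rate(true_mean, n_experiments=2000, n=30, alpha=0.05, seed=1):
    """Fraction of simulated experiments that reject the null 'mean == 0'."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(n_experiments):
        sample = [rng.gauss(true_mean, 1.0) for _ in range(n)]
        if z_test_p_value(sample, mu0=0.0, sigma=1.0) < alpha:
            rejections += 1
    return rejections / n_experiments

# Null is true (mean really is 0): every rejection is a type I error.
type_1_rate = rejection_rate(true_mean=0.0)

# Null is false (mean is 0.5): every failure to reject is a type II error.
type_2_rate = 1 - rejection_rate(true_mean=0.5)

print(f"type I error rate:  {type_1_rate:.3f}")
print(f"type II error rate: {type_2_rate:.3f}")
```

The type I rate should land close to the chosen threshold of 0.05, while the type II rate depends on how big the real effect is and how much data you collect.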

It reminds me of this time in high school science class when we were doing an experiment with plants under different light conditions. We thought plants would grow better in sunlight rather than darkness but ended up getting some funky results due to unexpected variables like soil quality. Our original idea got challenged big time!

The bottom line? Different approaches to hypothesis testing give scientists tools to navigate uncertainty in their research findings and refine their theories over time. It keeps science buzzing along because new evidence can reshape our understanding!

Exploring the Four Essential Methods for Hypothesis Testing in Scientific Research

When we’re digging into scientific research, one of the big deals is figuring out if our assumptions about how things work are actually true. This is where hypothesis testing comes into play, helping us make sense of all the messy data we collect. So, let’s break down four essential methods for that, alright?

- Null Hypothesis Significance Testing (NHST): This is probably the most common method you’ll run across. The idea here is simple: you start with a null hypothesis, which basically says there’s no effect or no difference. Then, you collect your data and perform a statistical test to see if you can reject that null hypothesis. If your p-value (the probability of getting data at least as extreme as yours when the null is true) is lower than a preset threshold, usually 0.05, you reject the null and call the finding statistically significant.

- Confidence Intervals: Instead of just looking at p-values, this method gives you a range of plausible values for the true effect. For example, if you’re measuring how much kids grow after a special diet and find a 95% confidence interval from 2 to 5 cm, the data are consistent with an average growth somewhere between 2 and 5 cm. The “95%” describes the procedure: if you repeated the study many times, about 95% of the intervals built this way would contain the true average. It’s pretty nifty because it shows not just whether an effect exists but how big it might be.

- Bayesian Hypothesis Testing: This one is a bit different from NHST and focuses on updating your beliefs based on evidence. Imagine you think a new teaching method might work better than an old one. You’d start with a prior belief about its effectiveness and then gather data to update that belief. The cool part? It gives you probabilities rather than just “yes or no” answers, so it feels more intuitive for some folks.

- Effect Size Assessment: While p-values tell us if something’s statistically significant, they don’t say much about *how important* those findings are in real life. That’s where effect size comes in! It quantifies the strength of a relationship or difference in your data—like saying diet A made kids grow an average of 4 cm more than diet B—not just whether that difference exists.
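
Here is a rough sketch of how a confidence interval and an effect size might be computed side by side. The growth numbers are invented, the interval uses a large-sample normal approximation (with samples this small, a t critical value would be the more careful choice), and Cohen's d is just one common effect size measure among several.

```python
from statistics import NormalDist, mean, stdev

def mean_diff_ci(a, b, confidence=0.95):
    """Normal-approximation confidence interval for the difference in means."""
    diff = mean(a) - mean(b)
    standard_error = (stdev(a) ** 2 / len(a) + stdev(b) ** 2 / len(b)) ** 0.5
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.96 for 95%
    return diff - z * standard_error, diff + z * standard_error

def cohens_d(a, b):
    """Cohen's d: difference in means divided by the pooled standard deviation."""
    n_a, n_b = len(a), len(b)
    pooled_var = ((n_a - 1) * stdev(a) ** 2 + (n_b - 1) * stdev(b) ** 2) / (n_a + n_b - 2)
    return (mean(a) - mean(b)) / pooled_var ** 0.5

# Made-up growth measurements in cm, purely for illustration.
diet_a = [7.1, 8.3, 6.9, 9.0, 7.8, 8.5, 7.4, 8.8, 7.9, 8.1]
diet_b = [4.2, 3.9, 5.1, 4.6, 3.5, 4.8, 4.0, 4.4, 5.0, 4.1]

diff = mean(diet_a) - mean(diet_b)
low, high = mean_diff_ci(diet_a, diet_b)
d = cohens_d(diet_a, diet_b)
print(f"difference: {diff:.2f} cm, 95% CI: ({low:.2f}, {high:.2f}), Cohen's d: {d:.2f}")
```

The interval answers "how big might the difference be?", while Cohen's d puts that difference on a scale relative to how much kids vary in the first place.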

When I first learned about these methods in class, I remember feeling totally overwhelmed by all the jargon and numbers flying around. But once I got into real experiments—and started analyzing my own data—the light bulb went on! Each method has its own strengths, right? Like NHST is super useful for making quick decisions, while Bayesian testing feels like having an ongoing conversation with your data.

So yeah, every research project might benefit from using a combination of these methods to get a fuller picture of what’s going on under the surface! When scientists dig deep like this, there’s often so much more happening than meets the eye.

Exploring the Various Types of Hypothesis Tests in Scientific Research

So, what kinds of hypothesis tests are out there? Hypothesis testing is a way for scientists to figure out if their ideas about the world hold water. Basically, you start with a guess, called a hypothesis, and then you test it using data to see whether the evidence supports it. Let’s break this down in a simple way.

The Null and Alternative Hypothesis

When we talk about hypothesis testing, two key players are the **null hypothesis (H0)** and the **alternative hypothesis (H1)**. The null is basically your starting point: it says there’s no effect or difference. Think of it like saying “the new coffee blend doesn’t taste any different from the regular.” The alternative, on the other hand, is what you’re usually hoping to find evidence for, like “this coffee does taste better.”

Types of Tests

There are various kinds of tests scientists can use depending on what they’re looking at. Here’s a quick rundown:

- Z-tests: Used when you know the population variance and your sample size is large (typically over 30). Imagine flipping a coin lots of times: for a fair coin you know how much the number of heads should bounce around, so you can tell whether your count is surprisingly far from half.

- T-tests: Perfect for smaller sample sizes or when you don’t know the population variance. A t-test compares means, like checking if students who study with music score differently than those who don’t.

- Chi-square tests: These are for categorical data—like figuring out whether there’s an association between two things. Think of checking if people prefer coffee over tea based on age groups.

- ANOVA (Analysis of Variance): This one’s useful if you’re comparing means across three or more groups. Say you want to check if studying methods affect exam scores across multiple classes.

- Mann-Whitney U test: This is a non-parametric test that compares differences between two independent groups when your data isn’t normally distributed. Kind of like judging which team had better performance in sports based on rankings rather than scores.
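
As a small worked example of one test from the list, here is a sketch of a chi-square test of independence for the coffee-versus-tea question. The survey counts are invented. To stay within the standard library, the p-value uses a shortcut that is only valid when the table has exactly 2 degrees of freedom (three age groups by two drinks); for other table shapes you would need a proper chi-square distribution function from a statistics library.

```python
import math

def chi_square_statistic(table):
    """Chi-square statistic and degrees of freedom for a contingency table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count if drink preference were independent of age group.
            expected = row_totals[i] * col_totals[j] / total
            stat += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return stat, dof

def p_value_two_dof(stat):
    """Chi-square tail probability; this closed form holds ONLY for 2 degrees of freedom."""
    return math.exp(-stat / 2)

# Made-up survey counts: [prefers coffee, prefers tea] per age group.
survey = [
    [30, 20],  # ages 18-29
    [25, 25],  # ages 30-49
    [15, 35],  # ages 50+
]

stat, dof = chi_square_statistic(survey)
p_value = p_value_two_dof(stat)
print(f"chi-square: {stat:.3f}, degrees of freedom: {dof}, p-value: {p_value:.4f}")
```

The statistic grows when the observed counts drift away from what you would expect if age and drink preference had nothing to do with each other.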

P-Values and Significance Levels

Now, once you’ve done your test, you’ll end up with something called a p-value. It tells you how likely it would be to see results at least as extreme as yours if the null hypothesis were true. If your p-value is less than 0.05 (the most commonly used cutoff), your results count as statistically significant! You have enough evidence to think there might be something more going on.
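
To see where a p-value actually comes from, here is a minimal sketch for a coin-flipping experiment. The null hypothesis is that the coin is fair, and the p-value adds up the probability of every outcome at least as far from "half heads" as the one observed. The 60-heads-in-100-flips scenario is a made-up example, and `fair_coin_p_value` is a hypothetical helper name.

```python
from math import comb

def fair_coin_p_value(heads, flips):
    """Exact two-sided p-value under the null hypothesis P(heads) = 0.5."""
    distance = abs(heads - flips / 2)
    # Count the head/tail sequences whose number of heads is at least as far
    # from flips / 2 as the observed count.
    extreme_sequences = sum(
        comb(flips, k)
        for k in range(flips + 1)
        if abs(k - flips / 2) >= distance
    )
    # Under a fair coin, each of the 2**flips sequences is equally likely.
    return extreme_sequences / 2 ** flips

p_60 = fair_coin_p_value(60, 100)
p_61 = fair_coin_p_value(61, 100)
print(f"60 heads out of 100: p = {p_60:.4f}")
print(f"61 heads out of 100: p = {p_61:.4f}")
```

With these numbers, 60 heads lands just above the 0.05 cutoff and 61 heads just below it, which is a good reminder of how arbitrary a hard threshold can be.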

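You know what gets me? Sometimes people take p-values too seriously without understanding what they really mean. Just because it’s under 0.05 doesn’t automatically make something true; it only says that data this extreme would be unusual if the null hypothesis were correct. It is not the probability that the null is true, and it says nothing about how big or important the effect is.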

The Importance of Context

It’s super important to keep context in mind when deciding what test to use or interpreting results! Things like sample size, variability among samples, and even how the data was collected can dramatically influence outcomes.

And let me tell ya—a bad choice in the type of test or misinterpreting p-values can lead researchers down some pretty wild paths that end up being misleading!

In short, different approaches within hypothesis testing help scientists take their guesses about the world and back them up with actual evidence from data collection and analysis. It’s all about finding out what’s real out there—or at least giving us a better shot at understanding it! So next time you’re sipping that new brew or reading about studies online, remember—it all comes down to these methodical checks that give research its credibility!

So, you’ve probably heard about hypothesis testing, right? It’s like the backbone of scientific research—the way we figure out if our ideas about how things work are on point or totally off-base. But let’s get real for a sec: not all scientists agree on how to go about it.

I remember sitting in a college lecture when my professor threw out two different approaches: frequentist and Bayesian. It was like watching two passionate sports fans argue over which team is better—you could feel the tension in the room! Frequentists focus on long-run frequencies of events. They’re all about p-values and confidence intervals, which sound super technical but basically help to determine if your results are statistically significant or just random chance. You know those classic “reject or fail to reject” decisions? That’s them.

On the other hand, there’s Bayes’ side of the world, which is kind of like the cool rebel at a party who doesn’t care for tradition. They use prior knowledge and update probabilities based on new evidence—a more dynamic approach, if you will. Imagine you’re tuning into a series finale of your favorite show—the first season gave you some clues, but each episode shifts your understanding of the plot as you get more information. That’s Bayes in action!
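
The "update as you go" idea can be sketched with a Beta-Binomial model, one of the simplest Bayesian setups. Everything here is an illustrative assumption: the uniform starting prior, the batches of made-up results (say, how often the dog sits on command), and the helper names.

```python
import random

def update_beta(alpha, beta, successes, failures):
    """Conjugate update: a Beta prior plus binomial data gives a Beta posterior."""
    return alpha + successes, beta + failures

def beta_mean(alpha, beta):
    """Mean of a Beta(alpha, beta) distribution."""
    return alpha / (alpha + beta)

# Start from a uniform prior: no opinion yet about the success rate.
alpha, beta = 1.0, 1.0

# Made-up batches of (successes, failures), arriving one "episode" at a time.
batches = [(7, 3), (6, 4), (8, 2)]
posterior_means = []
for successes, failures in batches:
    alpha, beta = update_beta(alpha, beta, successes, failures)
    posterior_means.append(beta_mean(alpha, beta))

# Monte Carlo estimate of the posterior probability that the rate exceeds 0.5.
rng = random.Random(0)
draws = [rng.betavariate(alpha, beta) for _ in range(20_000)]
prob_above_half = sum(draw > 0.5 for draw in draws) / len(draws)

print("posterior means after each batch:", [round(m, 3) for m in posterior_means])
print(f"estimated P(rate > 0.5): {prob_above_half:.3f}")
```

Notice that the output is a probability statement about the hypothesis itself, which is exactly what a p-value does not give you.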

Each method has its fans and critics. Some folks argue that frequentist methods can be too rigid—too black-and-white in a world where science feels more gray sometimes. Meanwhile, others say Bayesian methods can become subjective because they rely on personal beliefs before even starting an experiment. It’s kind of like trying to pick a movie: some people love horror flicks while others swear by rom-coms—it really just depends.

But here’s what strikes me as important: no matter which camp you’re in, these approaches aim for one thing—understanding reality better and making sense of our universe, piece by piece. So next time you’re caught up in some debate over data analysis or research findings, just remember: everyone is trying to crack life’s mysteries using their own toolkit! And maybe grab some popcorn while you’re at it; scientific discussions can get pretty intense!